Recommendations and Pitfalls for Looker in Kuberne...

Looker will not be updating this content and does not guarantee that everything is up to date.

Overview

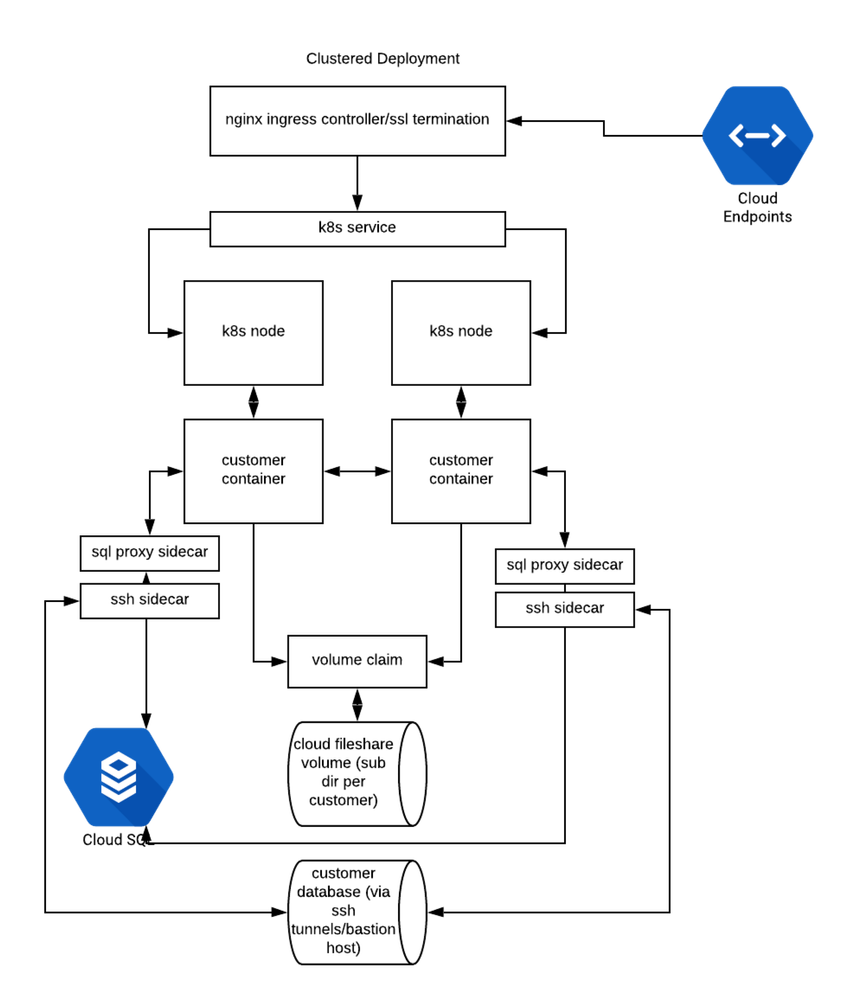

Deploying Looker usually involves database servers, load balancers, and multiple servers running the Looker application itself, as well as other ancillary software. Looker is packaged as a monolithic application distributed as a JAR file. Each node in a Looker cluster is a running instance of Looker, either on a Linux server or in a container.

Required components

The following components are required for a clustered Looker deployment:

- GKE: Google Kubernetes Engine, a managed Kubernetes service (running your own clusters is possible but not recommended)

- MySQL: the relational database system Looker uses as its "internal" database

- Shared file system: a shared, POSIX-compliant file system with multi-node access, on which all Looker cluster nodes store model data

- Looker container images: containers that package the Looker application JAR with the other required components and libraries

Google Kubernetes Engine (GKE)

Looker uses modern containerized environments to build a robust and easily managed platform. We rely on managed Kubernetes services from the major cloud providers (GCP, AWS, Azure) as the core of our container orchestration system.

Standard minimal GKE configuration:

- Regional private Kubernetes clusters with a minimum of one node per zone (three nodes total at a minimum).

- Google Cloud Filestore is used as the shared, multi-access file system for Looker cluster members.

- Google Container Registry for container hosting.

- Google Cloud Build for building and scanning containers.

- Kubernetes network policies (implemented with Calico).

- Minimum sizes (dependent on usage patterns; starting points only):

  - Looker nodes: 2 CPUs, 8 GB of RAM

  - MySQL: 1 CPU, 3.75 GB of RAM, 10 GB of SSD

    - Enabling automatic disk size increases is suggested.

  - Google Cloud Filestore: 1 TB (the current minimum allowed by GCP)

MySQL application database

Looker uses a transactional database (often called an internal database) to hold application data. In a clustered Looker configuration, each cluster node must use a common database.

- Only MySQL is supported for the application database for clustered Looker instances.

- Cloud SQL is used for Looker's internal database. High-availability configurations of the service are recommended.

- Google's second-generation high availability or primary/secondary replication is recommended.

- MySQL 5.7 is supported.

- The Cloud SQL Proxy is used to provide encrypted transport for MySQL.

- The database should be backed up at least daily.

- The database should be backed up before any cluster maintenance.
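As a minimal sketch, the Cloud SQL proxy can run as a sidecar container in each Looker pod, so that Looker connects to MySQL on `127.0.0.1:3306` while the proxy encrypts the connection to Cloud SQL. This assumes version 2 of the Cloud SQL Auth Proxy; the image tag, the instance connection name `my-project:us-central1:looker-db`, and the container names are hypothetical placeholders, and the credentials the proxy needs (for example, Workload Identity) are not shown.

```yaml
# Excerpt of the pod template (spec.template.spec) of the Looker Deployment.
containers:
  - name: looker                      # Looker container; full spec omitted here
    image: gcr.io/my-project/looker:example   # hypothetical image
  - name: cloud-sql-proxy
    # Cloud SQL Auth Proxy v2; pin a tag you have verified in the registry
    image: gcr.io/cloud-sql-connectors/cloud-sql-proxy:2.8.0
    args:
      - "--port=3306"                         # listen on localhost:3306 inside the pod
      - "my-project:us-central1:looker-db"    # hypothetical instance connection name
    securityContext:
      runAsNonRoot: true
    resources:
      requests:
        cpu: 100m
        memory: 128Mi
```

Because containers in a pod share a network namespace, the Looker container reaches the proxy on localhost; Looker's database credentials would then use host `127.0.0.1` and port `3306`.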

Looker cluster nodes

Each cluster node is a server or container running the Looker Java process. Network connectivity between the nodes is required for cluster communications.

- Ubuntu 18 is used as the base OS.

- Containers need memory and CPU beyond what Looker itself requires, for Chromium PDF rendering. The amount varies with customer workload.
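A sketch of how the 2 CPU / 8 GB starting point for Looker nodes might translate into a container spec, with a memory limit that leaves headroom for Chromium. The 12Gi limit and the image name are illustrative assumptions, not Looker recommendations; size them from observed PDF rendering load.

```yaml
# Excerpt of the Looker container spec in the pod template.
containers:
  - name: looker
    image: gcr.io/my-project/looker:example   # hypothetical image
    resources:
      requests:
        cpu: "2"          # starting point for a Looker node
        memory: 8Gi
      limits:
        # Headroom above the request for Chromium PDF rendering (illustrative value).
        # No CPU limit is set, so the container can use spare node CPU while rendering.
        memory: 12Gi
```

Keeping a memory limit means a runaway renderer gets the container OOM-killed instead of exhausting node memory, while leaving CPU unlimited avoids throttling during rendering bursts.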

Ingress controller

The NGINX ingress controller is used to route incoming requests to cluster nodes and to terminate SSL. Use of the Google Cloud Load Balancer (at Layer 7) is not recommended at this time.

- NGINX Ingress Controller running in the cluster.

- SSL termination is performed at the NGINX layer.

- Layer 7 routing is implemented at the NGINX layer to route traffic to the correct Looker port.

- The proxy must be configured correctly so that the query-killing subsystem works as expected:

  - Read timeout should be at least 3,600 seconds.

    - This value determines how long a query can run before it is killed.

  - Maximum request body size must be at least 100 MB.

Shared file system

- POSIX-compliant shared file system such as NFS (v3 or v4).

- Holds the data shared between cluster nodes.

- Minimum suggested size is 1 TB (current minimum size in GCP).
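If Google Cloud Filestore provides the share, one way to expose it to every Looker pod is a statically provisioned NFS PersistentVolume with `ReadWriteMany` access plus a matching claim. This is a sketch: the Filestore IP address `10.0.0.2`, the share name `looker_share`, and the object names are hypothetical.

```yaml
# PersistentVolume pointing at an existing Filestore share over NFS.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: looker-shared
spec:
  capacity:
    storage: 1Ti
  accessModes:
    - ReadWriteMany             # every Looker pod mounts the same share read-write
  persistentVolumeReclaimPolicy: Retain
  storageClassName: ""          # static binding; no dynamic provisioning
  nfs:
    server: 10.0.0.2            # hypothetical Filestore instance IP
    path: /looker_share         # hypothetical Filestore file share name
---
# Claim that Looker pods reference; bound explicitly to the volume above.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: looker-shared
  namespace: looker
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: ""
  volumeName: looker-shared
  resources:
    requests:
      storage: 1Ti
```

`Retain` keeps the Filestore data if the claim is deleted, which matters because model data on the share is not ephemeral.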

Containers

Containers with the Looker application and associated components:

- Ubuntu 18 as the base container OS.

- Looker application JAR, Looker 7.0 or above.

- Google Chrome:

  - Latest stable version.

  - Must be linked as `/usr/local/bin/chromium`.

- Any additional database drivers (JDBC connectors) must be included in the container build.

Setting up the cluster

Follow the clustering setup guide on the Clustering Looker documentation page. This guide is not Kubernetes-specific. The components that must exist for the cluster to function are:

- MySQL database

- Looker containers

- Shared file system

- Kubernetes components (Looker service, ingress, Looker deployment, replica sets, etc.)

Kubernetes specifics

Kubernetes features and objects used:

- Secrets mounted as files can be used to share configuration data across Looker instances.

- Deployment objects are used to define Looker pods, replica sets, etc.

- Ingress objects configure the NGINX ingress controller.

- A Kubernetes Service is used to balance traffic across pods.

- SSL certificates and keys are stored as Kubernetes Secrets for the ingress.

- Replica sets are used to control scaling.
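The following sketch shows two of these objects: a Secret holding Looker's database credentials file, to be mounted into each pod as a file, and a Service that balances traffic across Looker pods on both Looker ports. The credentials-file fields follow the format Looker documents for an external MySQL database, but verify them against your Looker version; the `app: looker` label, the Secret name, and the credential values are hypothetical.

```yaml
# Secret holding Looker's database credentials file; mounted into pods as a file.
apiVersion: v1
kind: Secret
metadata:
  name: looker-db               # hypothetical name
  namespace: looker
type: Opaque
stringData:
  looker-db.yml: |
    dialect: mysql
    host: 127.0.0.1
    port: 3306
    username: looker
    password: change-me
    database: looker
---
# Service that balances traffic across Looker pods on both Looker ports.
apiVersion: v1
kind: Service
metadata:
  name: example                 # matches the service name in the Ingress example
  namespace: looker
spec:
  selector:
    app: looker                 # hypothetical pod label
  ports:
    - name: web
      port: 9999
      targetPort: 9999
    - name: api
      port: 19999
      targetPort: 19999
```

Mounting the Secret as a file (rather than as environment variables) lets every Looker instance read the same credentials file path at startup.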

Networking

Networking requirements for clustered Looker:

- Egress from the cluster:

  - Git repository (port 22/SSH)

  - External mail relay (port 587, SMTP with TLS, if using SendGrid)

  - Zopim (ports 80/443, for chat support)

  - Direct connections to all customer databases

  - SSH for database connection tunneling (deployment/configuration dependent)

- Kubernetes network policy for cluster nodes:

  - Destination TCP ports 1551 and 61616, at a minimum, between nodes

  - Ports 9999 and 19999 from the Kubernetes service layer

  - Ideally, all ports should be open between cluster nodes.
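A minimal NetworkPolicy sketch for the cluster-node rules, assuming Looker pods carry a hypothetical `app: looker` label and the NGINX ingress controller runs in a namespace named `ingress-nginx` (Kubernetes 1.21 and later set the `kubernetes.io/metadata.name` namespace label automatically). This policy does not restrict egress, so the outbound destinations above need no extra rules here.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: looker-cluster
  namespace: looker
spec:
  podSelector:
    matchLabels:
      app: looker                 # hypothetical pod label
  policyTypes:
    - Ingress
  ingress:
    # Node-to-node clustering traffic between Looker pods
    - from:
        - podSelector:
            matchLabels:
              app: looker
      ports:
        - protocol: TCP
          port: 1551
        - protocol: TCP
          port: 61616
    # Web and API traffic from the NGINX ingress controller
    - from:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: ingress-nginx   # assumed controller namespace
      ports:
        - protocol: TCP
          port: 9999
        - protocol: TCP
          port: 19999
```

To open all ports between cluster nodes, as suggested above, the `ports` list can be removed from the first rule.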

Updating a cluster to a new Looker release

Updates may involve schema changes to Looker's internal database that are not compatible with previous versions of Looker. Standard Looker update procedure:

- Take database snapshots before any version change or cluster restart, in case of database corruption.

- When changing Looker versions, the cluster must be scaled down to a single node, updated, and scaled back up only once that node is ready:

  - Scale the cluster down to a single node.

  - Update the Looker deployment object to use the new version of Looker.

    - Ideally, this is a new container built with the new version of Looker.

  - Allow the new Looker node to complete startup.

    - The `/availability` endpoint can be used to determine whether Looker is ready to serve traffic.

  - Scale the Looker cluster back up to the required number of nodes.
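A sketch of the Deployment fields involved in the first two steps, assuming a Deployment named `looker` and a hypothetical image path for the new release. The `Recreate` strategy is our assumption, not part of the procedure above: it terminates the existing pod before the new version starts, so old and new Looker versions never run against the internal database at the same time.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: looker
  namespace: looker
spec:
  replicas: 1                   # scaled down to a single node for the version change
  strategy:
    type: Recreate              # stop the old pod before starting the new version
  selector:
    matchLabels:
      app: looker               # hypothetical pod label
  template:
    metadata:
      labels:
        app: looker
    spec:
      containers:
        - name: looker
          image: gcr.io/my-project/looker:new-version   # hypothetical image for the new release
```

Once the new pod passes its readiness probe, raising `replicas` back to the target count completes the last step.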

Additional notes

- The `/availability` endpoint is used for both liveness probes and readiness probes.

- All data that is not ephemeral must be stored on the shared file system. No logging data (or similar data) should be stored on the cluster nodes themselves.

- Looker is configured to log only to STDOUT and STDERR, allowing for log capture (`--no-daemonize`, `--no-log-to-file`).

- Google Stackdriver can be used for log capture and analysis.

- Ensure that structured (JSON) logging is enabled in Looker (`--log-format=json`).

- Long initial delays on liveness/readiness probes give Looker time to start up.

- Set maintenance windows for all Google services in use.

- Ensure that the hostname (as passed to Looker in the clustering arguments) is resolvable inside the Kubernetes cluster. If it is not, you will need to use the IP address of the container instead (for example, `IPSocket.getaddress(Socket.gethostname)`).

- Static IP addresses are used for cluster ingress and egress (Cloud NAT and ingress).

- HTTPS is used between NGINX and the Looker instances themselves, via a self-signed certificate.

- All deployments should start with a single node (replica) and then be scaled out.

- Dynamic scaling is not recommended (especially scaling down). It can cause database corruption or abort scheduled jobs.

- Sidecars that create SSH tunnels are used when access to external databases is required.

  - Note: this is generally done only when TLS is not available for database transport.
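A sketch of a Looker container spec that ties several of these notes together: logging flags, the pod IP as the cluster hostname, `/availability` probes with long initial delays, and file mounts for the credentials Secret and the shared file system. The logging flags come from the notes above; `--clustered`, `-H`, `-d`, and `--shared-storage-dir` are taken from Looker's clustering documentation and should be checked against your release. The image entrypoint, probe port and timings, mount paths, and object names are all assumptions.

```yaml
# Excerpt of spec.template.spec for the Looker Deployment.
containers:
  - name: looker
    image: gcr.io/my-project/looker:example    # hypothetical image
    env:
      # Pod IP from the Downward API, for when pod hostnames are not resolvable
      - name: POD_IP
        valueFrom:
          fieldRef:
            fieldPath: status.podIP
    # Assumes the image entrypoint passes these arguments to Looker's start command
    args:
      - "--no-daemonize"
      - "--no-log-to-file"
      - "--log-format=json"
      - "--clustered"
      - "-H"
      - "$(POD_IP)"                            # expanded by Kubernetes from the env var above
      - "-d"
      - "/etc/looker/looker-db.yml"
      - "--shared-storage-dir"
      - "/mnt/looker-shared"
    ports:
      - containerPort: 9999
      - containerPort: 19999
      - containerPort: 1551
      - containerPort: 61616
    readinessProbe:
      httpGet:
        path: /availability
        port: 9999                             # assumed port for the endpoint
        scheme: HTTPS                          # kubelet skips certificate verification
      initialDelaySeconds: 300                 # illustrative startup allowance
      periodSeconds: 10
    livenessProbe:
      httpGet:
        path: /availability
        port: 9999
        scheme: HTTPS
      initialDelaySeconds: 600                 # longer, so slow startup is not treated as failure
      periodSeconds: 30
      failureThreshold: 5
    volumeMounts:
      - name: looker-db
        mountPath: /etc/looker
        readOnly: true
      - name: looker-shared
        mountPath: /mnt/looker-shared
volumes:
  - name: looker-db
    secret:
      secretName: looker-db                    # hypothetical Secret containing looker-db.yml
  - name: looker-shared
    persistentVolumeClaim:
      claimName: looker-shared                 # hypothetical ReadWriteMany claim
```

Because the kubelet does not verify certificates on HTTPS probes, the self-signed certificate used between NGINX and Looker does not break the health checks.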

Example configs

Ingress

```yaml
# Ingress API version served by Kubernetes 1.19 and later
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/backend-protocol: HTTPS
    nginx.ingress.kubernetes.io/proxy-body-size: 100m
    nginx.ingress.kubernetes.io/proxy-buffer-size: 128k
    nginx.ingress.kubernetes.io/proxy-buffering: "on"
    nginx.ingress.kubernetes.io/proxy-buffers-number: "4"
    nginx.ingress.kubernetes.io/proxy-read-timeout: "3600"
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
  labels:
    hostname: example
  name: example
  namespace: looker
spec:
  # Selects the NGINX ingress controller (replaces the kubernetes.io/ingress.class annotation)
  ingressClassName: nginx
  rules:
    - host: example.some.fqdn
      http:
        paths:
          # Web UI and internal API paths route to port 9999
          - path: /
            pathType: Prefix
            backend:
              service:
                name: example
                port:
                  number: 9999
          - path: /api/internal/
            pathType: Prefix
            backend:
              service:
                name: example
                port:
                  number: 9999
          # Public API paths route to port 19999
          - path: /versions
            pathType: Prefix
            backend:
              service:
                name: example
                port:
                  number: 19999
          - path: /api/
            pathType: Prefix
            backend:
              service:
                name: example
                port:
                  number: 19999
          - path: /api-docs/
            pathType: Prefix
            backend:
              service:
                name: example
                port:
                  number: 19999
  tls:
    - hosts:
        - example.some.fqdn
      secretName: secret-containing-ssl-cert-and-key
```
