Using Amazon Redshift’s new Spectrum Feature

At the AWS Summit on Wednesday, April 19th, 2017, Amazon announced a new Redshift feature called Spectrum, and you can use it from within Looker.

Spectrum significantly extends the functionality and ease of use of Redshift by letting users query data stored in S3 without loading it into Redshift first. You can even join S3 data to data stored in Redshift, and the Redshift optimizer plans both the S3 and Redshift portions of the query for performance.

Prior to Spectrum, your queries were limited to the storage and compute resources dedicated to your Redshift cluster. Spectrum lets you query data stored in S3 using resources that are not tied to your cluster: AWS allocates them dynamically based on the requirements of each query. Perhaps most importantly, using Spectrum is a seamless experience for end users.

Pricing for data stored in and queried from your existing Redshift cluster does not change. Queries against data stored in S3 are charged per query, based on the amount of data scanned. At the time of writing, the cost is $5 per terabyte scanned.
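As a rough illustration of per-query pricing, the sketch below estimates the Spectrum charge for a query from the number of bytes it scans in S3, using the $5-per-terabyte rate quoted in this post. It assumes 1 TB = 10^12 bytes and ignores any per-query minimum or rounding AWS may apply; the function name is hypothetical, and current rates should be checked on the AWS pricing page.

```python
# Rate quoted in this post (April 2017); it may have changed since.
PRICE_PER_TB_USD = 5.0
# Assumption: decimal terabyte (10**12 bytes).
BYTES_PER_TB = 10**12


def estimate_spectrum_cost(bytes_scanned: int, price_per_tb: float = PRICE_PER_TB_USD) -> float:
    """Rough cost estimate (USD) for the S3 data scanned by one Spectrum query.

    Ignores any per-query minimum or rounding that AWS may apply.
    """
    if bytes_scanned < 0:
        raise ValueError("bytes_scanned must be non-negative")
    return bytes_scanned / BYTES_PER_TB * price_per_tb


if __name__ == "__main__":
    # A hypothetical query that scans 200 GB of S3 data.
    print(f"${estimate_spectrum_cost(200 * 10**9):.2f}")
```

Scanning 200 GB works out to about $1.00 under these assumptions, which is why storing S3 data in a compact, partitioned layout tends to matter for cost.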

In order to use Spectrum, you’ll need to be running at least version 1.0.1294 of Redshift. To verify which version of Redshift you are using, run this command from psql:

```sql
select version();
```
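If you want to check the version from a script instead of by eye, the sketch below pulls the build number out of the string returned by `select version();` and compares it with 1.0.1294. It assumes the string contains a token of the form `Redshift X.Y.Z`; the function names are hypothetical.

```python
import re

# Minimum Redshift version that supports Spectrum, per this post.
MIN_SPECTRUM_VERSION = (1, 0, 1294)


def parse_redshift_version(version_string: str) -> tuple:
    """Extract (major, minor, build) from the output of `select version();`.

    Assumes the string contains a token like 'Redshift 1.0.1294'.
    """
    match = re.search(r"Redshift (\d+)\.(\d+)\.(\d+)", version_string)
    if match is None:
        raise ValueError(f"no Redshift version found in {version_string!r}")
    return tuple(int(part) for part in match.groups())


def supports_spectrum(version_string: str) -> bool:
    # Compare numerically, component by component: a plain string comparison
    # would wrongly rank '1.0.999' above '1.0.1294'.
    return parse_redshift_version(version_string) >= MIN_SPECTRUM_VERSION
```

For example, a string ending in `Redshift 1.0.1294` makes `supports_spectrum` return `True`, while one ending in `Redshift 1.0.999` makes it return `False`.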

Looker release 4.14 offers full support for Redshift Spectrum. Earlier versions of Looker can also support Spectrum, but some manual steps are required. If you are interested in running Spectrum on an older version of Looker, please contact your Customer Success Manager for details.

At the time of writing, Redshift can only access S3 data that is in the same AWS region as the Redshift cluster.

In order for Redshift to access the data in S3, you’ll need to complete the following steps:

1. Create an IAM Role for Amazon Redshift.

2. Associate the IAM Role with your cluster.

3. Create an External Schema.

4. Create some external tables.

5. Query your tables.
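For step 1, the IAM role needs a trust policy that allows the Redshift service to assume it. The sketch below builds that standard trust policy document. The role also needs permissions to read your S3 data and to use the Athena Data Catalog, which are not shown here. The helper name is hypothetical.

```python
import json


def redshift_trust_policy() -> str:
    """Return a JSON trust policy that lets Amazon Redshift assume an IAM role."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # The Redshift service is the principal allowed to assume the role.
                "Principal": {"Service": "redshift.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }
    return json.dumps(policy, indent=2)


if __name__ == "__main__":
    print(redshift_trust_policy())
```

You can paste the printed document into the IAM console when creating the role, or pass it as the `AssumeRolePolicyDocument` argument to boto3's `iam` client `create_role` call.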

Details of all of these steps can be found in Amazon’s article “Getting Started With Amazon Redshift Spectrum”.

Creating an external schema requires an existing Hive Metastore (for example, one you already use with EMR) or an Athena Data Catalog. An Athena Data Catalog is easy to set up and free to create. For more information on getting started with Athena, please refer to Amazon’s article “What is Amazon Athena”. The database specified in your “create external schema” statement must already exist in Athena or Hive.

To create an external schema using the IAM role created in step 1 above, run the following commands from psql:

```sql
create external schema if not exists s3
from data catalog database 'default' region 'us-west-2'
iam_role 'arn:aws:iam::1111222233334444:role/my-redshift-role';

GRANT USAGE on SCHEMA s3 to <username that will be connecting>;
```

To create an external table in the newly created external schema, run the following command from psql:

```sql
CREATE EXTERNAL TABLE s3.inventory_items (
  id int,
  product_id int,
  created_at timestamp,
  sold_at timestamp,
  cost double precision,
  product_category varchar(256),
  product_name varchar(256),
  product_brand varchar(256),
  product_retail_price double precision,
  product_department varchar(256),
  product_sku varchar(256),
  product_distribution_center_id int
)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
STORED AS TEXTFILE
LOCATION 's3://my-test-data/inventory_items/';
```
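Because the table is declared with `ROW FORMAT DELIMITED FIELDS TERMINATED BY ','` and `STORED AS TEXTFILE`, the files under the `LOCATION` prefix are expected to be plain comma-separated lines in the same column order as the DDL, with no header row. Hive-style delimited text does not treat quotes specially, so a comma inside a value would shift the columns. The sketch below writes rows in that shape and rejects values containing the delimiter or a newline. The `YYYY-MM-DD HH:MM:SS` timestamp layout is an assumption, the sample row is invented, NULL handling is left out, and uploading the file to S3 is not shown.

```python
import io
from datetime import datetime
from typing import Iterable, Sequence, TextIO, Union

# Value types this sketch knows how to write (no NULL handling).
Value = Union[int, float, str, datetime]

DELIMITER = ","  # must match FIELDS TERMINATED BY in the DDL


def format_value(value: Value) -> str:
    """Render one field for a Hive-style delimited text file."""
    if isinstance(value, datetime):
        # Assumed timestamp layout: YYYY-MM-DD HH:MM:SS
        return value.strftime("%Y-%m-%d %H:%M:%S")
    text = str(value)
    # Delimited text has no quoting, so a comma or newline would corrupt the row.
    if DELIMITER in text or "\n" in text:
        raise ValueError(f"value contains the delimiter or a newline: {text!r}")
    return text


def write_rows(rows: Iterable[Sequence[Value]], out: TextIO) -> None:
    """Write rows with fields in DDL column order and no header line."""
    for row in rows:
        out.write(DELIMITER.join(format_value(v) for v in row) + "\n")


if __name__ == "__main__":
    # Invented sample row matching the 12 columns of s3.inventory_items.
    sample = (1, 101, datetime(2017, 4, 19, 9, 30), datetime(2017, 4, 20, 14, 0),
              12.5, "Jeans", "Slim Fit Jeans", "Acme", 39.99, "Men", "SKU123", 1)
    buffer = io.StringIO()
    write_rows([sample], buffer)
    print(buffer.getvalue(), end="")
```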

To query data from the newly created external table, you’ll need to specify the name of the external schema when referencing the table. From psql or the Looker SQL Runner utility, run the following command:

```sql
SELECT product_name, product_category, product_brand FROM s3.inventory_items;
```

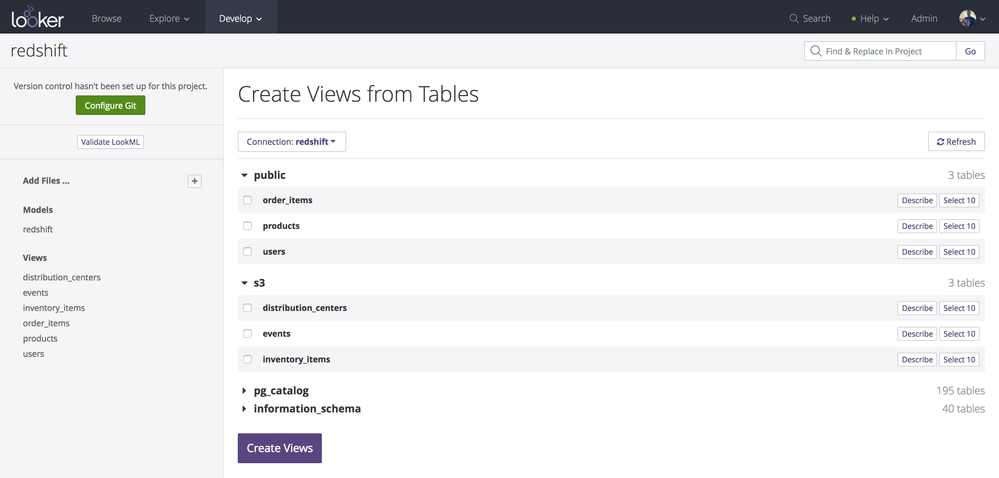

To access the Spectrum tables from within Looker, use the “Create Views from Tables” option as you normally would and look for the name of your external schema.

You can now access S3 data using Redshift Spectrum, including joining S3 data to data stored in Redshift itself, with the Redshift optimizer handling both the S3 and Redshift portions of the query.

References

- Amazon Redshift - Cloud Data Warehouse - Amazon Web Services
- Getting Started With Amazon Redshift Spectrum: http://docs.aws.amazon.com/redshift/latest/dg/c-getting-started-using-spectrum.html
- What is Amazon Athena: http://docs.aws.amazon.com/athena/latest/ug/what-is.html


So so good, this makes half our ETL process redundant. My only worry is the bill!
