- Looker

- Looker Forums

- Modeling

- Update(write-back) data on BigQuery from Looks?

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Is there a way to let users update data on DB right from looks?

I read about actions here but I found it very abstract.

Can someone please explain the process for this?

Thanks,

Krish.

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Krishna,

I’ve been able to write data from Looker to BigQuery using both Data Actions as well as the Looker Action Hub. In either case, you’ll need to push data from Looker to some middleware that will interpret the webhook from Looker and perform the necessary operations to then stream the data to BigQuery.

Luckily, Google has a great service called Google Cloud Functions that makes this really easy. Like AWS’s Lambda, Cloud Functions let you deploy code that gets executed based off of some event. With a data action, you can push JSON containing data from Looker as well as user-defined form parameters to a Cloud Function endpoint. The Cloud Function then parses the JSON, extracts the relevant values, and calls on the BigQuery SDK to stream the results to BigQuery.

Here’s a quick overview of how to use Cloud Functions to stream data from Looker to BigQuery. In this example, we’ll create a data action and cloud function that lets an end user persist an annotation to BigQuery:

Create the Data Action

In this example, we’re going to attach a data action to field, and allow end-users to mark whether or not a name is a cool name.

dimension: name {

type: string

sql: ${TABLE}.name ;;

action: {

label: "Cool Name?"

url: ""

param: {

name: "name"

value: "{{ value }}"

}

form_param: {

name: "annotation"

type: select

label: "Cool name?"

default: "No"

description: "Do you think that this name is a cool name?"

option: {

name: "No"

}

option: {

name: "Yes"

}

}

}

}

Note: We’re going to leave the url blank for now. Once we’ve spun up the cloud function we’ll paste the endpoint in.

Configure the Cloud Function

- Follow the first three steps here to select your GCP project and enable API access for Cloud Functions and BigQuery.

- Navigate to https://console.cloud.google.com/functions and ensure that you’ve selected the same project in which BigQuery resides.

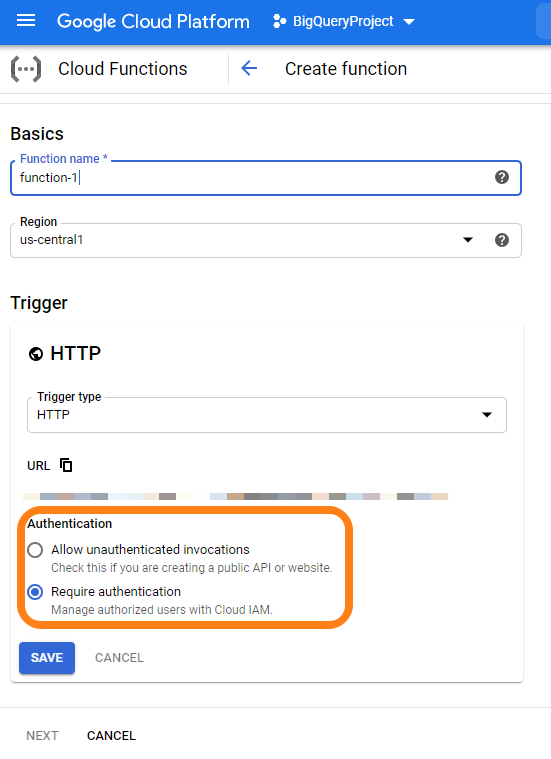

- Click Create Function, and give it a name. Select a memory allocation (in my experience, you can select the minimum for this type of operation).

- Select HTTP as your trigger

- Select your preferred runtime (for this example, I will use Python 3.7, but versions of Node.js are also supported).

Creating your Cloud Function

We’re now going to write a simple Python function that writes the user selected annotation to BigQuery, and place it in main.py

import google.cloud.bigquery as bigquery

import datetime

import time

def annotation(request):

r = request.get_json() # Fetch the data action JSON

client = bigquery.Client()

dataset_id = '' # Replace with name of the BQ dataset

table_id = '' # replace with your table ID

table_ref = client.dataset(dataset_id).table(table_id)

table = client.get_table(table_ref) # API request

# request variables

name = r['data']['name']

annotation = r['form_params']['annotation']

# system variables

sys_time = int(time.time())

row_to_insert = [

(

name,

annotation,

datetime.datetime.fromtimestamp(sys_time).strftime('%Y-%m-%d %H:%M:%S')

)

]

row = client.insert_rows(table, row_to_insert) # API request to insert row

return '{"looker": {"success": true,"refresh_query": true}}' # return success response to Looker

Additional things to configure:

- The ‘Function to Execute’ is annotation

- Make sure to include a line for

google-cloud-bigquery==1.5.0inrequirements.txt - Click the ‘Trigger’ tab and copy the URL. Paste this in the action that you setup in the first step.

The End Result:

Caveats:

- Right now, this function is open to the internet, so anyone with your Cloud Function URL can write data to your BigQuery instance. Consider adding in some checks/validation that the request is coming from Looker.

- For a more comprehensive and secure approach, consider adapting this using the Action Hub framework. I can provide more detail on this if you’re interested.

Cheers!

Jesse

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Krishna,

I’ve been able to write data from Looker to BigQuery using both Data Actions as well as the Looker Action Hub. In either case, you’ll need to push data from Looker to some middleware that will interpret the webhook from Looker and perform the necessary operations to then stream the data to BigQuery.

Luckily, Google has a great service called Google Cloud Functions that makes this really easy. Like AWS’s Lambda, Cloud Functions let you deploy code that gets executed based off of some event. With a data action, you can push JSON containing data from Looker as well as user-defined form parameters to a Cloud Function endpoint. The Cloud Function then parses the JSON, extracts the relevant values, and calls on the BigQuery SDK to stream the results to BigQuery.

Here’s a quick overview of how to use Cloud Functions to stream data from Looker to BigQuery. In this example, we’ll create a data action and cloud function that lets an end user persist an annotation to BigQuery:

Create the Data Action

In this example, we’re going to attach a data action to field, and allow end-users to mark whether or not a name is a cool name.

dimension: name {

type: string

sql: ${TABLE}.name ;;

action: {

label: "Cool Name?"

url: ""

param: {

name: "name"

value: "{{ value }}"

}

form_param: {

name: "annotation"

type: select

label: "Cool name?"

default: "No"

description: "Do you think that this name is a cool name?"

option: {

name: "No"

}

option: {

name: "Yes"

}

}

}

}

Note: We’re going to leave the url blank for now. Once we’ve spun up the cloud function we’ll paste the endpoint in.

Configure the Cloud Function

- Follow the first three steps here to select your GCP project and enable API access for Cloud Functions and BigQuery.

- Navigate to https://console.cloud.google.com/functions and ensure that you’ve selected the same project in which BigQuery resides.

- Click Create Function, and give it a name. Select a memory allocation (in my experience, you can select the minimum for this type of operation).

- Select HTTP as your trigger

- Select your preferred runtime (for this example, I will use Python 3.7, but versions of Node.js are also supported).

Creating your Cloud Function

We’re now going to write a simple Python function that writes the user selected annotation to BigQuery, and place it in main.py

import google.cloud.bigquery as bigquery

import datetime

import time

def annotation(request):

r = request.get_json() # Fetch the data action JSON

client = bigquery.Client()

dataset_id = '' # Replace with name of the BQ dataset

table_id = '' # replace with your table ID

table_ref = client.dataset(dataset_id).table(table_id)

table = client.get_table(table_ref) # API request

# request variables

name = r['data']['name']

annotation = r['form_params']['annotation']

# system variables

sys_time = int(time.time())

row_to_insert = [

(

name,

annotation,

datetime.datetime.fromtimestamp(sys_time).strftime('%Y-%m-%d %H:%M:%S')

)

]

row = client.insert_rows(table, row_to_insert) # API request to insert row

return '{"looker": {"success": true,"refresh_query": true}}' # return success response to Looker

Additional things to configure:

- The ‘Function to Execute’ is annotation

- Make sure to include a line for

google-cloud-bigquery==1.5.0inrequirements.txt - Click the ‘Trigger’ tab and copy the URL. Paste this in the action that you setup in the first step.

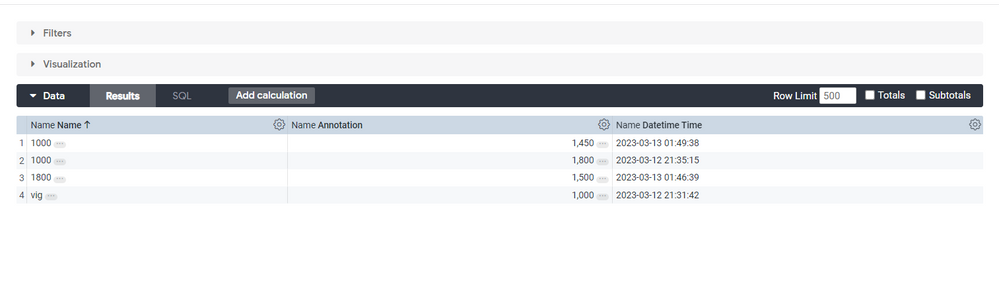

The End Result:

Caveats:

- Right now, this function is open to the internet, so anyone with your Cloud Function URL can write data to your BigQuery instance. Consider adding in some checks/validation that the request is coming from Looker.

- For a more comprehensive and secure approach, consider adapting this using the Action Hub framework. I can provide more detail on this if you’re interested.

Cheers!

Jesse

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you so much Jesse!

Going to try this now!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yeah, let me know how it goes! Happy to discuss further.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Is it possible to push all the data this way into BigQuery? This would be super useful to create custom segments of users for example.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello Jesse!

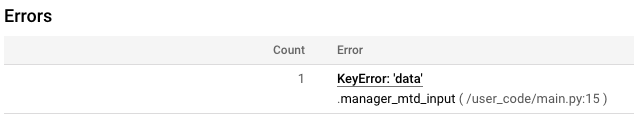

I followed the exact process, but I am seeing an error on Cloud Functions:

NameError: name ‘bigquery’ is not defined

I included google-cloud-bigquery==1.5.0 in requirements.txt but still am seeing the same error.

EDIT:

Fixed this by adding “from google.cloud import bigquery”

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Krish, good catch. It looks like I didn’t copy over the first line of the cloud function when I pasted it in. Did you get the action to work?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Dimitri – yes, that is totally possible, but we’ll need to leverage the Action Hub framework to push all results to BigQuery. I’ll take that as a challenge and try to get you a working example sometime next week.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

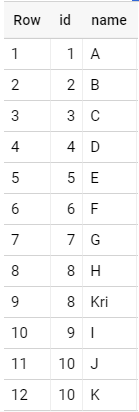

Hey Jesse,

Yes I finally made it work. But there is one issue (which I am trying to fix).

Data is being duplicated whenever I try to update. Any idea why that’s happening?

I tried updating rows with ids 8 and 10, and you can see data being duplicated

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Amazing, thank you @jesse_carah! Looking forward to it.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Krish,

Check out this discussion about the append-only nature of BigQuery.

I think the best move here is to have your Cloud Function insert a timestamp when each record is created, and create a view that selects only the most recent record. One approach to doing that is explained here.

Cheers!

Jesse

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you, Jesse!

I now have a much better picture of this!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Dimitri,

I just wanted to update you that I’m making progress, but my goal to getting a working POC up and running this week was perhaps too bold.

In the meantime, could you elaborate on the specific use-case? What sort of data are you trying to push back to BigQuery?

Cheers,

Jesse

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@jesse_carah Whenever people construct a view which represents a segment, e.g. “all user_ids who did xyz and live in London”. I would like to enable them to save this list of user_ids as a segments so that it can be used in other dashboards/analysis. The only missing part is to be able to save the output of an explore into an existing BigQuery table. This way everyone would be able to create custom (arbitrary complicated) segments. Hope that makes sense!?

I think this a very common analytical pattern in general!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Dimitri_Masin – I got this working 🙂 . I’m going to make a post this afternoon describing how to get this up and running.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Dimitri_Masin check out the new post here. Let me know if you have any questions.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

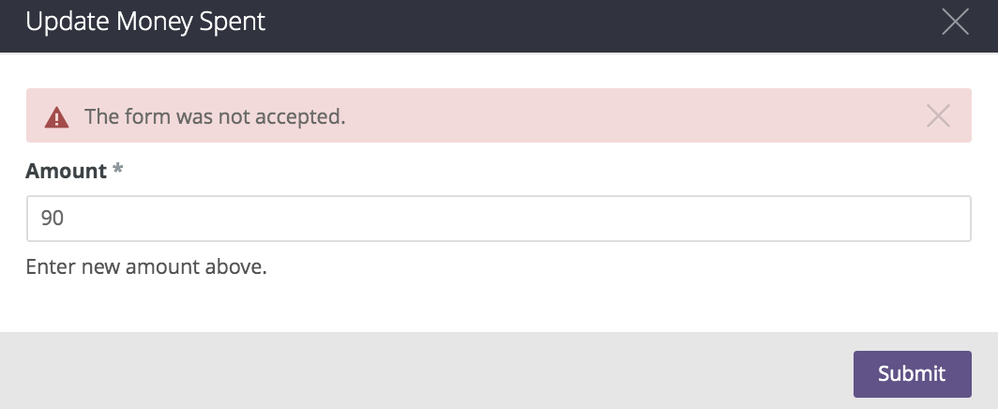

Hey @hwimoon let’s take your question here:

Hi Jesse,

I have been trying to follow your instructions on using Data Action literally step by step, and Looker keeps telling me that “the form was not accepted”. What does the error mean and how can I get around this?

Please let me know! Solving this would be phenomenal for me…!

Thank you,

Hwi Moon

I’m curious if you can see anything in the Cloud Function logs indicating whether the action completed correctly. My code snippet doesn’t do error handling all that well 😛. My guess is that the function is failing and returning some exit condition that Looker doesn’t understand.

Take a look at the logs and let me know what you see – you can access them directly in the cloud function console.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for your reply! I have just try to re-run and see if I get anything in the log, but there are no new entries. I think I messed up somewhere in LookML(URL) or configuration/permissions. But I think I followed everything 100% in your instructions… and very confused!

The error message is as shown…

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@hwimoon please share your LookML. You can redact anything sensitive if you’d like.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

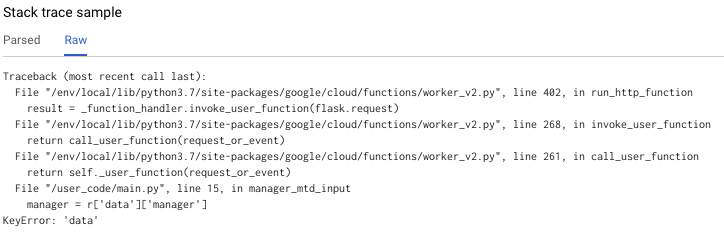

@jesse_carah Sorry for the late post - missed the notification for some reason.

I think there is an error in the cloud function (Python 3.7). It seems to me that my “r=request.get_json()” does not have any keys or values in it, causing errors when I do anything like r[‘data’] or r[‘form_params’].

I could not figure out why - please help!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@hwimoon Very weird! If you add in print(r) below the r = ... line, you’ll be able to observe what Looker’s sending over in the logs. For example, my function is parsing a dictionary that looks like this:

{'type': 'cell', 'scheduled_plan': None, 'attachment': None, 'data': {'value': 'Emma', 'rendered': 'Emma', 'name': 'Emma'}, 'form_params': {'annotation': 'Yes'}}

Give that a spin and let me know what you see. Also, go ahead and share the LookML for your action with the endpoint redacted.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It seems that the variable “r” is empty (i.e., {}). Very weird.

Here is my LookML code:

dimension: managers {

label: "Manager or BM"

type: string

sql: ${TABLE}.ManagerName ;;

action: {

label: "Test"

url: "https://us-west2-XXXXX.cloudfunctions.net/manager_mtd_input_test"

param: {

name: "manager"

value: "{{ value }}"

}

form_param: {

name: "performance"

type: string

label: "Enter Performance"

}

}

}- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I just checked out the link and it does seem like the data is flowing through. At this point, I would think the request is being rejected by the Cloud Function (GCP), which is causing the request to be empty. Do you think it could be some permission related problem?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

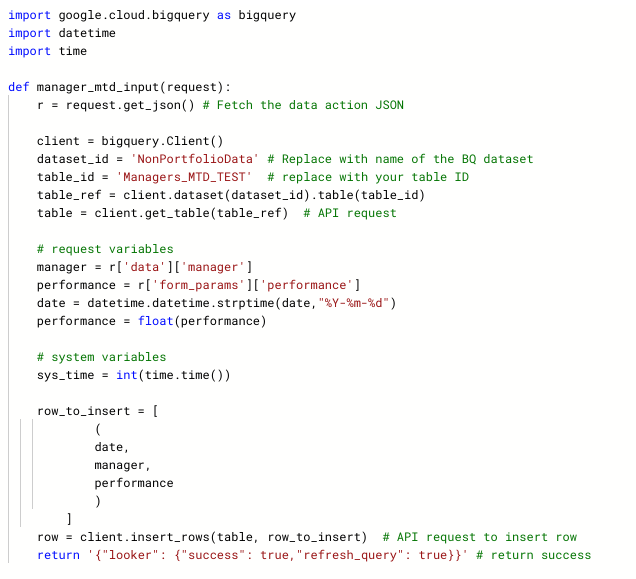

Here’s one possibility - Between the time the original article was written and now, GCP has changed some defaults for newly deployed Cloud Functions. You’ll want to make sure that you have opted-in to a publicly accessible function.

See here for more details: https://cloud.google.com/functions/docs/securing/managing-access-iam#allowing_unauthenticated_functi...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Fabio, thank you so much for your reply! I should have continued to share my progress. I was able to get my Cloud Function working by checking the checkbox “Allow unauthenticated invocations” as shown below when first creating the function.

Very excited that it is working now - however, the word “unauthenticated” concerns me a little bit. It seems that anybody with the trigger URL is able to write to our data, is that correct? If so, how do people safely manage and protect their database while using this “write-back” workflow? Please let me know. Thank you very much!!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Well, the situation is that GCP is not doing the authentication for you. It is expected that your code will handle any authentication. The Action framework includes a secret that is configured when you add the Action to Looker, and which you should use to authenticate that the request came from your Looker instance.

This article talks about setting up the secret when deploying the official ActionHub code, but the process is similar for a custom written Action

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Dimitri_Masincheck out the new post here. Let me know if you have any questions.

Hi, I have a need which seems similar to the one Dimitri described 2 years ago. Unfortunately the link provided by @jesse_carah is broken.

Is there any way to see Jesse’s post or should I start a new thread?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Krishna,

I’ve been able to write data from Looker to BigQuery using both Data Actions as well as the Looker Action Hub. In either case, you’ll need to push data from Looker to some middleware that will interpret the webhook from Looker and perform the necessary operations to then stream the data to BigQuery.

Luckily, Google has a great service called Google Cloud Functions that makes this really easy. Like AWS’s Lambda, Cloud Functions let you deploy code that gets executed based off of some event. With a data action, you can push JSON containing data from Looker as well as user-defined form parameters to a Cloud Function endpoint. The Cloud Function then parses the JSON, extracts the relevant values, and calls on the BigQuery SDK to stream the results to BigQuery.

Here’s a quick overview of how to use Cloud Functions to stream data from Looker to BigQuery. In this example, we’ll create a data action and cloud function that lets an end user persist an annotation to BigQuery:

Create the Data Action

In this example, we’re going to attach a data action to field, and allow end-users to mark whether or not a name is a cool name.

dimension: name {

type: string

sql: ${TABLE}.name ;;

action: {

label: "Cool Name?"

url: ""

param: {

name: "name"

value: "{{ value }}"

}

form_param: {

name: "annotation"

type: select

label: "Cool name?"

default: "No"

description: "Do you think that this name is a cool name?"

option: {

name: "No"

}

option: {

name: "Yes"

}

}

}

}

Note: We’re going to leave the url blank for now. Once we’ve spun up the cloud function we’ll paste the endpoint in.

Configure the Cloud Function

- Follow the first three steps here to select your GCP project and enable API access for Cloud Functions and BigQuery.

- Navigate to https://console.cloud.google.com/functions and ensure that you’ve selected the same project in which BigQuery resides.

- Click Create Function, and give it a name. Select a memory allocation (in my experience, you can select the minimum for this type of operation).

- Select HTTP as your trigger

- Select your preferred runtime (for this example, I will use Python 3.7, but versions of Node.js are also supported).

Creating your Cloud Function

We’re now going to write a simple Python function that writes the user selected annotation to BigQuery, and place it in main.py

import google.cloud.bigquery as bigquery

import datetime

import time

def annotation(request):

r = request.get_json() # Fetch the data action JSON

client = bigquery.Client()

dataset_id = '' # Replace with name of the BQ dataset

table_id = '' # replace with your table ID

table_ref = client.dataset(dataset_id).table(table_id)

table = client.get_table(table_ref) # API request

# request variables

name = r['data']['name']

annotation = r['form_params']['annotation']

# system variables

sys_time = int(time.time())

row_to_insert = [

(

name,

annotation,

datetime.datetime.fromtimestamp(sys_time).strftime('%Y-%m-%d %H:%M:%S')

)

]

row = client.insert_rows(table, row_to_insert) # API request to insert row

return '{"looker": {"success": true,"refresh_query": true}}' # return success response to Looker

Additional things to configure:

- The ‘Function to Execute’ is annotation

- Make sure to include a line for

google-cloud-bigquery==1.5.0inrequirements.txt - Click the ‘Trigger’ tab and copy the URL. Paste this in the action that you setup in the first step.

The End Result:

Caveats:

- Right now, this function is open to the internet, so anyone with your Cloud Function URL can write data to your BigQuery instance. Consider adding in some checks/validation that the request is coming from Looker.

- For a more comprehensive and secure approach, consider adapting this using the Action Hub framework. I can provide more detail on this if you’re interested.

Cheers!

Jesse

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Jeese,

Any code snippet to move system activity data into bigquery using cloud functions.

Thanks

Srinivasarao

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @jesse_carah

I have tried the same method that you posted. But when I’m trying to update the field, it is appending as another row and updates the name column with the old value and the annotation column with the new value.

In the text payload, the updating value is in the data and the new value is in the annotation. can you help me with which part I have made mistake in?

{'type': 'cell', 'scheduled_plan': None, 'attachment': None, 'data': {'value': '1000', 'rendered': '1000', 'name': '1000'}, 'form_params': {'annotation': '"1800"'}} dimension: annotation {

type: string

sql: ${TABLE}.annotation ;;

action: {

label: "Update the Field"

url: "https://us-central1-decisive-triode-363515.cloudfunctions.net/function-1"

param: {

name: "name"

value: "{{ value }}"

}

form_param: {

name: "annotation"

type: string

label: "Update the Field"

# default: "No"

description: "Update the name field if there is any error while initial entry"

}

}

}

dimension: name {

type: string

sql: ${TABLE}.name ;;

action: {

label: "Update the Field"

url: "https://us-central1-decisive-triode-363515.cloudfunctions.net/function-1"

param: {

name: "name"

value: "{{ value }}"

}

form_param: {

name: "annotation"

type: string

label: "Update the Field"

# default: "No"

description: "Update the name field if there is any error while initial entry"

}

}

}

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Good question -- my example uses the streaming inserts API, which is append only. The code snippet that I shared publishes an ingestion timestamp for the annotation. You can use SQL to filter down on the most recent annotation -- e.g.,: https://stackoverflow.com/questions/25269410/google-big-query-sql-get-most-recent-column-value

Hope that helps.

-

access grant

6 -

actionhub

1 -

Actions

8 -

Admin

7 -

Analytics Block

27 -

API

25 -

Authentication

2 -

bestpractice

7 -

BigQuery

69 -

blocks

11 -

Bug

60 -

cache

7 -

case

12 -

Certification

2 -

chart

1 -

cohort

5 -

connection

14 -

connection database

4 -

content access

2 -

content-validator

5 -

count

5 -

custom dimension

5 -

custom field

11 -

custom measure

13 -

customdimension

8 -

Customizing LookML

116 -

Dashboards

144 -

Data

7 -

Data Sources

3 -

data tab

1 -

Database

13 -

datagroup

5 -

date-formatting

12 -

dates

16 -

derivedtable

51 -

develop

4 -

development

7 -

dialect

2 -

dimension

46 -

done

9 -

download

5 -

downloading

1 -

drilling

28 -

dynamic

17 -

embed

5 -

Errors

16 -

etl

2 -

explore

58 -

Explores

5 -

extends

17 -

Extensions

9 -

feature-requests

6 -

filter

220 -

formatting

13 -

git

19 -

googlesheets

2 -

graph

1 -

group by

7 -

Hiring

2 -

html

19 -

ide

1 -

imported project

8 -

Integrations

1 -

internal db

2 -

javascript

2 -

join

16 -

json

7 -

label

6 -

link

17 -

links

8 -

liquid

154 -

Looker Studio Pro

1 -

looker_sdk

1 -

LookerStudio

3 -

lookml

859 -

lookml dashboard

20 -

LookML Foundations

54 -

looks

33 -

manage projects

1 -

map

14 -

map_layer

6 -

Marketplace

2 -

measure

22 -

merge

7 -

model

7 -

modeling

26 -

multiple select

2 -

mysql

3 -

nativederivedtable

9 -

ndt

6 -

Optimizing Performance

29 -

parameter

70 -

pdt

35 -

performance

11 -

periodoverperiod

16 -

persistence

2 -

pivot

3 -

postgresql

2 -

Projects

7 -

python

2 -

Query

3 -

quickstart

5 -

ReactJS

1 -

redshift

10 -

release

18 -

rendering

3 -

Reporting

2 -

schedule

5 -

schedule delivery

1 -

sdk

5 -

singlevalue

1 -

snowflake

16 -

sql

221 -

system activity

3 -

table chart

1 -

tablecalcs

53 -

tests

7 -

time

8 -

time zone

4 -

totals

7 -

user access management

3 -

user-attributes

9 -

value_format

5 -

view

24 -

Views

5 -

visualizations

166 -

watch

1 -

webhook

1 -

日本語

3

- « Previous

- Next »

Twitter

Twitter