- Looker

- Looker Forums

- Modeling

- Symmetric Aggregates

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The Problem

A long standing limitation of SQL has been the asymmetry and complexity of its aggregate computations. In Looker, aggregate computations - like SUM(), AVERAGE() and COUNT() - are used to calculate measures.

SQL lets programmers join tables in arbitrarily complex ways, but when doing this, only a few of the aggregate computations work reliably.

The aggregate functions that work, regardless of how tables are joined, are called “symmetric aggregates.” They are:

COUNT(DISTINCT)

MAX()

MIN()

The functions that need to be used very carefully, to avoid incorrect results, are called “non-symmetric aggregates.” They are:

SUM()

AVERAGE()

COUNT()

The non-symmetric aggregates only work if two conditions are met:

- The table that they are used on is in the

FROMclause of the query, and not in a join - All the joins in the query have a many-to-one or one-to-one relationship

If either of these conditions are not met SUM(), AVERAGE() and COUNT() will be computed incorrectly. To see a simple example of this problem, consider the following tables:

orders

| id | order_date | user_id | shipping_amount |

|---|---|---|---|

| 1 | 2014-01-01 | 1 | 5.00 |

| 2 | 2014-01-02 | 2 | 6.00 |

order_items

| id | order_id | sku | amount |

|---|---|---|---|

| 1 | 1 | 1 | 23.00 |

| 2 | 1 | 2 | 12.00 |

| 3 | 2 | 2 | 12.00 |

Now assume we run the following query:

SELECT

SUM(order_items.amount) AS total_amount,

SUM(orders.shipping_amount) AS total_shipping

FROM order_items

LEFT JOIN orders ON orders.id = order_items.order_id

In this case the relationship between order_items and orders is many-to-one (not one-to-many or one-to-one). Consequently, some of the results will be bad. To understand why this is the case, consider the result of the query before the SUM() is applied:

| ORDER_ITEMS | ORDERS | ||||||

|---|---|---|---|---|---|---|---|

| id | order_id | sku | amount | id | order_date | user_id | shipping_amount |

| 1 | 1 | 1 | 23.00 | 1 | 2014-01-01 | 1 | 5.00 |

| 2 | 1 | 2 | 12.00 | 1 | 2014-01-01 | 1 | 5.00 |

| 3 | 2 | 2 | 12.00 | 2 | 2014-01-02 | 2 | 6.00 |

Now it’s easier to see that, when orders.shipping_amount is summed, it will provide an incorrect result. Since order number 1 is repeated twice in the results, the shipping amount for it is added in twice. SUM(orders.shipping_amount) seems like it should work, but it doesn’t.

On the other hand, the symmetric aggregates do work reliably. If we swap out our SUM() for COUNT(DISTINCT) in our query it computes correctly:

SELECT

COUNT(DISTINCT order_items.id) as order_items_count

, COUNT(DISTINCT orders.id) as orders_count

FROM order_items

LEFT JOIN orders ON orders.id = order_items.order_id

The worst part is, there’s no easy check to determine what the join relationships are, and therefore, if they’re going to cause problems. Although there are some tricks to determine the join relationship at the current time, it’s no guarantee that this will be the join relationship in the future. The only way to know for sure is to understand the underlying data and how it is being created. Brett Sauvé wrote a great post about this that explains how to work through all of this.

The Fix

LookML operates at an abstraction level above SQL (all LookML code is eventually converted to SQL). In LookML, you declare the join relationships (one-to-many, many-to-many, and one-to-one) as well as the join type (LEFT JOIN, FULL OUTER JOIN, etc). Additionally, LookML measures (which eventually become the aggregate computations we’ve been talking about) are declared in the view they are aggregating.

LookML measures are symmetric, no matter what. It doesn’t matter what the join structure is or if the table is in the FROM clause. This simplification makes asking questions much more direct, and takes away the complexity involved with certain queries. As long as you define your joins correctly, errors in calculations are eliminated.

Video Store Example

MySQL’s sample video store database (yes, a video store, shows you how old it is) actually presents some interesting analytical challenges. They can demonstrate the power of Looker’s aggregate computation abilities.

In the example database, copies of a film are purchased and put in inventory. At some later date a customer rents a copy from inventory, which is recorded as a rental. When the rental is returned the customer makes a payment. Films have one or more categories, which are looked up in film_category, and stored in category. Similarly, films have one or more actors, which are looked up in film_actor, and stored in actor.

Visually, all the join relationships look like this:

film_category-----film-----film_actor

| | |

| | |

category inventory actor

|

|

rental

/ \

/ \

customer-----payment

In prior versions of LookML it was important to try and avoid one-to-many joins. One of the paths we might have used to join as many things together as possible, while avoiding one-to-many joins, would be to start with payment and join as follows:

payment

↖ rental

↖ customer

↖ inventory

↖ film

As just one example of the complicated considerations involved: note that we’re joining customer to payment through the rental, instead of directly, because if we did go directly, it would be a one-to-many join.

These types of things would be easy to compute when exploring from payment:

- Payments by month, week, day of week

- Top performing films

- Top customers

These things would not be so easy to compute:

- Inventory items that haven’t been rented

- Duration to pay back an inventory item once its been rented

- Revenue of rentals by category or actor

Joining the New Way

In our new model, we’ll start exploring from inventory instead of payment. The joins will look like:

inventory

↖ film

↖ film_category

↖ category

↖ film_actor

↖ actor

↖ rental

↖ customer

↖ payment

In previous versions of Looker, this would have been a bad thing to do. For example, inventory to film_category is a one-to-many relationship. Now, however, this is perfectly fine.

This is how the LookML is written:

- connection: video_store

- scoping: true

- include: "sakila.view.lookml"

- explore: inventory

joins:

- join: film

foreign_key: inventory.film_id

# Fanout!

- join: film_category

sql_on: ${film.film_id} = ${film_category.film_id}

relationship: one_to_many

- join: category

foreign_key: film_category.category_id

# Fanout!

- join: film_actor

sql_on: ${film.film_id} = ${film_actor.film_id}

relationship: one_to_many

- join: actor

foreign_key: film_actor.actor_id

- join: rental

sql_on: ${inventory.inventory_id} = ${rental.inventory_id}

relationship: one_to_many

- join: customer

foreign_key: rental.customer_id

- join: payment

sql_on: ${payment.rental_id} = ${rental.rental_id}

relationship: one_to_many

Add a Few Measures (a.k.a. Aggregate Computations)

To the payment view, we’ll add the measure:

- measure: total_amount

type: sum

value_format_name: decimal_2

sql: ${amount}

And to the inventory view, we’ll add this measure. The replacement cost isn’t an attribute of inventory, but rather an attribute of film.

- measure: total_replacement_cost

type: sum

value_format_name: decimal_2

sql: ${film.replacement_cost}

A Simple Business Overview: Computing Return on Investment

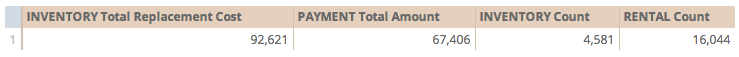

Looking at costs vs. revenue is the key to any business. Costs in this case come in the form of inventory. Revenue comes in the form of payments on rentals. Using the measures we just created, we can compute these sums. From the data below, we can see that we’ve spent about $93K on inventory, and over time, have been paid back $67K.

In raw SQL it wouldn’t be possible to compute a sum in both the inventory view and payment view at the same time. It would require two separate queries (or sub queries). This would add complexity and we wouldn’t have the ability to drill.

Build a Compound Measure: Percent Payback

We can combine the two measures we just created to see how close we are to breaking even:

- measure: percent_payback

type: number

value_format_name: decimal_2

sql: 100.0 * ${payment.total_amount} / ${total_replacement_cost}

How Long Does It Take to be Paid Back?

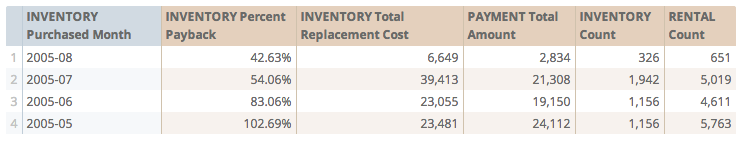

To get an idea of how payback changes over time, we can group by month, and display our new Percent Payback measure. It looks like payback is about 4 months.

Does Payback Vary by Category?

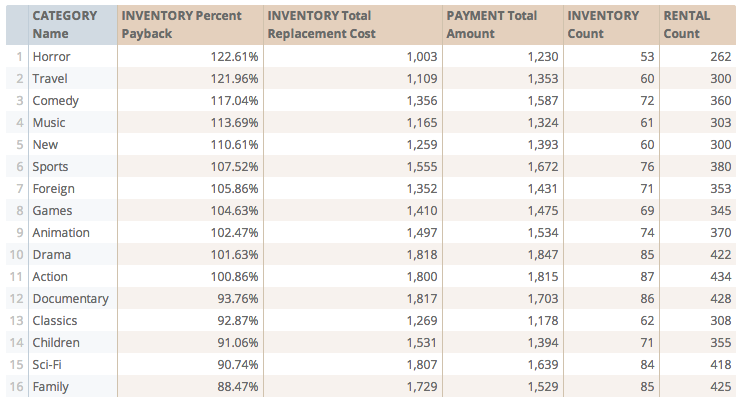

If we limit ourselves to May 2005, where we achieved payback overall, we can determine which categories do the best:

Top 10 Selling Actors and Their Genres

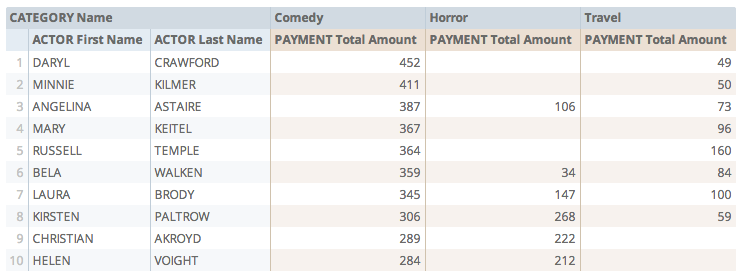

We can determine which actors returned the most money to us, and from which categories the money is coming (we’ve limited to the top 3 categories just to make the table easier to read) .

Note: It’s still important to think about what this data is telling us. Since each inventory item can have multiple categories and actors, the money from a single payment might show up more than once, for each actor and category associated with that payment. If you think about it, this makes sense. If we wanted to avoid this repeated data, we’d somehow have to split each payment amongst all the different categories and actors associated with it. We haven’t explained to Looker how it should do that.

So, even though we’ve avoided the classic SQL problem where certain types of joins create bad sums, you can still segment the data such that information is repeated. In this example, the data is still meaningful and correct in some sense. However, you wouldn’t be able to add up these numbers and arrive at the total amount of money the video store has made.

Try It at Home

The above computations would be very difficult to write in standard SQL. LookML makes the difficult easy.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

I just tried this for the first time today and nearly brought down the system!

Here’s the query it generated:

SELECT

event_group.id AS `event_group.id`,

event_group.NAME AS `event_group.name`,

(

SUM(DISTINCT (

CAST(FLOOR(COALESCE(event_group_views.views, 0) * (1000000 * 1.0

)) AS DECIMAL(65, 0))

) + (

CAST(CONV(SUBSTR(MD5(event_group_views.event_group_id), 1, 16), 16,

10) AS DECIMAL(65)) * 18446744073709551616 + CAST(CONV(SUBSTR(

MD5(event_group_views.event_group_id), 17, 16), 16, 10)

AS DECIMAL(65))

)) - SUM(DISTINCT (

CAST(CONV(SUBSTR(MD5(event_group_views.event_group_id), 1, 16), 16,

10) AS DECIMAL(65)) * 18446744073709551616 + CAST(CONV(SUBSTR(

MD5(event_group_views.event_group_id), 17, 16), 16, 10)

AS DECIMAL(65))

))

) / (1000000 * 1.0) AS `event_group_views.views`

FROM looker_scratch.LKR$5N247Q03VGF1D3IA90D_performance_facts AS performance

LEFT JOIN analytics.model_event_group AS event_group

ON performance.event_group_id = event_group.id

LEFT JOIN looker_scratch.event_group_views AS event_group_views

ON performance.event_group_id = event_group_views.event_group_id

GROUP BY 1,

2

ORDER BY (

SUM(DISTINCT (

CAST(FLOOR(COALESCE(event_group_views.views, 0) * (1000000 * 1.0

)) AS DECIMAL(65, 0))

) + (

CAST(CONV(SUBSTR(MD5(event_group_views.event_group_id), 1, 16), 16,

10) AS DECIMAL(65)) * 18446744073709551616 + CAST(CONV(SUBSTR(

MD5(event_group_views.event_group_id), 17, 16), 16, 10)

AS DECIMAL(65))

)) - SUM(DISTINCT (

CAST(CONV(SUBSTR(MD5(event_group_views.event_group_id), 1, 16), 16,

10) AS DECIMAL(65)) * 18446744073709551616 + CAST(CONV(SUBSTR(

MD5(event_group_views.event_group_id), 17, 16), 16, 10)

AS DECIMAL(65))

))

) / (1000000 * 1.0) DESC LIMIT 500

As you can imagine this made me quite unpopular with the database admin guys. I think symmetric joins are a great idea but I’m wondering if they are just too inefficient in practice. I’ve been forced to turn them off for the time being - any thoughts?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sorry you had problems. Symmetric aggregates themselves aren’t much more expensive then count distincts (on the order of 2X).

Your problem probably relates to how heavily fanned out the joins are. My guess is that joining event_group_views is causing major fanout and the query would be very expensive no matter what you computed.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It’s a table of ~1m rows so that may well be true, it’s a shame though as I think we’ll have to avoid this feature for the time being. I’m to give Redshift a trial soon to see if that helps.

I do wonder (just thinking out loud, I’m not an expert) why the need to calculate everything in one single (admittedly genius) query when presumably Looker could just build the result set by combining several select statements into a display grid. i.e. in my case query the database for event_group_views and then just put result in the last column, rather than using the magic of MD5 hashing etc.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

There are lots and lots of good new design patterns. Being able to compute sums from different entities simultaneously is just one. In this case you retain the ability to drill. It lets you easily build ratios and explore them. Above we have revenue per actor, but we could do %payback by actor without having to retool queries.

Symmetric Aggregates really reduces the number of explores in a model ( @vlad1 from Donor’s Choose explains that really nicely at a Look and Tell Event http://www.looker.com/video/look-and-tell-new-york-donorschoose). Donor’s choose went from 15 to 5.

Another benefit In the example above, you can join in attributes without fear of destroying calculations (in the above example, we can look at movie revenue by actor, something that would be very difficult to compute). Suppose you were a car company and cars had many options. You could look at your auto revenue, cost of build out, by options (and sales person). This has traditionally been a very hard problem.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Lloyd,

So, we’re quite excited about the opportunities that Symmetric Aggregates (SAs, for short) open up and have built out a bunch of new explores that rely on them. That said, there’s no question that they impose performance costs. Redshift’s distinct functions are pretty slow, and so the more we can avoid using SAs, the happier we are.

We have some questions about the logic behind when Looker decides it needs an SA and when it can just proceed with a straight aggregate function, because the behavior we’re seeing is somewhat unpredictable (at least to us) and seems, at times, inefficient.

As an example, let’s say that ORDERS is our base table and ORDER_ITEMS is left-joined to it and that they have a one-to-many relationship. As you said above, if you do the join and then do SUM(ORDERS.shipping_amount), your result will be wrong without SAs. However, the result for SUM(ORDER_ITEMS.amount) should run fine without requiring an SA.

As far as we can tell, in fact, any aggregate function that’s run exclusively on the right-hand, “many” table in a one-to-many join should work fine without requiring an SA. The fanning out that occurs is of the left-hand, “one” table, and so aggregate functions operating on that table would definitely require an SA to be correct.

Yet, at least in our setup, Looker is triggering the SAs, even when we’re exclusively calculating aggregate measures on the right-hand table. Because this imposes significant performance costs, we’d love to figure out if we’re setting something up wrong in a way that makes it impossible for Looker to determine that SAs aren’t actually needed. Or, alternatively, if Looker’s logic around when to trigger SAs can be improved so that it avoids them in this case unless they’re absolutely necessary.

Thanks

-Daniel

P.S. There’s also some weirdness around how SAs trigger based on whether a measure is of type: number or type: sum. We’ve got one measure that is of type: number and is defined as SUM(a)/SUM(b) where SAs do not trigger (even though measures of type: sum in the same query do trigger SAs). And then another measure that’s of type: number, but is defined with substitutions as ${field1}/${field2} (where both field1 and field2 are of type: sum) that do trigger SAs. So yeah, we’re confused.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Wow, Daniel, you are brilliant.

On Symmetric Aggregates

You are right, the way looker currently works is that it sees a one_to_many join and says “all aggregates are symmetric”. It just didn’t occur to me that we might be able to use normal aggregates in the leaf nodes. It’s something we’ll investigate. There may be edge cases I can’t imagine at the moment so I’m holding off on any promises.

On type: number vs. type: sum

If you declare a measure

- measure: my_sum

type: number

sql: SUM(orders.shipping_amount)

Looker doesn’t know this is a sum and can’t convert it to a symmetric aggregate.

If you declare

- measure: my_sum2

type: sum

sql: orders.shipping_amount

Looker will generate the generate SUM or as symmetric sum depending on the join structure of the query. When you use the ${my_sum2} the sql subtituted will be based on the join sturcture of the query. operator.

${…} simply substitutes the SQL for the field you are referencing.

Does this help?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Brilliant is an overstatement, but I appreciate it 😄

So yeah, the obvious case where this doesn’t work is when the leaf node stops being a leaf node and becomes a branch (because another table is one-to-many joined to it). And any many-to-many join is going to necessitate symmetric aggregates on both sides. But as long as you’re dealing with a pure one-to-many join (or joins), I haven’t been able to come up with any edge cases where you couldn’t just use plain aggregates on the rightmost table.

I’ll keep thinking on it, though, and let you know if I come up with any weirdness.

And on the type question, yup that makes sense. Hadn’t fully considered it from Looker’s perspective, but that totally makes sense as to why it would understand what’s happening in some cases but not others.

Let me know where you land on the new logic!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, I believe the new syntax for decimal is value_format_name: decimal_2

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks Yuriy, the article has been updated.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Lloyd,

I’ve encountered severe performance issues with this functionality as well.

As I understand it, Symmetric Aggregates work as such. Given two or more fact tables:

- Hash all measure-dimension groupings.

- Join all tables together on common dimension(s).

- De-duplicate the measure-dimension groupings.

- Sum the measures and group by the dimension(s).

While steps 1 and 3 may take only twice as long as a normal DISTINCT calculation (as you said), step 2 cripples the database (in my case Vertica) and does not scale.

One way of solving this problem is to employ a method called drilling across, which requires conforming your data to a Star Schema. Quick explanation, given two or more fact tables:

- Sum each measure and group by the common dimension(s) in separate subqueries.

- FULL OUTER JOIN all tables to each other on common dimensions.

One of Looker’s advantages is that it enable companies with fewer data-modeling resources to do complex analysis. However, the lack of a drill across feature has been crippling to my team. Our tables are simply too big to join fact tables to each other directly.

-

access grant

6 -

actionhub

1 -

Actions

8 -

Admin

7 -

Analytics Block

27 -

API

25 -

Authentication

2 -

bestpractice

7 -

BigQuery

69 -

blocks

11 -

Bug

60 -

cache

7 -

case

12 -

Certification

2 -

chart

1 -

cohort

5 -

connection

14 -

connection database

4 -

content access

2 -

content-validator

5 -

count

5 -

custom dimension

5 -

custom field

11 -

custom measure

13 -

customdimension

8 -

Customizing LookML

116 -

Dashboards

144 -

Data

7 -

Data Sources

3 -

data tab

1 -

Database

13 -

datagroup

5 -

date-formatting

12 -

dates

16 -

derivedtable

51 -

develop

4 -

development

7 -

dialect

2 -

dimension

46 -

done

9 -

download

5 -

downloading

1 -

drilling

28 -

dynamic

17 -

embed

5 -

Errors

16 -

etl

2 -

explore

58 -

Explores

5 -

extends

17 -

Extensions

9 -

feature-requests

6 -

filter

220 -

formatting

13 -

git

19 -

googlesheets

2 -

graph

1 -

group by

7 -

Hiring

2 -

html

19 -

ide

1 -

imported project

8 -

Integrations

1 -

internal db

2 -

javascript

2 -

join

16 -

json

7 -

label

6 -

link

17 -

links

8 -

liquid

154 -

Looker Studio Pro

1 -

looker_sdk

1 -

LookerStudio

3 -

lookml

859 -

lookml dashboard

20 -

LookML Foundations

54 -

looks

33 -

manage projects

1 -

map

14 -

map_layer

6 -

Marketplace

2 -

measure

22 -

merge

7 -

model

7 -

modeling

26 -

multiple select

2 -

mysql

3 -

nativederivedtable

9 -

ndt

6 -

Optimizing Performance

29 -

parameter

70 -

pdt

35 -

performance

11 -

periodoverperiod

16 -

persistence

2 -

pivot

3 -

postgresql

2 -

Projects

7 -

python

2 -

Query

3 -

quickstart

5 -

ReactJS

1 -

redshift

10 -

release

18 -

rendering

3 -

Reporting

2 -

schedule

5 -

schedule delivery

1 -

sdk

5 -

singlevalue

1 -

snowflake

16 -

sql

221 -

system activity

3 -

table chart

1 -

tablecalcs

53 -

tests

7 -

time

8 -

time zone

4 -

totals

7 -

user access management

3 -

user-attributes

9 -

value_format

5 -

view

24 -

Views

5 -

visualizations

166 -

watch

1 -

webhook

1 -

日本語

3

- « Previous

- Next »

Twitter

Twitter