- Looker

- Looker Forums

- Modeling

- Managing Schema Changes

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The databases you connect to with Looker are not frozen in time - as businesses grow and evolve the way we store data changes too. LookML provides an abstraction layer to help manage these changes and minimize their impact on end-user reports. In this article we’ll discuss some Looker features, common workflows, and tips & tricks to help keep the Looker model up-to-date with underlying schema changes.

We’re going to divide schema changes into two types: small and big. Small changes are the day-to-day, incremental tweaks such as add/remove/rename columns, split/combine/rename tables, etc. Big changes are when you drastically refactor the schema, such as for a new product version or a platform migration.

In both of these cases, good communication between internal teams and adherence to a change management process is the most important factor for ensuring a smooth and successful outcome. LookML code should be treated just like your own production code for services that are dependent on the backend database.

Small Schema Changes

Looker has 3 particular features every analyst should know about to help deal with schema changes and content migration.

The Substitution Operator

We see the familiar ${ } in our LookML code all the time, but let’s take a second to reflect on why that is. The substitution operator gives us indirection and encapsulation - your logic is defined only in one place, then that definition is referenced elsewhere. When you need to make a change later, there is only one place to do it, which automatically flows through to the other references. We also call this kind of code DRY for “Don’t Repeat Yourself”.

Dimensions in Looker naturally abstract your underlying table names. Take this code for example:

dimension: order_amount {

type: number

sql: ${TABLE}.order_amt

}

dimension: is_big_order {

type: yesno

sql: ${order_amount} > 1000

}

measure: total_order_amount {

type: sum

sql: ${order_amount}

}

measure: count_big_orders {

type: count

filters: {

field: is_big_order

value: yes

}

}

We could just use ${TABLE}.order_amt in “is_big_order” and “total_order_amount”. But if we later change the underlying column name to order_amount_usd, we’d then have to change it in 3 places. By using dimension references we only have to make the change once. This code also shows an example of encapsulating derived logic with “is_big_order”. Once we’ve defined the logic for big orders we can easily reference it in a filtered measure. If we later change the definition of “big”, the change only needs to be made in one place.

Using dimension references to abstract column names and derived logic makes it easy to respond to several small schema changes:

- Rename column - just update the column name in the base dimension definition

- Change column type - update the lookml field type, or apply a conversion in the base definition if needed. This can apply to timezone conversions as well.

- Delete column - remove the base dimenions definition, then the lookml validator can tell you if it was being referenced elsewhere. If you use raw sql references, you would need to search for all of them manually.

The alias Parameter

In the previous section we updated SQL references but didn’t rename or remove any LookML objects (eg dimensions, measures, views). But sometimes you will need to refactor the LookML objects, which can have the side effect of breaking users’ saved looks. The reason is because looks & dashboards are stored as a “query snapshot” in the Looker backend db - they don’t automatically know when the underlying model changes. Here is a diagram to illustrate:

When this look runs it will try to find view1.field1 in the LookML model. That field existed when the look was first saved, but could have been deleted by an analyst later. Then when the look runs the user will see a warning that view1.field1 no longer exists and will be omitted from the query.

The alias param allows us to re-route that reference to a different field instead:

dimension: new_better_field {

alias: [field1, other_view.field2]

}

The look is still saved with field1, but Looker can map that to a replacement field so the user doesn’t see an error. Note that a field can have multiple aliases, and you can alias fields from other views. This makes alias handy for the following situations:

- Deleting redundant fields and mapping the duplicates to the single source of truth

- Merging fields from one view into another, or splitting a view into sub-units (e.g. because you normalized or de-normalized the underlying database tables)

- Renaming a lookml field for code quality (you can always use the

labelparam to change just what users see)

Check out the docs for more info on the alias param.

The Content Validator - Bulk Find & Replace

Going back to the saved look diagram above, the substitution operator and alias param only effect the LookML side of the equation. The Content Validator, on the other hand, allows us to update the actual saved look in the backend db.

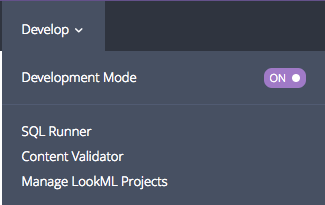

You can find the Content Validator in the Develop drop down menu:

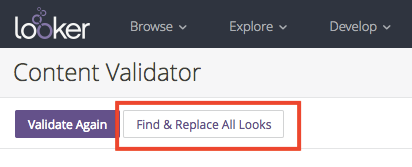

Once you click, it might take a few minutes to run. You’ll see a list of looks that are currently broken with options to fix them. However, take note of the buttons in the top left:

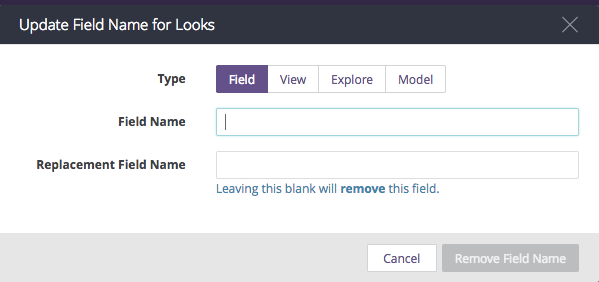

With “Find & Replace All Looks” you can bulk update all of your looks at once, without going through the individual errors. You can update either the field, view, explore, or model name:

Use this tool in the following situations:

- Moving an explore from one model to another

- Merging one explore into another

- Renaming a view or fields when

aliaswould be too burdensome - When you were previously using

aliasbut want to “push” that change out to the saved looks - When you want to remove a field and delete it from all saved looks (not redirect to a replacement)

Big Schema Changes

Sometimes schema changes are a big project and making the updates transparent to end users just isn’t possible (new product version, platform migration, or even switching data warehouse vendor). In these cases you may want to rebuild all or part of the LookML model. Here are some points to keep in mind:

- Looker “projects” just correspond to different git repos. You can start a new project if you want, but it’s not necessary.

- A Looker project can contain multiple model files, and each model file can point to a different database.

- You can always update the database connection for a model file at a later time.

So, let’s say your engineering team is developing a new schema on separate database called STAGING. You can create a new connection for this db in Looker, then create a new model file in your existing project and point it to the STAGING connection. From there you can start adding/copying views as needed to replicate the logic of your old db.

Once the new model is ready, you can use the Content Validator to update the model for all saved looks.

Or, you may be able to completely overwrite the old model with your new code-- just update the connection param for the model.

The API can also be helpful for bulk updating saved looks based on custom rules.

Feel free to reach out to Looker Support or your Customer Success representative for help planning big schema changes.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This is a very old post. Is there perhaps any new best practices or guides for this?

What tools does Looked provide to continuously check for changes in the database and the impact of that on the model? Since this is all GIT based it should be very possible to create a good experience here?

-

access grant

6 -

actionhub

1 -

Actions

8 -

Admin

7 -

Analytics Block

25 -

API

25 -

Authentication

2 -

bestpractice

7 -

BigQuery

69 -

blocks

11 -

Bug

60 -

cache

7 -

case

12 -

Certification

2 -

chart

1 -

cohort

5 -

connection

14 -

connection database

4 -

content access

2 -

content-validator

5 -

count

5 -

custom dimension

5 -

custom field

11 -

custom measure

13 -

customdimension

8 -

Customizing LookML

114 -

Dashboards

144 -

Data

7 -

Data Sources

3 -

data tab

1 -

Database

13 -

datagroup

5 -

date-formatting

12 -

dates

16 -

derivedtable

51 -

develop

4 -

development

7 -

dialect

2 -

dimension

46 -

done

9 -

download

5 -

downloading

1 -

drilling

28 -

dynamic

17 -

embed

5 -

Errors

16 -

etl

2 -

explore

58 -

Explores

5 -

extends

17 -

Extensions

9 -

feature-requests

6 -

filter

220 -

formatting

13 -

git

19 -

googlesheets

2 -

graph

1 -

group by

7 -

Hiring

2 -

html

19 -

ide

1 -

imported project

8 -

Integrations

1 -

internal db

2 -

javascript

2 -

join

16 -

json

7 -

label

6 -

link

17 -

links

8 -

liquid

154 -

Looker Studio Pro

1 -

looker_sdk

1 -

LookerStudio

3 -

lookml

859 -

lookml dashboard

20 -

LookML Foundations

52 -

looks

33 -

manage projects

1 -

map

14 -

map_layer

6 -

Marketplace

2 -

measure

22 -

merge

7 -

model

7 -

modeling

26 -

multiple select

2 -

mysql

3 -

nativederivedtable

9 -

ndt

6 -

Optimizing Performance

28 -

parameter

70 -

pdt

35 -

performance

11 -

periodoverperiod

16 -

persistence

2 -

pivot

3 -

postgresql

2 -

Projects

7 -

python

2 -

Query

3 -

quickstart

5 -

ReactJS

1 -

redshift

10 -

release

18 -

rendering

3 -

Reporting

2 -

schedule

5 -

schedule delivery

1 -

sdk

5 -

singlevalue

1 -

snowflake

16 -

sql

219 -

system activity

3 -

table chart

1 -

tablecalcs

53 -

tests

7 -

time

8 -

time zone

4 -

totals

7 -

user access management

3 -

user-attributes

9 -

value_format

5 -

view

24 -

Views

5 -

visualizations

166 -

watch

1 -

webhook

1 -

日本語

3

- « Previous

- Next »

Twitter

Twitter