- Looker

- Looker Forums

- Modeling

- Defining JSON Objects as LookML Dimensions

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

When you come across JSON objects in Postgres and Snowflake, the obvious thing to do is to use a JSON parsing function to select JSON keys as LookML dimensions. You’ll be able to create a lot of dimensions without any issues, but there are some nuances to note. This article will outline the process of defining dimensions and iron out some issues you may encounter in the process.

Step 1: Select the raw JSON dimension to see what key-value pairs are included

Say there is a dimension defined as the following:

Postgres or Snowflake:

- dimension: test_json_object

sql: ${TABLE}.test_json_object

If you select this dimension in an explore, you’ll get something like this:

{"key1":"abc","key2":"a44g6jX3","key3":"12345","key4":"2015-01-01 12:33:24"}

Now we know that our new dimensions are keys 1~4, with various data types ranging from string values to a timestamp.

Step 2: Manually define each key using a JSON parsing function or a single colon (’:’)

To define key2 in the above-described example, you would write the following:

Postgres:

- dimension: key2

sql: json_extract_path(${TABLE}.test_json_object, 'key2')

Snowflake:

- dimension: key2

sql: ${TABLE}.test_json_object:key2

This will return a string value by default, still in quotes:

"a44g6jX3"

Step 3: Cast data types for each dimension

Now that we have a baseline list of dimensions defined, we’ll explicitly cast these dimensions as appropriate data types in the SQL parameter:

Postgres:

- dimension: key2

sql: CAST(JSON_EXTRACT_PATH(${TABLE}.test_json_object, 'key2') AS string)

Snowflake:

- dimension: key2

sql: ${TABLE}.test_json_object:key2::string

This will now result in quotes being removed:

a44g6jX3

Simply declaring a LookML dimension type (string, number, etc.) may NOT remove the quotes (specifically in Snowflake). Even worse, if you have an integer dimension defined as the following (type declared, but not explicitly casted)…

Snowflake:

- dimension: key3

type: number

sql: ${TABLE}.test_json_object:key3

… you risk obtaining nulls for that dimension.

As such, explicitly casting data types at this stage is crucial.

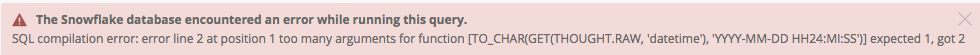

Step 4: Make sure timestamps come through correctly

This is an extension of step 3. LookML offers native timestamp conversion with dimension_group and type: time declaration:

Postgres and Snowflake:

- dimension_group: test

type: time

timeframes: []

While this might work for dates in Snowflake, you will most likely see errors cropping up when you try to select Time, Month, Year, etc.

Instead of assuming accurate timestamp handling by Looker, you should be explicitly casting your newly-defined dimensions, in this case as the following:

Postgres:

- dimension_group: new_time

type: time

timeframes: [time, date, week, month, year]

sql: CAST(JSON_EXTRACT_PATH(json_extract_path(${TABLE}.test_json_object, 'key4') AS timestamp)

Snowflake:

- dimension_group: new_time

type: time

timeframes: [time, date, week, month, year]

sql: ${TABLE}.test_json_object:key4::timestamp

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

When attempting to work with json columns in looker I can’t get past this error:

ERROR: could not identify an ordering operator for type json Hint: Use an explicit ordering operator or modify the query. Position: 145

Any clues?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hmm, I haven’t seen that error before, but it would be great if we could gather more information. Would you be able to send LookML definitions of the problem fields to shohei@looker.com?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Comment removed.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

In order to avoid ERROR: could not identify an ordering operator for type json Hint: Use an explicit ordering operator or modify the query, you should cast each column in your derived table SQL statement like the following:

SELECT

name,

details::varchar,

json_extract_path(details, 'id')::varchar as id,

json_extract_path(details, 'payout_rate')::varchar as payout_rate,

json_extract_path(details, 'state')::varchar as state,

json_extract_path(details, 'active')::varchar as active

FROM [some table here]

The error crops up from some databases not being able to group by or order by JSON objects. The solution to this is to explicitly cast any JSON-derived column. I suggest using VARCHAR by default as FLOAT may not work due to casting rules.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Dynamically Unnesting JSON

One thing to note is that these patterns can use be used within Amazon Redshift. Sometime when ETLing data from specific sources, this may leave you with nested JSON columns. This is fairly easy to deal with when the cardinally between the a row in a table and nested column is one to one, as in the situations described above.

This becomes much complex in the senario of one row in the table correlating with an array of many JSON objects. Imagine a column like so:

[{"_id":"1079","orderId":"105","type":"product"},{"_id":"1080","orderId":"105","type":"product"}]

You can see here for one column we have 2 JSON objects. There is a creative way to unnest these fields up to an N number of objects using a simple cross join.

Will start by creating a numbers table which you may already have or have seen before. This table below calculates 1-256.

WITH numbers as (SELECT

p0.n

+ p1.n*2

+ p2.n * POWER(2,2)

+ p3.n * POWER(2,3)

+ p4.n * POWER(2,4)

+ p5.n * POWER(2,5)

+ p6.n * POWER(2,6)

+ p7.n * POWER(2,7)

as num

FROM

(SELECT 0 as n UNION SELECT 1) p0,

(SELECT 0 as n UNION SELECT 1) p1,

(SELECT 0 as n UNION SELECT 1) p2,

(SELECT 0 as n UNION SELECT 1) p3,

(SELECT 0 as n UNION SELECT 1) p4,

(SELECT 0 as n UNION SELECT 1) p5,

(SELECT 0 as n UNION SELECT 1) p6,

(SELECT 0 as n UNION SELECT 1) p7)

SELECT _id as order_id

, n.num

, json_extract_path_text(json_extract_array_element_text(items, n.num::int), '_id')::varchar(100) as order_item_id

, json_extract_path_text(json_extract_array_element_text(items, n.num::int), 'orderId')::varchar(100) as order_id_1

, json_extract_path_text(json_extract_array_element_text(items, n.num::int), 'type')::varchar(100) as "type"

FROM schema.table

, numbers n

WHERE 1=1

AND num <= json_array_length(items) -1

GROUP BY 1, 2, 3, 4, 5

ORDER BY 1

This pattern will allow us to unnest up to 256 items from a JSON Array. If we need to unnest more all we need to do is add more number to our numbers table! You can also see in the where clause we use: num <= json_array_length(items) -1 this ensures that we are only joining a row as for as many objects are contained in that rows array.

We can store result of this query as a PDT so that looker is not building this table on the fly every time!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Parsing an array of JSON objects in BigQuery

Consider a column ‘Temperature Alerts’ like:

[{'description': 'more than 5.0 °C for 12 times in row', 'flash': 'R ', 'count': 12, 'label': 'T3', 'temperature': 5.0, 'in_row': true, 'above': true}]

As above we follow steps 1 through 3 with a slight variation. To avoid the error Failed to retrieve data - JSONPath must start with '$' we must specify the start path and index position to parse there after like so:

$[0].string_to_parse

dimension: temp_alerts_description{

sql: CAST(JSON_EXTRACT(${TABLE}.temperature_alerts, '$[0].description')AS STRING) ;;

group_label: "Temperature Alerts"

label: "Description"

}

dimension: temp_alerts_flash {

sql: CAST(JSON_EXTRACT(${TABLE}.temperature_alerts, '$[0].flash')AS STRING) ;;

group_label: "Temperature Alerts"

label: "Flash"

}

etc....

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

In a Snowflake environment I would strongly suggest using an error-handling conversion function with a string expression rather than a CAST from a variant value. This will fail gracefully with a NULL if your schema changes (happens all the time with JSON) rather than a SQL error.

The sample code for key3 (an integer) in the document described above would basically look like this:

sql: TRY_TO_NUMBER(${TABLE}.test_json_object:key3::string))

And for key4 (a timestamp):

sql: TRY_TO_TIMESTAMP(${TABLE}.test_json_object:key4::string))

The documentation is here: https://docs.snowflake.net/manuals/sql-reference/functions-conversion.html#label-try-conversion-func...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@shohei what do you do when your JSON object is written differently? For example.

{"custom": [ { "name": "addressIdNum", "valueNum": 12345678} }

Here my key is addressidNum and my value is 12345678.

Thanks for all your help!

Best,

Preet Rajdeo

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Preet,

This depends on the dialect you’re using, but you can usually do this by stringing together parent keys until you get to your key of choice.

Using your example, you might do this:

Snowflake: JSON_EXTRACT_PATH(JSON_EXTRACT_PATH(${field}, 'custom'),'name')

OR

BigQuery: JSON_EXTRACT(${field}, '$.custom.name')

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @bernard.kavanagh

how can we do that in snowflake?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

What works for me with Postgres:

dimension: replacement_name {

type: string

sql: (${TABLE}.replacements-> 0 ->> 'name')::text;;

}

-

access grant

6 -

actionhub

1 -

Actions

8 -

Admin

7 -

Analytics Block

27 -

API

25 -

Authentication

2 -

bestpractice

7 -

BigQuery

69 -

blocks

11 -

Bug

60 -

cache

7 -

case

12 -

Certification

2 -

chart

1 -

cohort

5 -

connection

14 -

connection database

4 -

content access

2 -

content-validator

5 -

count

5 -

custom dimension

5 -

custom field

11 -

custom measure

13 -

customdimension

8 -

Customizing LookML

116 -

Dashboards

144 -

Data

7 -

Data Sources

3 -

data tab

1 -

Database

13 -

datagroup

5 -

date-formatting

12 -

dates

16 -

derivedtable

51 -

develop

4 -

development

7 -

dialect

2 -

dimension

46 -

done

9 -

download

5 -

downloading

1 -

drilling

28 -

dynamic

17 -

embed

5 -

Errors

16 -

etl

2 -

explore

58 -

Explores

5 -

extends

17 -

Extensions

9 -

feature-requests

6 -

filter

220 -

formatting

13 -

git

19 -

googlesheets

2 -

graph

1 -

group by

7 -

Hiring

2 -

html

19 -

ide

1 -

imported project

8 -

Integrations

1 -

internal db

2 -

javascript

2 -

join

16 -

json

7 -

label

6 -

link

17 -

links

8 -

liquid

154 -

Looker Studio Pro

1 -

looker_sdk

1 -

LookerStudio

3 -

lookml

859 -

lookml dashboard

20 -

LookML Foundations

54 -

looks

33 -

manage projects

1 -

map

14 -

map_layer

6 -

Marketplace

2 -

measure

22 -

merge

7 -

model

7 -

modeling

26 -

multiple select

2 -

mysql

3 -

nativederivedtable

9 -

ndt

6 -

Optimizing Performance

29 -

parameter

70 -

pdt

35 -

performance

11 -

periodoverperiod

16 -

persistence

2 -

pivot

3 -

postgresql

2 -

Projects

7 -

python

2 -

Query

3 -

quickstart

5 -

ReactJS

1 -

redshift

10 -

release

18 -

rendering

3 -

Reporting

2 -

schedule

5 -

schedule delivery

1 -

sdk

5 -

singlevalue

1 -

snowflake

16 -

sql

221 -

system activity

3 -

table chart

1 -

tablecalcs

53 -

tests

7 -

time

8 -

time zone

4 -

totals

7 -

user access management

3 -

user-attributes

9 -

value_format

5 -

view

24 -

Views

5 -

visualizations

166 -

watch

1 -

webhook

1 -

日本語

3

- « Previous

- Next »

Twitter

Twitter