- Looker

- Looker Forums

- Modeling

- [Analytic Block] Retention Analysis

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Looker has created this block to make analyzing data easier and more efficient. This Data Block is made available on an “as is” basis, meaning there will not be updates moving forward.

About This Block

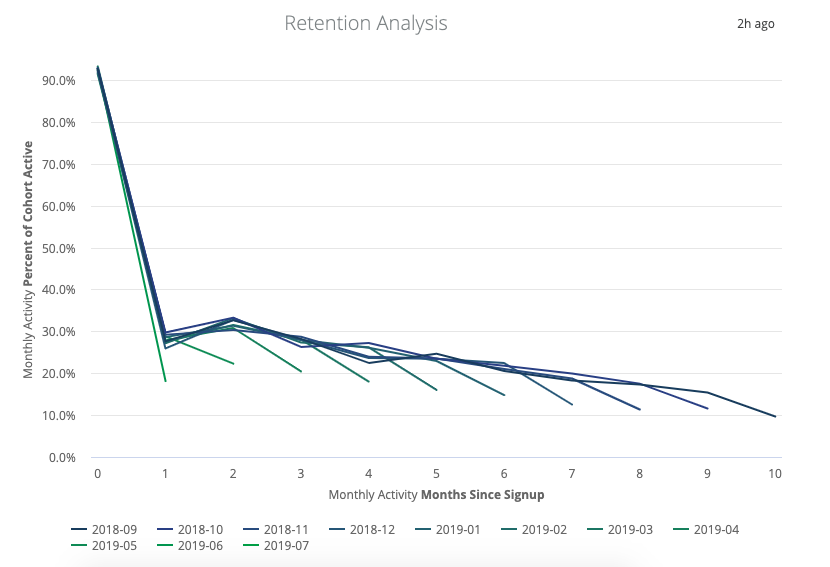

One of the most common requests we receive at Looker is for a better way to perform cohort analysis. Charts like these have become commonplace in modern data applications:

From gaming apps to retail stores, countless businesses want to understand how well they are retaining various cohorts of customers and how to effectively leverage that understanding. This means that countless businesses have also felt the pain of having to write some very complex queries.

Retention analysis works with several different data structures. The pattern below utilizes data that contains a users table and a transactions table.

Try It Yourself

It’s difficult to analyze cohort-based retention when we use traditional SQL, because we want to simultaneously see the number of users in a cohort (time-invariant) and the number of users in that cohort with activity in a given month (time-variant). So, we want to group by two different months: signup_month and activity_month. But, when we group by activity_month, we want to make sure we don’t exclude users without any activity that month. Nasty stuff.

Traditionally, the solution would be to calculate each number separately with multiple queries and then stitch the results together in Excel. But that approach isn’t flexible: It doesn’t allow us to slice and dice by all the other user attributes in the database (among other applications), and we certainly don’t get to drill in to the individual users. Seems like a waste of all that data!

Lookering for a Better Way

The trick is using derived tables to define one table with a large join. Remember, a derived table is not designed to provide an answer to an actual business question; instead, it transforms the underlying data so that, when users start exploring, they see a more meaningful result.

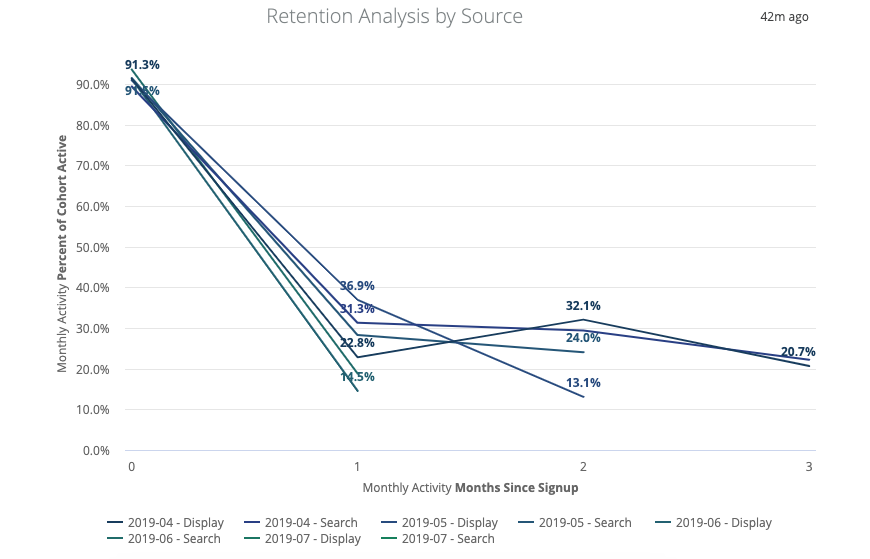

For example, we want our users to be able to explore in Looker to answer a question like this one: “For users acquired from paid search versus organic traffic, what percentage of users are still active after three months, and have those percentages been getting better or worse over time?”

To achieve this, we need the underlying data to contain a row for each user_month combination that demonstrates each user’s behavior in each month — including months with no user activity at all — and has the ability to join in all relevant user attributes. To visualize this, imagine a gaming company has users in an app.

Table 1: Users

| user_id | signup_date | acqsource |

|---|---|---|

| 1 | 1/20/15 | paid_search |

| 2 | 2/15/2015 | paid_search |

| 3 | 3/2/2015 | organic |

Table 2: App Logins

| event_id | user_id | event_date |

|---|---|---|

| 1 | 1 | 1/21/15 |

| 2 | 1 | 3/4/15 |

| 3 | 1 | 4/1/15 |

| 4 | 2 | 5/1/15 |

| 5 | 2 | 2/15/15 |

| 6 | 2 | 2/16/15 |

| 7 | 2 | 2/17/15 |

| 8 | 3 | 3/5/15 |

| 9 | 3 | 4/1/15 |

| 10 | 3 | 5/1/15 |

Now, we can use the following LookML pseudo-code to transform the data into a derived table, which gives us one row for each user-month combination and tells us about each user’s activity in that month, including months with no activity.

Starting in Looker 7.4, the

filtersparameter syntax has changed. See thefiltersparameter documentation page to view the new syntax.

view: monthly_activity {

derived_table: {

sql_trigger_value: select current_date ;;

sortkeys: ["signup_month"]

distribution: "user_id"

sql: SELECT

users.id as user_id

, date_trunc ('month', users.created_at) as signup_month

, month_list.purchase_month as purchase_month

, COALESCE(data.monthly_purchases, 0) as monthly_purchases

, COALESCE(data.total_purchase_amount, 0) as monthly_spend

, row_number() over() AS key

FROM

users

LEFT JOIN

(

SELECT

DISTINCT(date_trunc('month', order_items.created_at)) as purchase_month

FROM order_items

) as month_list

ON month_list.purchase_month >= date_trunc ('month', users.created_at) -- your dialect will vary

LEFT JOIN

(

SELECT

o.user_id

, date_trunc('month', o.created_at) as purchase_month

, COUNT(distinct o.id) AS monthly_purchases

, sum(o.sale_price) AS total_purchase_amount

FROM order_items o

GROUP BY 1,2

) as data

ON data.purchase_month = month_list.purchase_month

AND data.user_id = users.id

;;

}

dimension: user_id {

type: number

sql: ${TABLE}.user_id ;;

}

dimension_group: signup {

type: time

timeframes: [month]

sql: ${TABLE}.signup_month ;;

}

dimension_group: purchase {

type: time

timeframes: [month]

sql: ${TABLE}.purchase_month ;;

}

dimension: months_since_signup {

type: number

sql: datediff('month', ${TABLE}.signup_month, ${TABLE}.purchase_month) ;;

}

dimension: monthly_purchases {

type: number

sql: ${TABLE}.monthly_purchases ;;

}

dimension: monthly_spend {

type: number

sql: ${TABLE}.monthly_spend ;;

}

measure: total_users {

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.age, users.name, user_order_facts.lifetime_orders]

}

measure: total_active_users {

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.age, users.name, user_order_facts.lifetime_orders]

filters: {

field: monthly_purchases

value: ">0"

}

}

measure: percent_of_cohort_active {

type: number

value_format_name: percent_1

sql: 1.0 * ${total_active_users} / nullif(${total_users},0) ;;

drill_fields: [user_id, monthly_purchases, total_amount_spent]

}

measure: total_amount_spent {

type: sum

value_format_name: usd

sql: ${monthly_spend} ;;

drill_fields: [detail*]

}

measure: spend_per_user {

type: number

value_format_name: usd

sql: ${total_amount_spent} / nullif(${total_users},0) ;;

drill_fields: [user_id, monthly_purchases, total_amount_spent]

}

measure: spend_per_active_user {

type: number

value_format_name: usd

sql: ${total_amount_spent} / nullif(${total_active_users},0) ;;

drill_fields: [user_id, total_amount_spent]

}

dimension: key {

type: number

primary_key: yes

hidden: yes

sql: ${TABLE}.key ;;

}

set: detail {

fields: [user_id, signup_month, monthly_purchases, monthly_spend]

}

}

The resulting table would show this:

| user_id | first_active_month | activity_month | monthly_visits |

|---|---|---|---|

| 1 | 1/15 | 1/15 | 1 |

| 1 | 1/15 | 2/15 | 0 |

| 1 | 1/15 | 3/15 | 1 |

| 1 | 1/15 | 4/15 | 1 |

| 1 | 1/15 | 5/15 | 1 |

| 2 | 2/15 | 2/15 | 3 |

| 2 | 2/15 | 3/15 | 0 |

| 2 | 2/15 | 4/15 | 0 |

| 2 | 2/15 | 5/15 | 0 |

| 3 | 3/15 | 3/15 | 1 |

| 3 | 3/15 | 3/15 | 1 |

| 3 | 3/15 | 3/15 | 1 |

Our last step is to define this derived table view as an Explore and join our users table:

explore: user_monthly_activity {

join: users

sql_on: ${user_monthly_activity.user_id} = ${users.id}

relationship: many_to_one

}

Now, when our users select activity_month, signup_month, and percent_of_cohort_still_active, they will see the percentage of user retention by monthly activity cohort, all from a single query. Then, our users can filter or pivot by user-acquisition source, drill in to specific users, and discover trends that may impact how their marketing team chooses to spend user-acquisition dollars.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

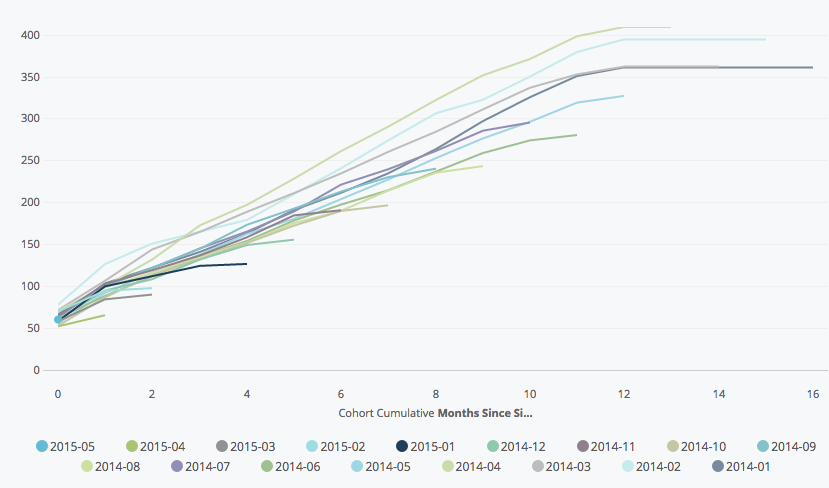

There are lots of extension to this model as well. One view that lots of folks like with cohorts is something like cumulative spend:

By simply swapping our measure from percent_active to average_spend, we have cohorted spend data. This quick PDT using inequality joins allows us to do cumulative spend:

- view: cohort_cumulative

derived_table:

sql: |

SELECT

utm1.user_id as user_id

, utm1.signup_month

, utm1.activity_month as activity_month

, SUM(utm2.monthly_orders) AS cumulative_monthly_orders

, SUM(utm2.monthly_items) as cumulative_monthly_items

, SUM(utm2.monthly_spend) as cumulative_monthly_spend

FROM ${cohort.SQL_TABLE_NAME} as utm1

LEFT JOIN ${cohort.SQL_TABLE_NAME} as utm2

ON STR_TO_DATE(CONCAT(utm2.activity_month, "-01"), "%Y-%m-%d")

<= STR_TO_DATE(CONCAT(utm1.activity_month, "-01"), "%Y-%m-%d")

AND utm1.user_id = utm2.user_id

GROUP BY 1,2,3

ORDER BY 1,2,3

indexes: [user_id]

sql_trigger_value: SELECT CURRENT_DATE()

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Is there something missing from this discussion about how to pivot on "months since signup’? I am guessing you would need another dimension that would calculate the month delta between the signup month and activity month. Or is there a way to compute this on the fly?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Oliver1 That’s exactly right - it would look something like this:

- dimension: months_since_signup

type: number

sql: datediff('month',${users.created_raw},${order_items.created_raw})

By the way - it’s worth noting that a slightly simpler version of this analysis can be done without the complex PDT pattern I described above. The pattern demonstrated here is useful when you have users who may belong to a cohort but never be considered ‘active’, which requires the independent calculations of cohort size and active user counts.

If, however, you only want to include users who were active in the cohort, the following is a bit simpler: Cohort Exploration Normalized by Cohort Size (no modeling required)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Is it possible to enrich the display of a retention cohort chart with the size of the cohort?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @ross2 - there’s not a super easy way to do that at the moment, but stay tuned for some upcoming chart improvements which will offer more flexibility for things like this 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Glad to hear it. Period-to-period changes in cohort composition is generally a major driver of changes to performance, so being able to display that information in a cohort display is quite important.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

One other question! When doing this in pure SQL, it would be prudent (for performance purposes) to perform the “fan join” after compressing on the relevant dimensions, rather than before. For example, if I’m interested in calculating retention for daily cohorts over weekly intervals by platform, the query would be much more performative if I first grouped by registration date, activity week, and platform, followed by ‘fanning’ the results by those dimensions to ensure that there is a record for each relevant combination of characteristics.

Is there a solution of this sort in Looker, or is it necessary to fan the data out at the atomic level?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yup, you’re right - I’ve actually been meaning to replace the SQL here with something a bit more efficient and clean. Performance isn’t a huge issue here, since the big nasty query can happen overnight, but still a good practice

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@savage Thanks for this writeup! I was messing around with this and ran into an error caused by a little typo that I thought I’d mention. In your final ‘Select’ statement, you call a column “monthy_events” and then refer to it later as “monthly_events”. Notice the missing “L” in the first reference. Keep up the awesome work, I ❤️ Looker.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Any update on this?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I’d also consider trimming off any time periods with incomplete measures (or at least giving users a toggle filter so they can do so). The “hooks” at the ends of the various trend lines can be misleading.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi - I recreated this but am noticing in my version the nulls show up on the graph rather than the lines ending on the last active month. I’ve tried doing a filter on nulls but that didn’t work. What can I do to make it so the lines cut off like in the example?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

There’s an option in the visualization settings that you can un-tick called “Plot Null Values”. You can see it in the screenshot here: https://docs.looker.com/exploring-data/visualizing-query-results/line-options#plot_menu_options

Turning that off will work!

-

access grant

6 -

actionhub

1 -

Actions

8 -

Admin

7 -

Analytics Block

27 -

API

25 -

Authentication

2 -

bestpractice

7 -

BigQuery

69 -

blocks

11 -

Bug

60 -

cache

7 -

case

12 -

Certification

2 -

chart

1 -

cohort

5 -

connection

14 -

connection database

4 -

content access

2 -

content-validator

5 -

count

5 -

custom dimension

5 -

custom field

11 -

custom measure

13 -

customdimension

8 -

Customizing LookML

118 -

Dashboards

144 -

Data

7 -

Data Sources

3 -

data tab

1 -

Database

13 -

datagroup

5 -

date-formatting

12 -

dates

16 -

derivedtable

51 -

develop

4 -

development

7 -

dialect

2 -

dimension

46 -

done

9 -

download

5 -

downloading

1 -

drilling

28 -

dynamic

17 -

embed

5 -

Errors

16 -

etl

2 -

explore

58 -

Explores

5 -

extends

17 -

Extensions

9 -

feature-requests

6 -

filter

220 -

formatting

13 -

git

19 -

googlesheets

2 -

graph

1 -

group by

7 -

Hiring

2 -

html

19 -

ide

1 -

imported project

8 -

Integrations

1 -

internal db

2 -

javascript

2 -

join

16 -

json

7 -

label

6 -

link

17 -

links

8 -

liquid

154 -

Looker Studio Pro

1 -

looker_sdk

1 -

LookerStudio

3 -

lookml

859 -

lookml dashboard

20 -

LookML Foundations

54 -

looks

33 -

manage projects

1 -

map

14 -

map_layer

6 -

Marketplace

2 -

measure

22 -

merge

7 -

model

7 -

modeling

26 -

multiple select

2 -

mysql

3 -

nativederivedtable

9 -

ndt

6 -

Optimizing Performance

30 -

parameter

70 -

pdt

35 -

performance

11 -

periodoverperiod

16 -

persistence

2 -

pivot

3 -

postgresql

2 -

Projects

7 -

python

2 -

Query

3 -

quickstart

5 -

ReactJS

1 -

redshift

10 -

release

18 -

rendering

3 -

Reporting

2 -

schedule

5 -

schedule delivery

1 -

sdk

5 -

singlevalue

1 -

snowflake

16 -

sql

222 -

system activity

3 -

table chart

1 -

tablecalcs

53 -

tests

7 -

time

8 -

time zone

4 -

totals

7 -

user access management

3 -

user-attributes

9 -

value_format

5 -

view

24 -

Views

5 -

visualizations

166 -

watch

1 -

webhook

1 -

日本語

3

- « Previous

- Next »

Twitter

Twitter