- Looker

- Looker Forums

- Modeling

- [Analytic Block] Daily, Weekly, Monthly Active Use...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Looker has created this block to make analyzing data easier and more efficient. This Data Block is made available on an “as is” basis, meaning there will not be updates moving forward.

About This Block

Active Users is a common metric you can use to measure how customers are engaging with your product over different timeframes. In the Daily, Weekly, Monthly Active Users Analytical Block pattern, we use a rolling timeframe to calculate a daily count of how many users interacted with the product today, in the past seven days, and in the past 30 days. This block will allow you to answer questions like:

- How is my active customer base changing over time?

- What are the characteristics of my active users?

- How do changes to my product affect my active customer base?

Ideal Data Types

This block is a great way to analyze event data, which can come from Google Analytics, Segment, Snowplow, or a custom event-tracking system (like a table that has a row for every user event).

Expected Output

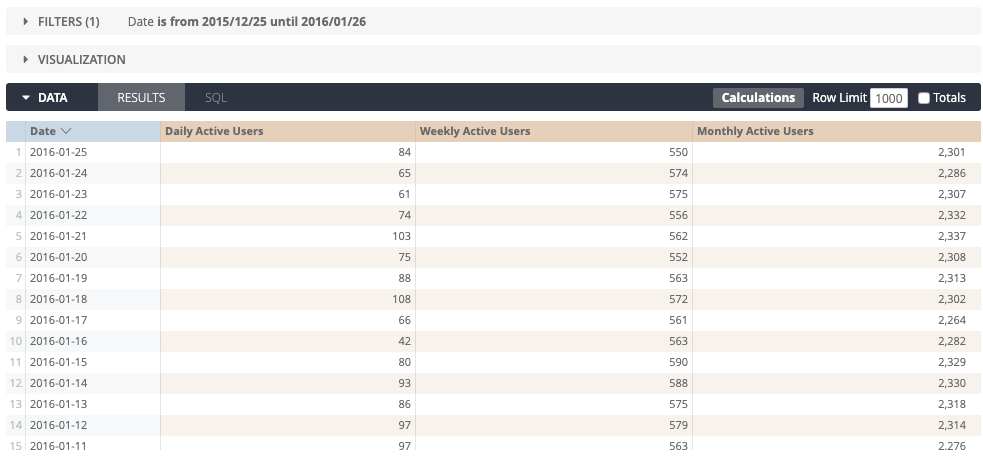

This block helps us segment customers into monthly, weekly, and daily active users — in other words, for each date, the users who have made a purchase in the last 30 days, in the last 7 days, and on that same day.

Note: This is a static data set, so we set the filters for a specific timeframe.

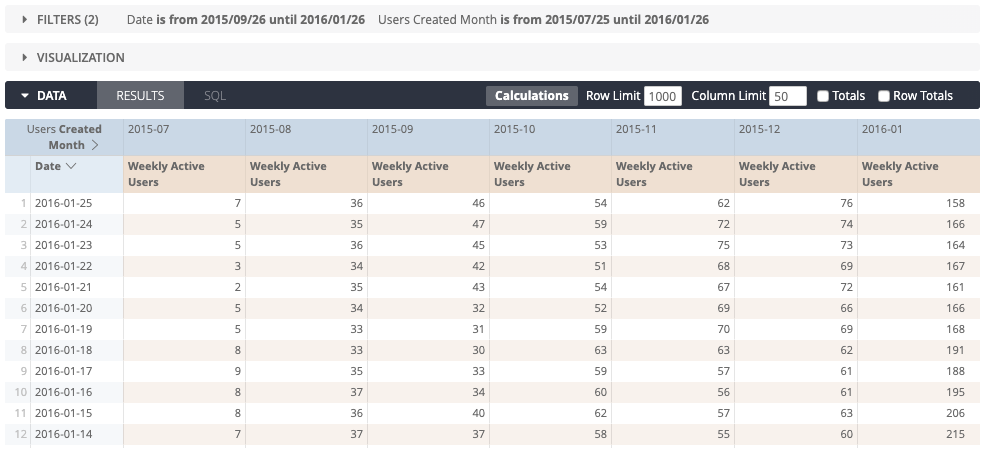

By joining a daily_use derived table (discussed in greater detail below) with our user data table, we can explore active users by any category for which we have data. For example, here are the weekly active users over the past 90 days, cohorted by their signup month:

Try It Yourself

How It’s Done

The first step is to build a date table. The construction of this table can vary based on the SQL dialect being used. In the examples below, we use Redshift, BigQuery, and Snowflake. We have Community posts for how to create this type of table in MySQL and PostgreSQL as well.

We can leverage a large table (here it’s order_items) that has more rows than we need for dates. We will use the window function row_number to generate a series of integers to build our date table.

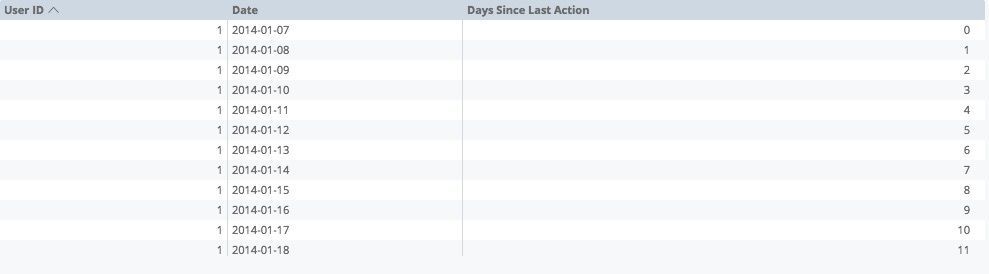

Next, we will construct a daily_use SQL-based derived table that has the user_id and date for every day a user made a purchase. In this derived table we will cross join the date table with the daily_use table, where the calendar date falls between the usage date and the usage date plus 30 days. This allows us to determine, for each calendar date, whether a user has been active up to 30 days prior. To make this table, we use the user_id, date, and how many days it’s been since the user’s last activity, which will look like this in an Explore:

Each new calendar date increments the days since last action. In the example above, we see that user 1 was active on January 7, but was not active any day after. This daily_use table is persisted in the code below to increase query performance.

Finally, we add dimensions and measures to the daily_use derived table view (called active_users) that will allow us to count and drill in on monthly, weekly, and daily active users.

Example Code

Starting in Looker 7.4, the

filtersparameter syntax has changed. See thefiltersparameter documentation page to view the new syntax.

Redshift:

explore: active_users {

join: users {

type: left_outer

sql_on: ${active_users.user_id} = ${users.id} ;;

relationship: many_to_one

}

}

view: dates {

derived_table: {

distribution_style: all

sortkeys: ["date"]

sql_trigger_value: GETDATE() ;;

sql: -- ## 1) Create a Date table with a row for each date.

SELECT '2001-01-01'::DATE + d AS date

FROM

(SELECT ROW_NUMBER() OVER(ORDER BY id) -1 AS d FROM orders ORDER BY id LIMIT 20000) AS d

;;

}

}

view: active_users {

derived_table: {

sql_trigger_value: GETDATE();;

distribution: "user_id"

sortkeys: ["date"]

sql: WITH daily_use AS (

-- ## 2 ) Create a table of days and activity by user_id

SELECT

user_id

, DATE_TRUNC('day', created_at) as activity_date

FROM orders

GROUP BY 1, 2

)

-- ## 3) Cross join activity and dates to build a row for each user/date combo with

-- days since last activity

SELECT

daily_use.user_id

, wd.date as date

, MIN(wd.date::date - daily_use.activity_date::date) as days_since_last_action

FROM ${dates.SQL_TABLE_NAME} AS wd

LEFT JOIN daily_use

ON wd.date >= daily_use.activity_date

AND wd.date < daily_use.activity_date + interval '30 day'

GROUP BY 1,2

;;

}

dimension: date {

type: date

sql: ${TABLE}.date ;;

}

dimension: user_id {

type: number

sql: ${TABLE}.user_id ;;

}

dimension: days_since_last_action {

type: number

sql: ${TABLE}.days_since_last_action ;;

value_format_name: decimal_0

}

dimension: active_this_day {

type: yesno

sql: ${days_since_last_action} < 1 ;;

}

dimension: active_last_7_days {

type: yesno

sql: ${days_since_last_action} < 7 ;;

}

measure: user_count_active_30_days {

label: "Monthly Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

}

measure: user_count_active_this_day {

label: "Daily Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_this_day

value: "yes"

}

}

measure: user_count_active_7_days {

label: "Weekly Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_last_7_days

value: "yes"

}

}

}

BigQuery:

view: dates {

derived_table: {

sql_trigger_value: SELECT CURRENT_DATE() ;;

sql:

SELECT cast(date as date) as date

FROM UNNEST(GENERATE_DATE_ARRAY(DATE_SUB(CURRENT_DATE, INTERVAL 5 YEAR), CURRENT_DATE)) date

;;

}

}

view: active_users {

derived_table: {

sql_trigger_value: SELECT CURRENT_DATE();;

sql: WITH daily_use AS (

SELECT

user_id as user_id

, cast(TIMESTAMP_TRUNC(transactiondate,day) as date) as activity_date

FROM users

GROUP BY 1, 2

)

SELECT

daily_use.user_id

, wd.date as date

, MIN( DATE_DIFF(wd.date, daily_use.activity_date, day) ) as days_since_last_action

FROM ${dates.SQL_TABLE_NAME} AS wd

CROSS JOIN daily_use

WHERE wd.date BETWEEN daily_use.activity_date AND DATE_ADD(daily_use.activity_date, INTERVAL 30 DAY)

GROUP BY 1,2

;;

}

dimension_group: date {

type: time

timeframes: [date,month,quarter,quarter_of_year,year,raw]

sql: CAST( ${TABLE}.date AS TIMESTAMP);;

}

dimension: user_id {

type: number

sql: ${TABLE}.user_id ;;

}

dimension: days_since_last_action {

type: number

sql: ${TABLE}.days_since_last_action ;;

value_format_name: decimal_0

}

dimension: active_this_day {

type: yesno

sql: ${days_since_last_action} < 1 ;;

}

dimension: active_last_7_days {

type: yesno

sql: ${days_since_last_action} < 7 ;;

}

measure: user_count_active_30_days {

label: "Monthly Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

}

measure: user_count_active_this_day {

label: "Daily Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_this_day

value: "yes"

}

}

measure: user_count_active_7_days {

label: "Weekly Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_last_7_days

value: "yes"

}

}

}

Snowflake:

view: event_active_users_pdt {

derived_table: {

sql:

WITH dau AS (

SELECT TO_DATE(CREATED_AT) as event_date, COUNT(DISTINCT USER_ID) AS dau

FROM SCHEMA.EVENTS

WHERE -- <.. filter criteria...>

GROUP BY event_date

)

SELECT event_date, dau,

(SELECT COUNT(DISTINCT USER_ID)

FROM SCHEMA.EVENTS

WHERE -- <... filter criteria...>

AND TO_DATE(CREATED_AT) BETWEEN DATEADD(DAY, -29, dau.event_date) AND dau.event_date

) AS mau,

(SELECT COUNT(DISTINCT USER_ID)

FROM SCHEMA.EVENTS

WHERE -- <... filter criteria...>

AND TO_DATE(CREATED_AT) BETWEEN DATEADD(DAY, -7, dau.event_date) AND dau.event_date

) AS wau

FROM dau

;;

datagroup_trigger: events_datagroup

}

dimension: event_active_users_pk {

type: string

primary_key: yes

hidden: yes

sql: TO_VARCHAR(${event_date}, 'YYYYMMDD');;

}

dimension_group: event {

type: time

timeframes: [

raw,

date,

day_of_week,

day_of_month,

day_of_year,

week,

week_of_year,

month,

quarter,

year

]

sql: ${TABLE}."EVENT_DATE" ;;

}

dimension: dau {

label: "DAU"

type: number

sql: ${TABLE}."DAU" ;;

}

dimension: mau {

label: "MAU"

type: number

sql: ${TABLE}."MAU" ;;

}

dimension: wau {

label: "WAU"

type: number

sql: ${TABLE}."WAU" ;;

}

dimension: dau_mau {

label: "DAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${dau} / nullif(${mau}, 0)) ;;

}

dimension: wau_mau {

label: "WAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${wau} / nullif(${mau}, 0)) ;;

}

dimension: dau_wau {

label: "DAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${dau} / nullif(${wau}, 0)) ;;

}

measure: count {

label: "Number of Records"

type: count

drill_fields: [detail*]

}

measure: average_dau_mau {

label: "Average DAU/MAU"

type: average

sql: ${dau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: average_wau_mau {

label: "Average WAU/MAU"

type: average

sql: ${wau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: average_dau_wau {

label: "Average DAU/WAU"

type: average

sql: ${dau_wau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: min_dau_mau {

label: "Min DAU/MAU"

type: min

sql: ${dau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: min_wau_mau {

label: "Min WAU/MAU"

type: min

sql: ${wau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: min_dau_wau {

label: "Min DAU/WAU"

type: min

sql: ${dau_wau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: max_dau_mau {

label: "Max DAU/MAU"

type: max

sql: ${dau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: max_wau_mau {

label: "Max WAU/MAU"

type: max

sql: ${wau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: max_dau_wau {

label: "Max DAU/WAU"

type: max

sql: ${dau_wau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

set: detail {

fields: [event_date, dau, mau, wau]

}

- Labels:

-

watch

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you for an excellent post here by @jakeyoos!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

If you need it for BigQuery:

view: dates {

derived_table: {

sql_trigger_value: SELECT CURRENT_DATE() ;;

sql:

SELECT cast(date as date) as date

FROM UNNEST(GENERATE_DATE_ARRAY(DATE_SUB(CURRENT_DATE, INTERVAL 5 YEAR), CURRENT_DATE)) date

;;

}

}

view: active_users {

derived_table: {

sql_trigger_value: SELECT CURRENT_DATE();;

sql: WITH daily_use AS (

SELECT

user_id as user_id

, cast(TIMESTAMP_TRUNC(transactiondate,day) as date) as activity_date

FROM users

GROUP BY 1, 2

)

SELECT

daily_use.user_id

, wd.date as date

, MIN( DATE_DIFF(wd.date, daily_use.activity_date, day) ) as days_since_last_action

FROM ${dates.SQL_TABLE_NAME} AS wd

CROSS JOIN daily_use

WHERE wd.date BETWEEN daily_use.activity_date AND DATE_ADD(daily_use.activity_date, INTERVAL 30 DAY)

GROUP BY 1,2

;;

}

dimension_group: date {

type: time

timeframes: [date,month,quarter,quarter_of_year,year,raw]

sql: CAST( ${TABLE}.date AS TIMESTAMP);;

}

dimension: user_id {

type: number

sql: ${TABLE}.user_id ;;

}

dimension: days_since_last_action {

type: number

sql: ${TABLE}.days_since_last_action ;;

value_format_name: decimal_0

}

dimension: active_this_day {

type: yesno

sql: ${days_since_last_action} < 1 ;;

}

dimension: active_last_7_days {

type: yesno

sql: ${days_since_last_action} < 7 ;;

}

measure: user_count_active_30_days {

label: "Monthly Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

}

measure: user_count_active_this_day {

label: "Daily Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_this_day

value: "yes"

}

}

measure: user_count_active_7_days {

label: "Weekly Active Users"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_last_7_days

value: "yes"

}

}

}

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Here is a Snowflake View for DAU, WAU, MAU.

view: event_active_users_pdt {

derived_table: {

sql:

WITH dau AS (

SELECT TO_DATE(CREATED_AT) as event_date, COUNT(DISTINCT USER_ID) AS dau

FROM SCHEMA.EVENTS

WHERE -- <.. filter criteria...>

GROUP BY event_date

)

SELECT event_date, dau,

(SELECT COUNT(DISTINCT USER_ID)

FROM SCHEMA.EVENTS

WHERE -- <... filter criteria...>

AND TO_DATE(CREATED_AT) BETWEEN DATEADD(DAY, -29, dau.event_date) AND dau.event_date

) AS mau,

(SELECT COUNT(DISTINCT USER_ID)

FROM SCHEMA.EVENTS

WHERE -- <... filter criteria...>

AND TO_DATE(CREATED_AT) BETWEEN DATEADD(DAY, -7, dau.event_date) AND dau.event_date

) AS wau

FROM dau

;;

datagroup_trigger: events_datagroup

}

dimension: event_active_users_pk {

type: string

primary_key: yes

hidden: yes

sql: TO_VARCHAR(${event_date}, 'YYYYMMDD');;

}

dimension_group: event {

type: time

timeframes: [

raw,

date,

day_of_week,

day_of_month,

day_of_year,

week,

week_of_year,

month,

quarter,

year

]

sql: ${TABLE}."EVENT_DATE" ;;

}

dimension: dau {

label: "DAU"

type: number

sql: ${TABLE}."DAU" ;;

}

dimension: mau {

label: "MAU"

type: number

sql: ${TABLE}."MAU" ;;

}

dimension: wau {

label: "WAU"

type: number

sql: ${TABLE}."WAU" ;;

}

dimension: dau_mau {

label: "DAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${dau} / nullif(${mau}, 0)) ;;

}

dimension: wau_mau {

label: "WAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${wau} / nullif(${mau}, 0)) ;;

}

dimension: dau_wau {

label: "DAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${dau} / nullif(${wau}, 0)) ;;

}

measure: count {

label: "Number of Records"

type: count

drill_fields: [detail*]

}

measure: average_dau_mau {

label: "Average DAU/MAU"

type: average

sql: ${dau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: average_wau_mau {

label: "Average WAU/MAU"

type: average

sql: ${wau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: average_dau_wau {

label: "Average DAU/WAU"

type: average

sql: ${dau_wau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: min_dau_mau {

label: "Min DAU/MAU"

type: min

sql: ${dau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: min_wau_mau {

label: "Min WAU/MAU"

type: min

sql: ${wau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: min_dau_wau {

label: "Min DAU/WAU"

type: min

sql: ${dau_wau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: max_dau_mau {

label: "Max DAU/MAU"

type: max

sql: ${dau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: max_wau_mau {

label: "Max WAU/MAU"

type: max

sql: ${wau_mau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

measure: max_dau_wau {

label: "Max DAU/WAU"

type: max

sql: ${dau_wau} ;;

value_format_name: decimal_2

drill_fields: [detail*]

}

set: detail {

fields: [event_date, dau, mau, wau]

}

}

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks @JeffH1 for the Snowflake implementation. Could you please assist me with a purely Oracle SQL way of accomplishing the same. We use Oracle SQL at work and if I can crack this logic, it will make my work so much easier.

It may not even be a full implementation; even good pointers in the right direction would be a great help. In fact, if you have ever come across a purely Oracle SQL implementation, a link would be helpful. I have scoured StackOverflow for months and I haven’t found the right logic.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

If this could be done in Postgres that would be great!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

cleaned up snowflake version

view: active_users {

derived_table: {

sql: WITH dau AS (

SELECT TO_DATE("EVENT_TIME" ) as event_date,

COUNT(DISTINCT USER_ID) AS dau

FROM "SIMPLE_PROD"."EVENTS"

GROUP BY event_date

)

SELECT event_date, dau,

(SELECT COUNT(distinct USER_ID)

FROM "SIMPLE_PROD"."EVENTS"

WHERE

TO_DATE("EVENT_TIME") BETWEEN DATEADD(DAY, -29, dau.event_date) AND dau.event_date

) AS mau,

(SELECT COUNT(DISTINCT USER_ID)

FROM "SIMPLE_PROD"."EVENTS"

WHERE

TO_DATE("EVENT_TIME") BETWEEN DATEADD(DAY, -7, dau.event_date) AND dau.event_date

) AS wau

FROM dau;;

}

dimension: event_active_users_pk {

type: string

primary_key: yes

hidden: yes

sql: TO_VARCHAR(${event_date}, 'YYYYMMDD');;

}

dimension_group: event {

type: time

timeframes: [

raw,

date,

day_of_week,

day_of_month,

day_of_year,

week,

week_of_year,

month,

quarter,

year]

sql: ${TABLE}."EVENT_DATE" ;;

}

dimension: dau {

label: "DAU"

type: number

sql: ${TABLE}."DAU" ;;

}

dimension: mau {

label: "MAU"

type: number

sql: ${TABLE}."MAU" ;;

}

dimension: wau {

label: "WAU"

type: number

sql: ${TABLE}."WAU" ;;

}

dimension: dau_mau {

label: "DAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${dau} / nullif(${mau}, 0)) ;;

}

dimension: wau_mau {

label: "WAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${wau} / nullif(${mau}, 0)) ;;

}

dimension: dau_wau {

label: "DAU/MAU"

type: number

value_format_name: decimal_2

sql: 1.0 * (${dau} / nullif(${wau}, 0)) ;;

}

measure: count {

label: "Number of Records"

type: count

}

measure: average_dau_mau {

label: "Average DAU/MAU"

type: average

sql: ${dau_mau} ;;

value_format_name: decimal_2

}

measure: average_wau_mau {

label: "Average WAU/MAU"

type: average

sql: ${wau_mau} ;;

value_format_name: decimal_2

}

measure: average_dau_wau {

label: "Average DAU/WAU"

type: average

sql: ${dau_wau} ;;

value_format_name: decimal_2

}

measure: min_dau_mau {

label: "Min DAU/MAU"

type: min

sql: ${dau_mau} ;;

value_format_name: decimal_2

}

measure: min_wau_mau {

label: "Min WAU/MAU"

type: min

sql: ${wau_mau} ;;

value_format_name: decimal_2

}

measure: min_dau_wau {

label: "Min DAU/WAU"

type: min

sql: ${dau_wau} ;;

value_format_name: decimal_2

}

measure: max_dau_mau {

label: "Max DAU/MAU"

type: max

sql: ${dau_mau} ;;

value_format_name: decimal_2

}

measure: max_wau_mau {

label: "Max WAU/MAU"

type: max

sql: ${wau_mau} ;;

value_format_name: decimal_2

}

measure: max_dau_wau {

label: "Max DAU/WAU"

type: max

sql: ${dau_wau} ;;

value_format_name: decimal_2

}

drill_fields: [event_date, dau, mau, wau]

}- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Jeff_B in the snowflakes implementation , what is “Filter Criteria” ?? Also I tried the Snowflakes implementation, but the numbers are not matching with GA

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Completed this for PostGreSql and also included a Quarterly Active Users.

Hope this helps someone, like all these folks above helped me.

PS: I added a limit of 730 days, as I found this query took a long time to run compared to the other method. Also corrected the WAU, I think it should be ‘-6 day’ and not ‘-7 day’ as shown in the example.

view: active_users {

derived_table: {

sql_trigger_value: SELECT CURRENT_DATE ;;

indexes: ["event_date"]

sql: WITH dau AS

(SELECT (DATE(table."event_time")) AS event_date,

COUNT (distinct table."user_id") as dau

FROM datalake.table AS table

WHERE (DATE(table."event_time")) is not null

GROUP BY 1

ORDER BY 1 desc

LIMIT 730)

SELECT dau.event_date, dau, (SELECT COUNT (distinct table."user_id")

FROM datalake.table AS table

WHERE DATE (table."event_time")

BETWEEN (((dau.event_date) + INTERVAL '-6 day')::TIMESTAMP::DATE)

AND dau.event_date) as wau,

(SELECT COUNT (distinct table."user_id")

FROM datalake.table AS table

WHERE DATE (table."event_time")

BETWEEN (((dau.event_date) + INTERVAL '-29 day')::TIMESTAMP::DATE)

AND dau.event_date) as mau,

(SELECT COUNT (distinct table."user_id")

FROM datalake.table AS table

WHERE DATE (table."event_time")

BETWEEN (((dau.event_date) + INTERVAL '-89 day')::TIMESTAMP::DATE)

AND dau.event_date) as qau

FROM dau

;;

}- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This was the more performant method for PostGreSQL, where you cross join your active user table to a derived date table. I also checked it against the other method and my results matched one-to-one.

######### 1. DERIVED TABLE #########

view: dates {

derived_table: {

indexes: ["date"]

sql_trigger_value: SELECT CURRENT_DATE ;;

sql: SELECT generate_series('2020-01-01'::timestamp::date, current_date, '1 day')::timestamp::date as date

;;

}

}

view: active_users {

derived_table: {

sql_trigger_value: SELECT CURRENT_DATE ;;

indexes: ["date"]

sql: WITH daily_use AS (

SELECT table."user_id" AS user_id,

(table."event_time")::TIMESTAMP::DATE as activity_date

FROM ddatalake.table AS table

WHERE (DATE(table."event_time")) is not null

GROUP BY 1, 2

ORDER BY 2 DESC)

SELECT daily_use.user_id

, wd.date as date

, MIN( (wd.date::date - daily_use.activity_date::date) ) as days_since_last_action

FROM ${dates.SQL_TABLE_NAME} AS wd

CROSS JOIN daily_use

WHERE wd.date BETWEEN daily_use.activity_date AND (((daily_use.activity_date) + INTERVAL '89 day')::TIMESTAMP::DATE)

GROUP BY 1,2

;;

}

######### 2. CORE DIMENSIONS #########

dimension_group: date {

type: time

timeframes: [date,month,quarter,quarter_of_year,year,raw]

sql: CAST( ${TABLE}.date AS TIMESTAMP);;

}

dimension: user_id {

type: number

sql: ${TABLE}.user_id ;;

}

dimension: days_since_last_action {

type: number

sql: ${TABLE}.days_since_last_action ;;

value_format_name: decimal_0

}

######## 3. CUSTOM DIMENSIONS ########

dimension: active_this_day {

type: yesno

sql: ${days_since_last_action} < 1 ;;

}

dimension: active_last_7_days {

type: yesno

sql: ${days_since_last_action} < 7 ;;

}

dimension: active_last_30_days {

type: yesno

sql: ${days_since_last_action} < 30 ;;

}

############ 4a. MEASURES ############

measure: user_count_active_this_day {

label: "Daily Active Users"

group_label: "DAU, WAU, MAU & QAU Measures"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_this_day

value: "yes"

}

}

measure: user_count_active_7_days {

label: "Weekly Active Users"

group_label: "DAU, WAU, MAU & QAU Measures"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_last_7_days

value: "yes"

}

}

measure: user_count_active_30_days {

label: "Monthly Active Users"

group_label: "DAU, WAU, MAU & QAU Measures"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_last_30_days

value: "yes"

}

}

measure: user_count_active_90_days {

label: "Quarterly Active Users"

group_label: "DAU, WAU, MAU & QAU Measures"

type: count_distinct

sql: ${user_id} ;;

drill_fields: [users.id, users.name]

}

############ 4b. MEASURES ###########

measure: user_activness {

label: "Count of User Activity"

type: count # Count the users multiple times to get activeness level

# sql: ${user_id} ;;

drill_fields: [users.id, users.name]

filters: {

field: active_this_day

value: "yes"

}

}

}-

access grant

6 -

actionhub

1 -

Actions

8 -

Admin

7 -

Analytics Block

27 -

API

25 -

Authentication

2 -

bestpractice

7 -

BigQuery

69 -

blocks

11 -

Bug

60 -

cache

7 -

case

12 -

Certification

2 -

chart

1 -

cohort

5 -

connection

14 -

connection database

4 -

content access

2 -

content-validator

5 -

count

5 -

custom dimension

5 -

custom field

11 -

custom measure

13 -

customdimension

8 -

Customizing LookML

116 -

Dashboards

144 -

Data

7 -

Data Sources

3 -

data tab

1 -

Database

13 -

datagroup

5 -

date-formatting

12 -

dates

16 -

derivedtable

51 -

develop

4 -

development

7 -

dialect

2 -

dimension

46 -

done

9 -

download

5 -

downloading

1 -

drilling

28 -

dynamic

17 -

embed

5 -

Errors

16 -

etl

2 -

explore

58 -

Explores

5 -

extends

17 -

Extensions

9 -

feature-requests

6 -

filter

220 -

formatting

13 -

git

19 -

googlesheets

2 -

graph

1 -

group by

7 -

Hiring

2 -

html

19 -

ide

1 -

imported project

8 -

Integrations

1 -

internal db

2 -

javascript

2 -

join

16 -

json

7 -

label

6 -

link

17 -

links

8 -

liquid

154 -

Looker Studio Pro

1 -

looker_sdk

1 -

LookerStudio

3 -

lookml

859 -

lookml dashboard

20 -

LookML Foundations

54 -

looks

33 -

manage projects

1 -

map

14 -

map_layer

6 -

Marketplace

2 -

measure

22 -

merge

7 -

model

7 -

modeling

26 -

multiple select

2 -

mysql

3 -

nativederivedtable

9 -

ndt

6 -

Optimizing Performance

29 -

parameter

70 -

pdt

35 -

performance

11 -

periodoverperiod

16 -

persistence

2 -

pivot

3 -

postgresql

2 -

Projects

7 -

python

2 -

Query

3 -

quickstart

5 -

ReactJS

1 -

redshift

10 -

release

18 -

rendering

3 -

Reporting

2 -

schedule

5 -

schedule delivery

1 -

sdk

5 -

singlevalue

1 -

snowflake

16 -

sql

221 -

system activity

3 -

table chart

1 -

tablecalcs

53 -

tests

7 -

time

8 -

time zone

4 -

totals

7 -

user access management

3 -

user-attributes

9 -

value_format

5 -

view

24 -

Views

5 -

visualizations

166 -

watch

1 -

webhook

1 -

日本語

3

- « Previous

- Next »

Twitter

Twitter