- Looker

- Looker Forums

- Developing Applications

- Write the result of a Looker query to BigQuery wit...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

日本語版もご覧になります:Cloud Functionsを使用してLookerで実行したクエリの結果をBigQueryにロードする

Updated: August 2022

Hi all,

I am sharing the code to send a Looker query to BigQuery with Cloud Functions.

- Static solution (using Looker Python SDK, BigQuery API, and Cloud Functions): There is no option in Looker UI, and the query id or look id is hardcoded into Cloud Functions. Use this option to only allow certain queries to be sent to BigQuery (i.e.: you want to send data from System Activity to BigQuery as a workaround for ETL). Link to code example

- Dynamic solution (using Looker Action API, BigQuery API, and Cloud Functions): There is an option for Looker users to select “BigQuery” as a destination for their queries in the UI (same menu with “Schedule” or “Send). Use this option to allow users to send any queries to BigQuery using the Looker UI. Link to video and code example

Potential use cases

- Workaround for System Activity ETL: Get data from Looker’s System Activity and write to BigQuery directly. These BigQuery tables can then be registered as a connection in Looker for additional LookML data modeling. Currently, Looker's System Activity stores a maximum of 100k rows, or 90 days of historical query and event data

- Transfer data from a different database to BigQuery

Considerations

- Cloud Function is easy to set up, and suitable for light-weighted, on-the-fly tasks. For heavy workloads, consider using Looker's native actions (sending to Google Cloud Storage, S3) or additional ETL/ELT tools (such as GCP's Dataflow)

- Consider using Elite System Activity for more flexibility on System Activity

Let me know if you have any questions and feedback

- Labels:

-

API

-

BigQuery

-

etl

-

sdk

-

system activity

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Lan,

This is great stuff!

I have a question: how do we pass the *.ini file that contains client and secret client id into cloud functions.

I see the code “looker_sdk.init40()” but we are not passing the ini file here. Please help me understand this.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

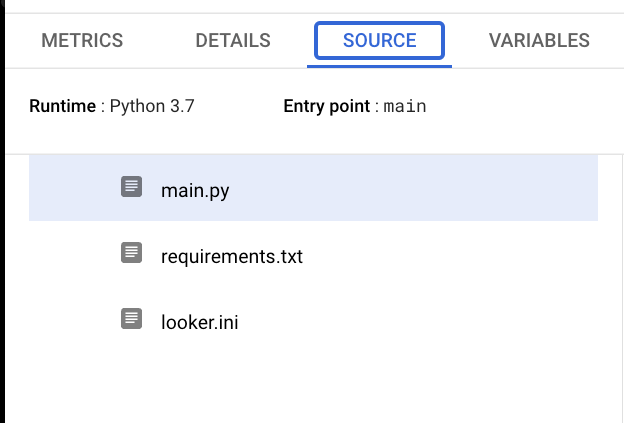

Hi @Hari-P, from the IDE, I made a new file called “looker.ini”. Inside that looker.ini file, I declare the following variables

api_version=4.0

base_url=foo

client_id=foo

client_secret=foo

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Lan,

Before running the cloud function , we have to create table in bigquery with matching column names of looker cloums,

How to we know the looker column names.

In given example (main.py) the code as below

Def get_data_from_looker (query_id = 1)

How this interct with system activity data and get the json data.

My case : If I want to get the total system activity data into bigquery, is this code work well with out any changes.

In Looker explore , we have lot of system activity explores like Content Usage,

DB Connection,

Dashboard,

Event,

History,

Look,

Merge Query,

Role,

Sql Query,

User,

Schedule Plan,

PDT Builds,

Field Usage

How can I get all these data into Bigquery.

If I want to get SQL Query explore data , what changes needs to be done to this given main.py.

Thank you

Srinivasarao

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Srinivasarao,

def main(request):

get_data_from_looker()

write_to_file()

load_to_bq()

return("Successfully loaded data from Looker to BigQuery")

get_data_from_looker(query_id=1): # I hardcoded the query id here since this is a prototype. What I did is that I run a query in the UI and go to system activity to grab the query id. In your case, I think you could manually select all fields in an explore, and run that query once, then go to system activity, and get the query id (query id is unchanged in Looker if there is no change to the query itself). This step would probably require manual work.

def load_to_bq(): # I know the fields in my query id, so I prepared the table with the exact column name in advanced. In your case, you could:

1. Use the lookml model explore endpoint to grab all of the column names from an explore in system activity

2. Use this function from BigQuery client to automatically create table with column names

Note: System Activity only runs 1 query at time, so you probably want to space out, and not run all queries at once.

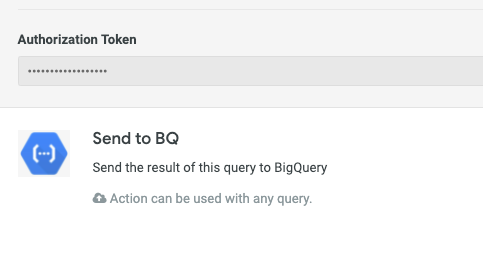

I am also developing a custom action hub to send a query result to BigQuery directly from Looker UI, and hopefully I can share soon.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @lantrann,

Thanks for this contribution! I am doing something very similar for a project I’m working on with my team. However, we got stuck for an authentication problem. I described my problem in more details here.

Essentially, it seems that the Cloud Function needs to allow unauthenticated access in order to be triggered from Looker. Is this true? It strikes me as strange, especially if you have to put this in production.

I didn’t write this in the linked post, but when I try to add my custom action hub I receive the following error: “Your client does not have permission to get URL <code>/list_actions/</code> from this server. 403 Forbidden”.

It then asks me to refresh the authorization token, but I have no idea what that could be.

I’m asking you since you may have gone through similar problems. Thanks in any case!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @mattia_mancasso,

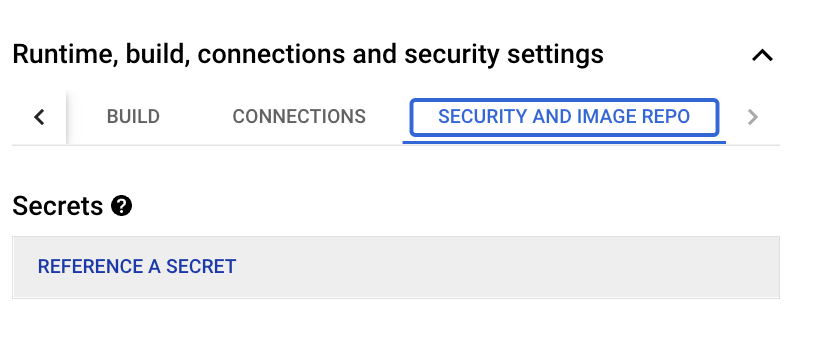

Essentially, it seems that the Cloud Function needs to allow unauthenticated access in order to be triggered from Looker → Yes, this is true (as of writing, March 2022). One workaround is to use GCP secret manager to generate an access token to prevent non-Looker users from triggering the function.

Step 1: Generate Secret Key from Cloud Functions UI

Step 2: Go to Admin → Actions, and you would be able to input the secret in authorization token here.

For your specific use case: There is a parameter called “user_attribute_param” for action hub, so you could (1) change your GCP secret keys on a schedule (2) use Looker API to update the value of the user attributes to match that secret keys and add a logic inside your code (if secret == user_attributes then execute)

https://community.looker.com/looker-api-77/setting-user-attribute-using-looker-api-28100user_attribute_param | Passes a user attribute to the receiving server. You must add the data action’s url value to the URL Allowlist for Data Actions if you are using a user_attribute_param for your data action. |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

If these all do not fulfill your security requirements, then you can spin up a whole new server and use IP allowlist. We do have source code (node js) for action hubs in our repository here

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @lantrann ,

Thank you for your contribution, this is very helpful

Any update on “I am also developing a custom action hub to send a query result to BigQuery directly from Looker UI, and hopefully I can share soon. “?

Thank you.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @lantrann ,

Thank you for your contribution, this is very helpful

Any update on “I am also developing a custom action hub to send a query result to BigQuery directly from Looker UI, and hopefully I can share soon. “?

Thank you.

Hi @Assaf_Lowenstei, thanks for checking on the solution! Here is the update:

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @lantrann ,

I am trying to invoke cloud function from Looker, but function is not getting triggered.

I have set allow_unathenticated access as well. But I am not able to set principle allusers for cloud invoker access. When I tried, I am getting pop up window as IAM policy update failed.

Can you please confirm without setting allusers principle, we will not be able to trigger from Looker?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@lantrann Any update on how to invoke a Cloud Function from Looker's Action Hub without opening the endpoint to "allUsers"? This is a blocker for our project, we can't use "allUsers".

-

access grant

1 -

actionhub

6 -

actions

66 -

Admin

3 -

alert

1 -

Analytics Block

15 -

API

159 -

Authentication

8 -

BigQuery

7 -

blocks

2 -

boards

4 -

Bug

23 -

cache

2 -

Components

40 -

connection

5 -

connection database

2 -

content access

1 -

content-validator

3 -

customdimension

1 -

Dashboards

92 -

Data

4 -

Data Sources

1 -

Database

3 -

deprecated

1 -

derivedtable

2 -

develop

2 -

development

2 -

done

3 -

downloading

1 -

drilling

3 -

dynamic

2 -

embed

60 -

Embedding

36 -

Errors

6 -

etl

2 -

explore

4 -

Explores

4 -

Extensions

15 -

feature-requests

2 -

filed

1 -

filter

35 -

folders

2 -

git

7 -

Google Sheets

1 -

googlesheets

1 -

i__looker

1 -

Integrations

1 -

javascript

5 -

json

2 -

links

1 -

liquid

1 -

Looker API

79 -

LookerStudio

1 -

lookml

38 -

lookml dashboard

3 -

looks

11 -

Marketplace

9 -

merge

2 -

modeling

1 -

mysql

1 -

Networking

1 -

on-hold

1 -

parameter

2 -

pdf

3 -

pdt

2 -

permission management

3 -

postgresql

1 -

Projects

1 -

python

26 -

pythonsdk

7 -

Query

11 -

query manager

2 -

quickstart

1 -

ReactJS

10 -

redshift

3 -

release

6 -

schedule

16 -

schedule delivery

2 -

sdk

56 -

server configuration

1 -

sharing

1 -

snowflake

2 -

sql

7 -

SSO

7 -

system activity

2 -

tests

1 -

Ui

1 -

user access management

3 -

user management

5 -

user-attributes

12 -

Views

2 -

visualizations

44 -

watch

1 -

webhook

6

- « Previous

- Next »

Twitter

Twitter