
- DevRel CI/CD Pipeline for Jenkins: The Missing Man...

The Apigee DevRel CI/CD multibranch pipeline reference implementation is a functionally complete showcase of best practices for building and testing Apigee proxy bundles.

Configuring a Jenkins pipeline that fits your needs is still a daunting task, especially for novices. The instructions are comprehensive yet terse: https://github.com/apigee/devrel/tree/main/references/cicd-pipeline#orchestration-using-jenkins

The job itself is flexible, but reusing it with confidence requires a solid Jenkins background.

There are many reasons why an API developer who is used to Jenkins Jobs might find Jenkins Pipelines unwieldy and bewildering. Yet they are the future of a solid contemporary CI/CD foundation. And with this article, there is no excuse not to add them to your SDLC repertoire.

In a nutshell, a Jenkins Pipeline is Groovy code stored in a Jenkinsfile that you keep in a repository alongside your code. The Jenkinsfile is written in a DSL that Jenkins interprets and renders as a Jenkins Job. This approach increases the level of automation of your CI/CD process.

For those who are just starting their Apigee-on-Jenkins journey, we will start from the beginning and progress step by step.

In the first part of the article we will manually build an Apigee Proxy Jenkins Job using Jenkins UI. We are going to use a sample proxy which is a part of the Apigee Deploy Maven Plugin project.

Secondly, we will create a Jenkins Job for the proxy provided in the DevRel CI/CD repository.

Thirdly, we will configure a CI/CD DevRel Multibranch Pipeline reference that contains many useful linters and plugins.

In every case, we will inspect a respective proxy repository to identify and collect information required to create a Jenkins build job/pipeline.

Jenkins Plugins

You need to make sure that the following plugins are installed:

- Multi-Branch Pipeline

- HTML Publisher

- Cucumber Reports

- Mask Passwords, https://www.jenkins.io/doc/pipeline/steps/mask-passwords/

- Rebuild, https://plugins.jenkins.io/rebuild/

Server authentication: Service Accounts and Machine Users

To interact with the Apigee Management API, we need proper authentication arrangements in place. Because Jenkins is a server, that means some kind of stored credentials.

For Apigee Edge/On-Premises, that would be a username and password (bad idea!) or an OAuth2 access token.

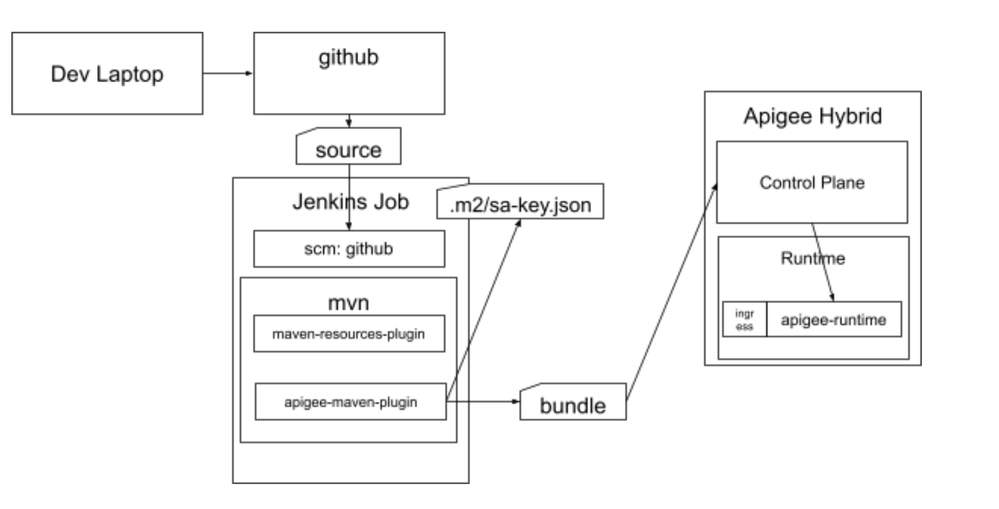

For Apigee X/hybrid, that is a service account that is used to generate an access token. Depending on the scenario, there are different ways to define a service account. If you are using GCE instances, you can associate the instance with a service account and its access scopes.

You can, of course, place a service account key file on the file system in the Jenkins user's directory with secure (0400) file permissions. That, however, requires access to the Jenkins VM.

Jenkins is highly flexible in terms of credentials configuration. You can define credentials at the global, user, and/or folder level. Credentials defined on a folder can only be used by builds within that folder. This can be configured via the Jenkins UI and/or via a Jenkinsfile for pipelines.

See the following link for details:

https://docs.cloudbees.com/docs/cloudbees-ci/latest/cloud-secure-guide/injecting-secrets

Jenkins GCP Service Account Key: File System

1. Create a service account for Jenkins and assign it the roles/apigee.environmentAdmin and roles/apigee.apiAdmin roles.

2. Create the SA key JSON file and move it to the Jenkins home directory. In this example we use the ~/.ssh directory and assign 0400 permissions to the file.
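The two steps above could be scripted roughly as follows, assuming the gcloud CLI is installed and authenticated. The project ID and service account name are hypothetical placeholders, and the helper function names are made up for this sketch; nothing runs until you call the functions.

```shell
#!/bin/sh
# Hypothetical placeholders: replace with your own project and account name.
PROJECT_ID="${PROJECT_ID:-apigee-hybrid-org}"
SA_NAME="${SA_NAME:-jenkins-apigee}"
SA_EMAIL="${SA_NAME}@${PROJECT_ID}.iam.gserviceaccount.com"

# Step 1: create the service account and grant the two Apigee roles.
create_jenkins_sa() {
  gcloud iam service-accounts create "$SA_NAME" --project "$PROJECT_ID"
  for role in roles/apigee.environmentAdmin roles/apigee.apiAdmin; do
    gcloud projects add-iam-policy-binding "$PROJECT_ID" \
      --member "serviceAccount:${SA_EMAIL}" --role "$role"
  done
}

# Restrict an existing key file to owner read-only (0400).
secure_key_file() {
  chmod 0400 "$1"
}

# Step 2: create a key file at the given path, then lock down its permissions.
create_sa_key() {
  key_file="$1"
  gcloud iam service-accounts keys create "$key_file" --iam-account "$SA_EMAIL"
  secure_key_file "$key_file"
}
```

Run as the jenkins user, `create_jenkins_sa` followed by `create_sa_key ~/.ssh/jenkins-sa.json` (an illustrative file name) should leave a key file with the same permissions as in the listing below.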

# ls -ls

total 4

4 -r--------. 1 jenkins jenkins 2320 Feb 14 19:18 apigee-hybrid-org-240fb1f84d78.json

Jenkins GCP Service Account Key: Credentials Secret file

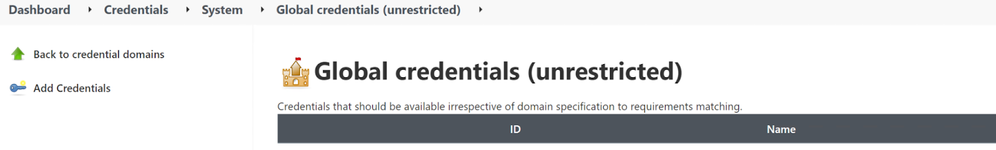

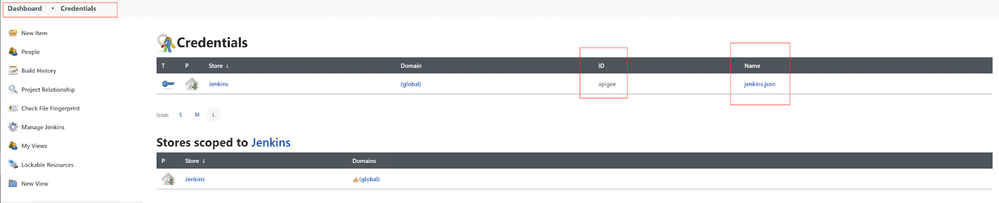

For Global domain credentials:

1. In the Jenkins UI, open the Dashboard, Credentials page. Select:

Dashboard/Manage Jenkins

Manage Credentials

2. Click on (global) domain link.

3. Click the Add Credentials link in the left-side menu.

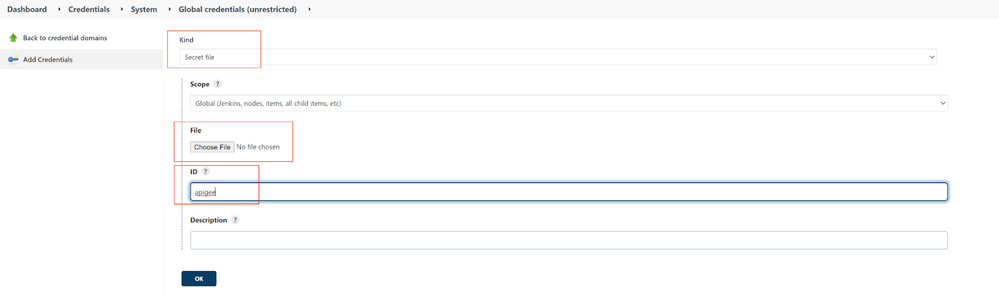

4. Select:

* Kind: Secret file;

* ID: apigee;

* File: use the file picker to select your SA JSON key file.

Jenkins Apigee Job: step-by-step

The Apigee Deploy Maven Plugin GitHub repository contains a sample proxy. We are going to use it to manually create a Jenkins job.

There are different versions of the plugin for Edge/Private Cloud and X/Hybrid.

For Hybrid, this is the correct link: https://github.com/apigee/apigee-deploy-maven-plugin/tree/hybrid.

Let's look at the pertinent information.

The proxy is built and deployed by a pom.xml file. It refers to shared-pom.xml in the parent directory, a usual DRY technique used by many developers.

A couple of lines to pay attention to:

Lines 75, 76 define version 2.x of the Deploy plugin. Version 2.x supports X/Hybrid.

<artifactId>apigee-edge-maven-plugin</artifactId>

<version>2.3.1</version>

The Maven profile test defines a number of properties that describe the Apigee instance configuration and the credentials the plugin uses to communicate with the Management API (lines 98-112).

To create a Jenkins job that executes the deploy Maven plugin:

- On the Jenkins Dashboard page, click the New Item button and select the Freestyle project type.

Name it: mock-v1

Click OK.

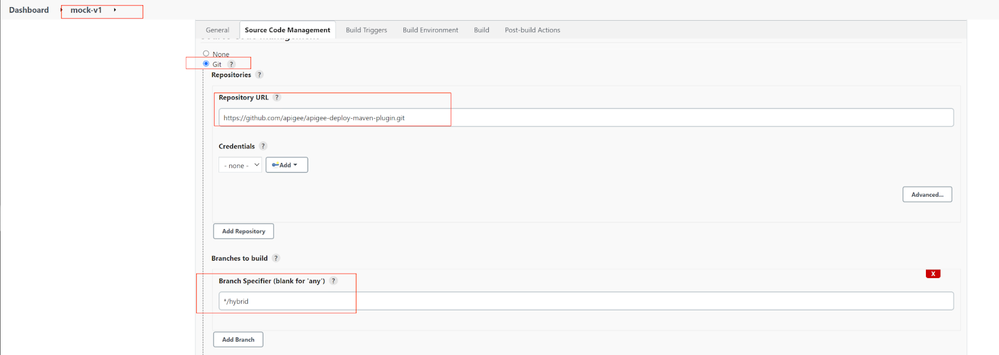

- In the Source Code Management tab, select the Git radio button and enter the plugin repository URL:

https://github.com/apigee/apigee-deploy-maven-plugin.git

- In the Branches to build/Branch Specifier field, enter

*/hybrid

- In the Build tab, press Add build step and select Invoke top-level Maven targets.

Populate the Goals field:

install -Ptest -Dorg=apigee-hybrid-org -Denv=test -Dfile=/var/lib/jenkins/.m2/apigee-org-jenkins-sa.json

The properties passed with -D override the values of the profile properties: they point to our Apigee organization and environment, as well as to the location of the SA key JSON file.

- Press Save.

- In the left-hand menu of the mock-v1 job, press the Build Now button.

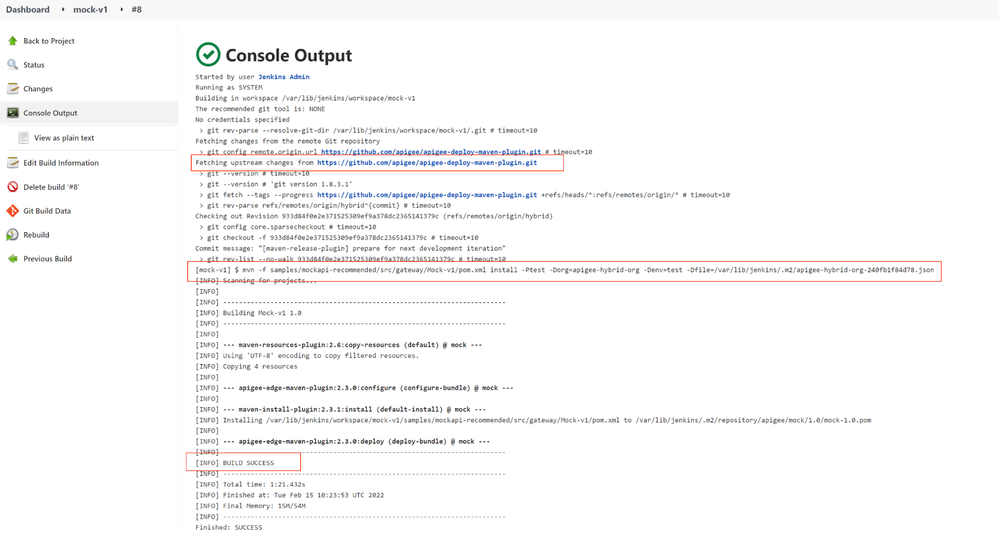

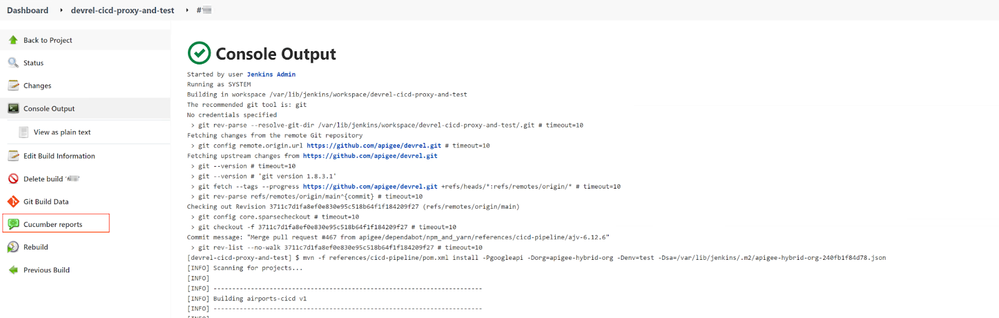

- Inspect Console Output to make sure the build executed successfully.

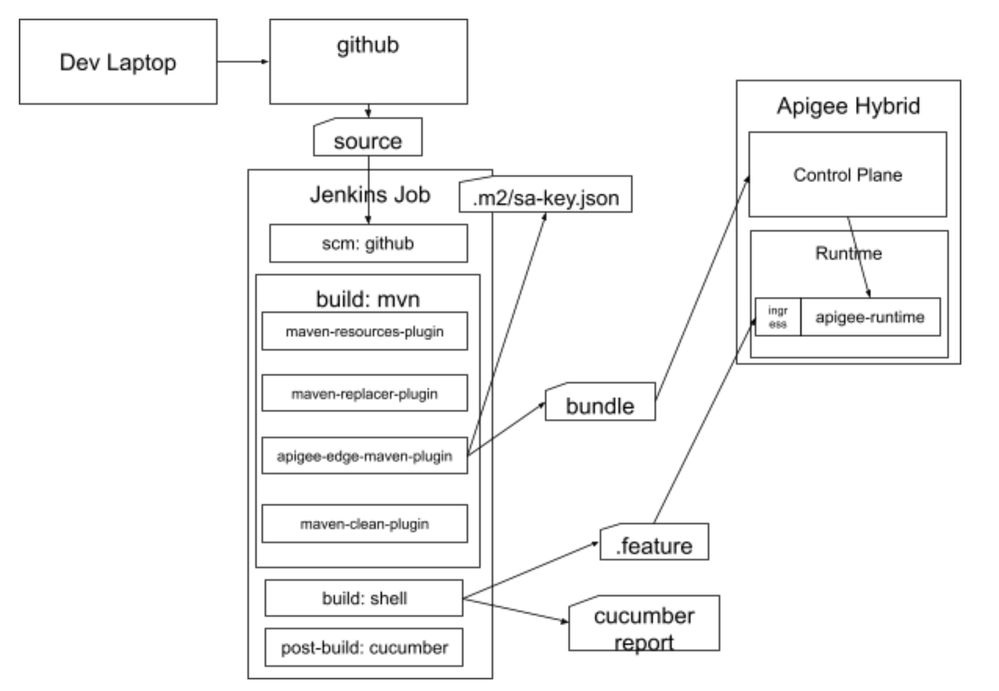
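Before committing the Goals field to a Jenkins job, it can help to try the same Maven arguments from a shell. The sketch below only composes the argument string used in the job above; the function name and its three arguments are illustrative, not part of the plugin.

```shell
#!/bin/sh
# Compose the Maven arguments used in the Goals field of the mock-v1 job.
# Arguments: Apigee organization, environment, path to the SA key JSON file.
maven_goals() {
  org="$1"; env_name="$2"; key_file="$3"
  printf 'install -Ptest -Dorg=%s -Denv=%s -Dfile=%s\n' "$org" "$env_name" "$key_file"
}
```

For example, `mvn $(maven_goals apigee-hybrid-org test /var/lib/jenkins/.m2/apigee-org-jenkins-sa.json)` would run the same build as the job, provided it is started from the directory that holds the pom.xml Jenkins builds.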

Jenkins Job: Proxy and BDD Tests, end-to-end

Let's move to the DevRel cicd-pipeline repository, https://github.com/apigee/devrel.git.

We are going to manually create a Jenkins job that builds a proxy bundle, deploys it and then runs BDD tests against it.

https://github.com/apigee/devrel/blob/main/references/cicd-pipeline/pom.xml

A different developer designed this proxy's pom file. All the relevant elements of the pom file are the same, but there are syntactical differences, such as the names of profile properties or the arrangements to build and invoke the apickli/cucumber Node.js project.

To create a Jenkins Freestyle project job:

- Press New Item and select the Freestyle project job type.

Name it: devrel-cicd-proxy-and-test

- Under the Source Code Management tab, select the Git radio button and populate the Repository URL field:

https://github.com/apigee/devrel.git

- Specify Branches to build:

*/main

- In the Build section, add an Invoke top-level Maven targets build step.

- Populate the Goals field

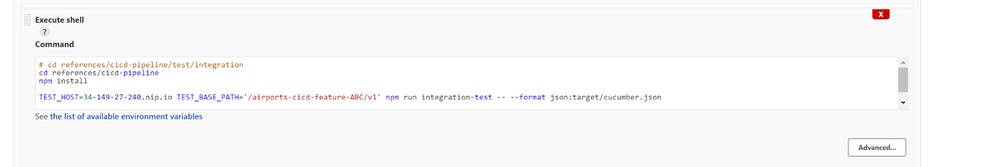

install -Pgoogleapi -Dorg=apigee-hybrid-org -Denv=test -Dsa=/var/lib/jenkins/.m2/apigee-jenkins-sa.json

- In the Build section, add an Execute shell build step and populate the command field with the following bash script snippet:

# cd references/cicd-pipeline/test/integration

cd references/cicd-pipeline

npm install

TEST_HOST=34-149-27-240.nip.io TEST_BASE_PATH='/airports-cicd-feature-ABC/v1' npm run integration-test -- --format json:target/cucumber.json

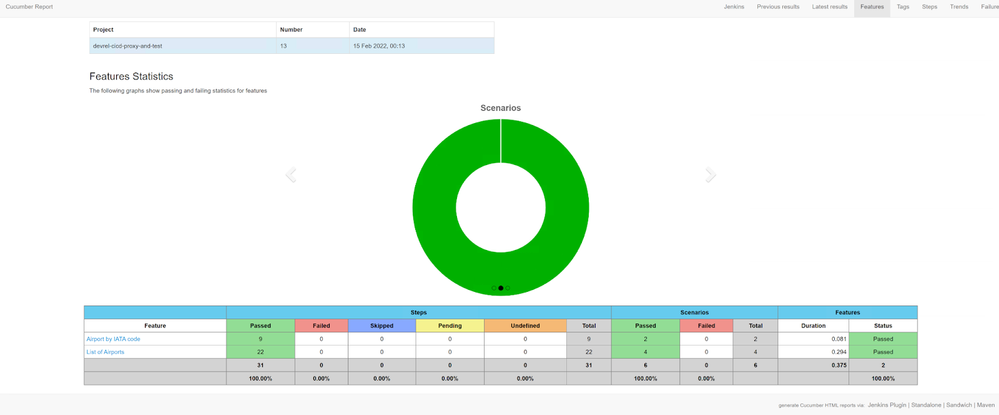

- In the Post-build Actions section, add Cucumber reports post-build action.

- Press the Save button.

The Jenkins job will be saved and built, and the reports will be generated. After you refresh the page, a new Cucumber reports link will appear.

- Click on the Cucumber reports link to investigate the BDD test results.

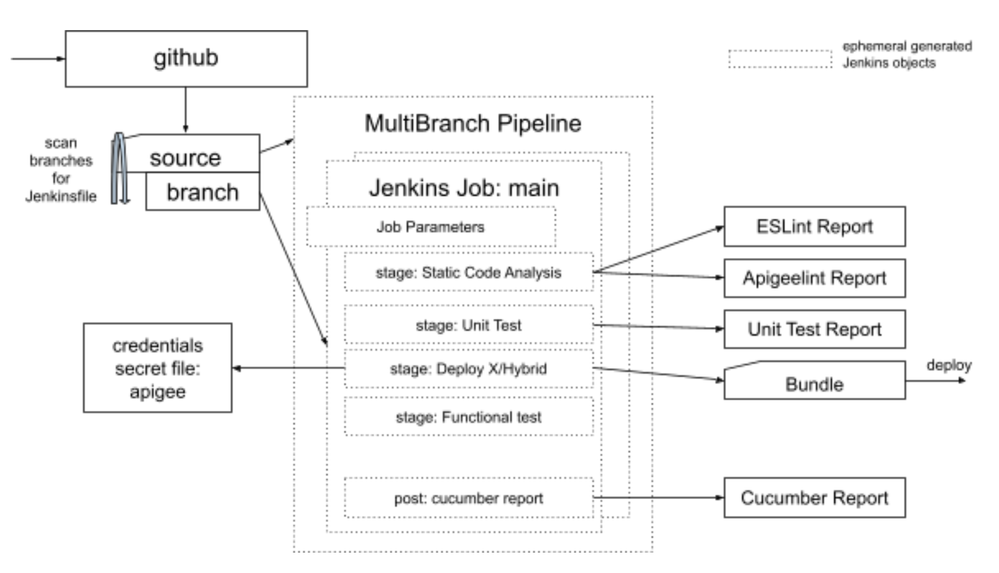

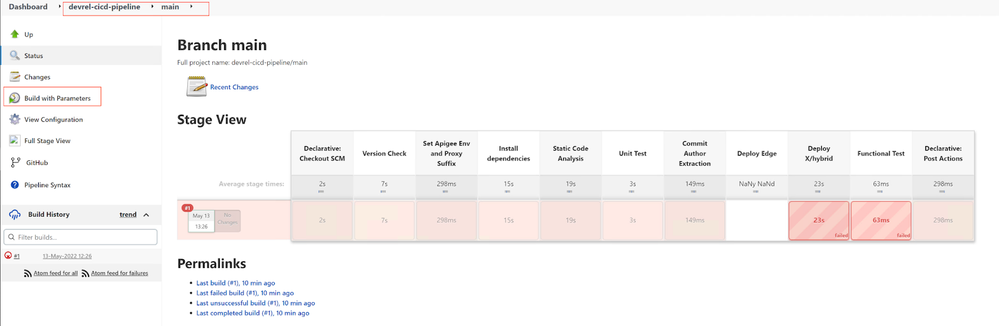
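Note that TEST_HOST in the shell step above is a bare hostname, with no scheme, port or path. If the value you have at hand is a full URL, a small helper such as the following could reduce it to the hostname first. This is a sketch with a hypothetical function name, and it does not handle IPv6 literals.

```shell
#!/bin/sh
# Reduce a URL or host[:port][/path] string to the bare hostname.
hostname_only() {
  value="$1"
  value="${value#*://}"   # drop a leading scheme such as https://
  value="${value%%/*}"    # drop any path
  value="${value%%:*}"    # drop any port
  printf '%s\n' "$value"
}
```

It could then be used in the shell step as `TEST_HOST="$(hostname_only "$PROXY_URL")"`, where PROXY_URL is an assumed variable holding the proxy URL.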

Jenkins Multibranch pipeline: composable job guided tour

The Jenkins Multibranch pipeline is a powerful way to dynamically create and execute your Jenkins jobs.

The idea is simple: bootstrapping and an executable DSL. Every repository branch is scanned for a Jenkinsfile. A Jenkinsfile is an executable script written in a Groovy-based DSL whose elements correspond to the elements of a Jenkins job; it is rendered as if it were a manually created Jenkins job.

Build with Parameters

The rendered job can be edited, but the changes will be overwritten the next time the script is fetched and built. It is better to treat the rendered job as a read-only reference. Even the button that opens a rendered job configuration is called View Configuration.

Many Jenkins job properties, such as build parameters, are not available on the first run, but afterwards they are properly reflected in the web interface. They can be changed and the job re-built using the Rebuild plugin. This creates an efficient and comfortable debugging environment.

After the parameters are fine-tuned, they can be committed to your source as defaults so that they are populated automatically.

In the case of demoing a Jenkins Apigee job, we can use this feature to re-use the DevRel Jenkins Multibranch pipeline and then manually reconfigure the job to deploy a proxy into our desired target environment. We are going to employ this technique later during the demo part.

Precedence of Parameters and Environment Variables

Nodejs Application in a nested folder structure

The DevRel repository is a monorepo (https://en.wikipedia.org/wiki/Monorepo). Amongst other considerations, this also means that our CI/CD Pipeline project is nested in a directory structure and its top level is not a project level.

When we created the manual job, the script that builds the Node.js project used a cd command to switch the current directory and then ran `npm install` with no explicit directory option.

For the multibranch pipeline's Jenkinsfile we are going to use the `stage{dir(){...}}` idiom in every stage/steps pipeline element that expects the correct current working directory. To improve the flexibility and reusability of the configuration, we also treat the working directory as a parameter and/or environment variable.

Define WORK_DIR_P and its default value in the parameters section:

string(name: 'WORK_DIR_P', defaultValue: 'references/cicd-pipeline')

If the WORK_DIR environment variable is provided, it takes precedence; otherwise the parameter value is used:

if( ! env.WORK_DIR ){ env.WORK_DIR = params.WORK_DIR_P }

Switch to the working directory for the duration of the step:

steps { dir( "${env.WORK_DIR}" ) {

...

} }
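The same precedence rule (environment variable first, parameter default second) can be tried outside Jenkins. The following is a shell analogue of the Groovy logic above, not the pipeline code itself; the function name is made up for illustration.

```shell
#!/bin/sh
# Return the effective working directory: the WORK_DIR environment variable
# if it is set and non-empty, otherwise the parameter value (first argument),
# which itself defaults to the WORK_DIR_P default from the Jenkinsfile.
effective_work_dir() {
  work_dir_p="${1:-references/cicd-pipeline}"
  printf '%s\n' "${WORK_DIR:-$work_dir_p}"
}
```

With WORK_DIR unset, `effective_work_dir` prints the parameter default; after `WORK_DIR=some/other/dir` it prints that directory instead, which is the behaviour the `if( ! env.WORK_DIR )` line gives the pipeline.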

Jenkins Job GCP Service Account Authentication Precedence for Apigee X/Hybrid

The pipeline design accommodates different ways to provide the authentication configuration for the apigee deploy maven plugin.

For the Apigee X/Hybrid variants, you can supply:

* Access Token

* VM Service Account and Workload Identity implicit Service Account json key file

* Jenkins Secret file Scope explicit Service Account json key file

The VM Service Account is a secure option for a GCP Compute Engine instance, equivalent to the Workload Identity option on GKE; the key is rotated automatically.

The Jenkins Secret file is a way to manage a specific SA JSON key file safely on a Jenkins server, in line with security best practices. You need to take care of its rotation yourself.

The access token (APIGEE_TOKEN variable) is a way to provide ad-hoc credentials for a build run, either via parameters or via environment variables.

The pipeline.sh script uses this way to pass the access token to a dockerized container (https://github.com/apigee/devrel/blob/main/references/cicd-pipeline/pipeline.sh).

TOKEN=$(gcloud auth print-access-token)

...

docker run \

...

-e GCP_SA_AUTH="token" \

-e APIGEE_TOKEN="$TOKEN" \

...

-e GCP_SA_AUTH="token" \

-e APIGEE_TOKEN="$TOKEN" \

...

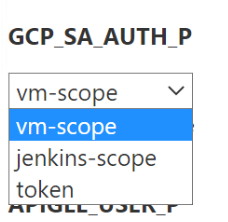

The Choice parameter statement defines vm-scope as a default parameter value.

The if statement in the Set Apigee Env and Proxy Suffix stage allows the GCP_SA_AUTH to be controlled by an environment variable.

if( ! env.GCP_SA_AUTH ){ env.GCP_SA_AUTH = params.GCP_SA_AUTH_P }

The choices are defined in the parameters{} section:

choice(name: 'GCP_SA_AUTH_P', choices: [ "vm-scope", "jenkins-scope", "token" ], description: 'GCP SA/Token Scope' )

and are rendered as a build parameter combo-box.

vm-scope, the first choice in the list, is the default value if neither the GCP_SA_AUTH_P parameter nor the GCP_SA_AUTH environment variable is supplied.

The following pipeline code snippet controls which authentication method is used:

// Token precedence: env var; jenkins-scope sa; vm-scope sa; token;

script {

if (env.GCP_SA_AUTH == "jenkins-scope") {

withCredentials([file(credentialsId: 'apigee', variable: 'GOOGLE_APPLICATION_CREDENTIALS')]) {

env.SA_TOKEN=sh(script:'gcloud auth print-access-token', returnStdout: true).trim()

}

} else if (env.GCP_SA_AUTH == "token") {

env.SA_TOKEN=env.APIGEE_TOKEN

} else { // vm-scope

env.SA_TOKEN=sh(script:'gcloud auth application-default print-access-token', returnStdout: true).trim()

}

}

The code checks the options in this order: jenkins-scope SA first, then token; any other value falls through to the vm-scope SA.
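To make the branching easier to follow, here is the same decision written as a shell `case` statement. It is only an illustration that mirrors the Groovy snippet above: it prints where the token would come from and does not call gcloud. The function name is hypothetical.

```shell
#!/bin/sh
# Describe where the pipeline takes the access token from
# for a given GCP_SA_AUTH value. Nothing is executed here.
token_source() {
  case "${1:-}" in
    jenkins-scope)
      echo "gcloud auth print-access-token, with the 'apigee' secret file as GOOGLE_APPLICATION_CREDENTIALS" ;;
    token)
      echo "APIGEE_TOKEN variable" ;;
    *)
      # Same as the final else branch: anything else is treated as vm-scope.
      echo "gcloud auth application-default print-access-token (vm-scope)" ;;
  esac
}
```

One consequence worth noting: a misspelled value such as `jenkins_scope` does not raise an error. It silently falls into the vm-scope branch, so the printed authentication value in the build log is worth checking.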

The build outputs the effective authentication schema value, which is useful for troubleshooting.

println "Apigee Authentication Schema: " + env.GCP_SA_AUTH

Example output:

Apigee Authentication Schema: jenkins-scope

Another good security practice is to mask credentials such as passwords or tokens in the console output.

This is implemented via the Mask Passwords Jenkins plugin in the pipeline code, in the Deploy X/Hybrid stage:

wrap([$class: 'MaskPasswordsBuildWrapper', varPasswordPairs: [[password: env.SA_TOKEN, var: 'SA_TOKEN']]]) {

sh """

mvn clean install \

...

-Dtoken="${env.SA_TOKEN}" \

...

"""

}

Jenkins Multibranch pipeline: create and build

We are going to set up a Jenkins Multibranch pipeline. It will scan the repository and generate a job from the main branch. Because the Jenkinsfile defaults are set for the DevRel repository, the job will fail during proxy deployment because of the wrong org/env and SA values. We will then build the job with parameters and supply the correct values for our environment, and the job will build successfully.

- In the Jenkins Dashboard, click on a New Item button in the left-side menu.

- Enter the item name `devrel-cicd-pipeline` and select Multibranch Pipeline type of job. Click OK.

The new pipeline configuration page will open.

- In the Branch Sources tab, click the Add source combo-box button. Choose the GitHub option.

- Enter the DevRel repository URL

https://github.com/apigee/devrel.git

- In the Build Configuration tab, make sure the Mode is `by Jenkinsfile` and populate the Script Path field with the relative path to the Jenkinsfile:

references/cicd-pipeline/ci-config/jenkins/Jenkinsfile

- Click the Save button at the bottom of the page.

Saving will trigger a repository scan that:

* discovers the Jenkinsfile in the main branch;

* processes it.

Checking branches...

Getting remote branches...

Checking branch main

Getting remote pull requests...

‘references/cicd-pipeline/ci-config/jenkins/Jenkinsfile’ found

Met criteria

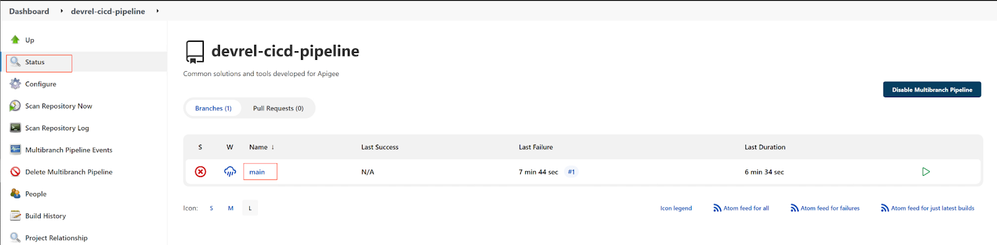

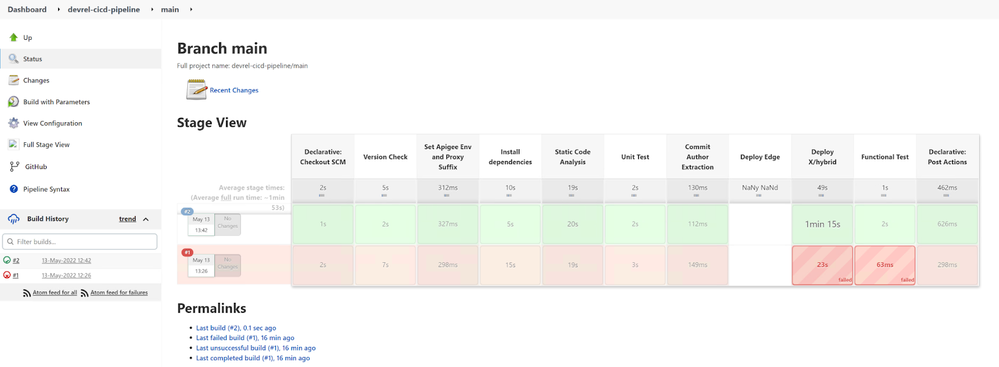

Then the `devrel-cicd-pipeline >> main` job will start to build. Most of the stages and steps will succeed, but the Deploy X/Hybrid step will fail with an authentication failure. That is expected, as the default job parameters/environment variables are not correct for our organization.

- Click on the Status menu item of the pipeline project and then the failed main job:

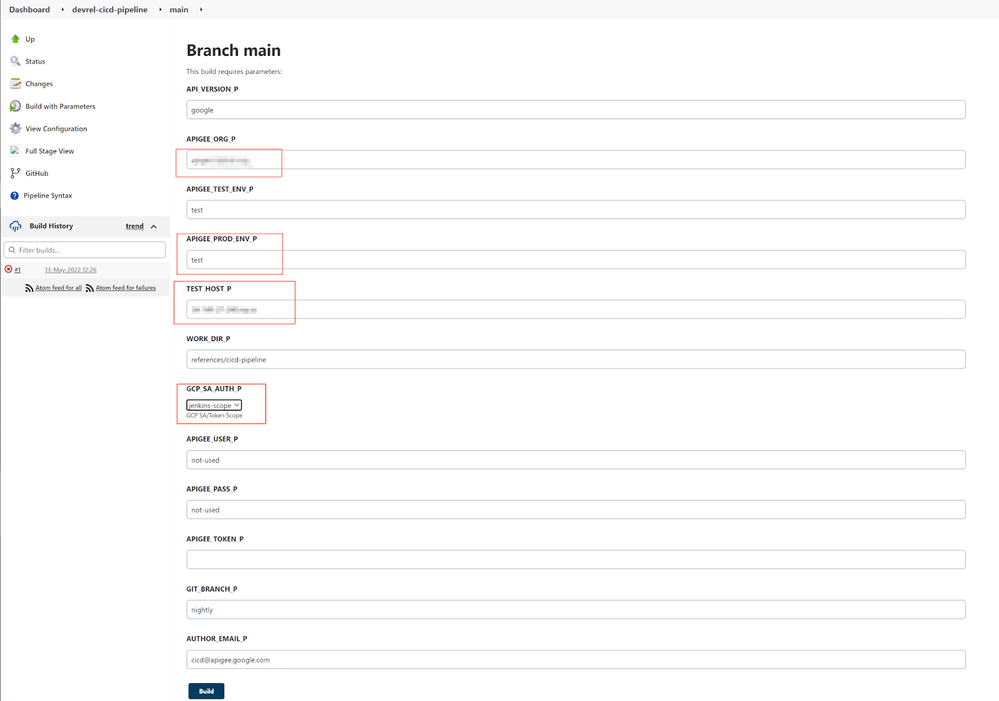

After the build finishes, a new option Build with Parameters will appear.

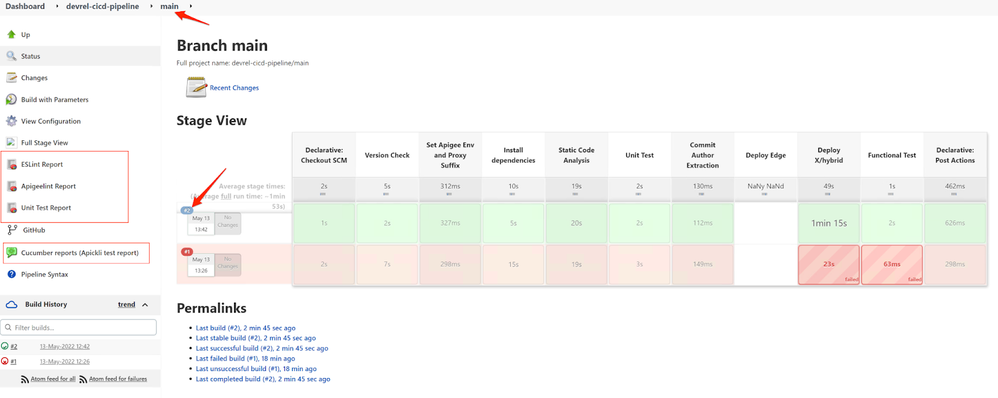

- Click on the Build with Parameters link.

Populate the correct values for APIGEE_ORG_P, APIGEE_PROD_ENV_P and TEST_HOST_P (hostname only!), and change GCP_SA_AUTH_P to the jenkins-scope option.

- Press Build.

The new build will finish successfully.

- Refresh the build page. For example, click on the main link in the breadcrumb trail.

New links for the reports will appear.

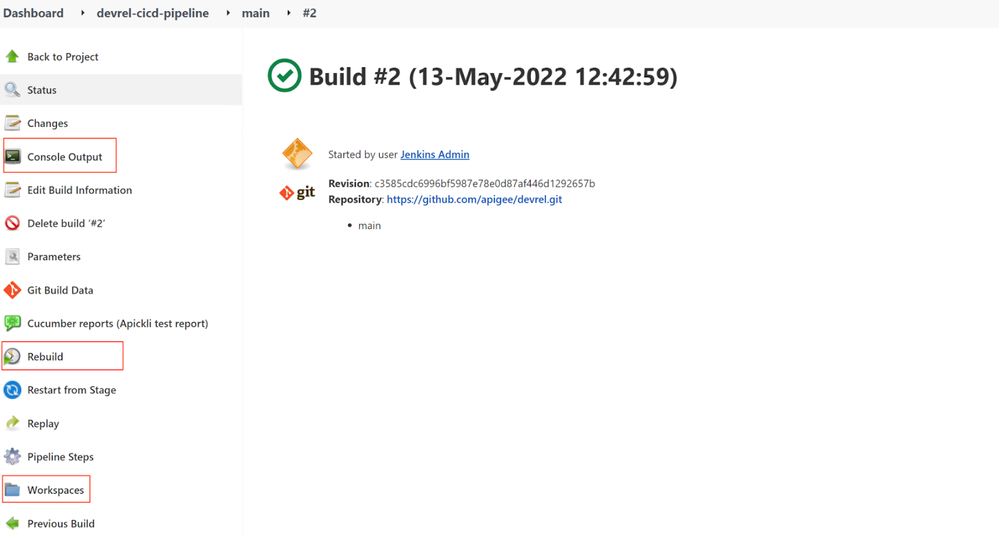

- Click on the build number #2 to open the build details.

As expected, the Console Output and Workspaces left-side menu links will be there.

If the Rebuild plugin is installed, a Rebuild button will be available to build this job again with the same set of parameters.
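The Build with Parameters action can also be triggered from a shell through Jenkins' `buildWithParameters` remote endpoint, which may be handy once the parameter values are known. The sketch below is an assumption-laden illustration: JENKINS_URL, JENKINS_USER and JENKINS_API_TOKEN are hypothetical variables you would set yourself, and the function names are made up. Nothing is sent until `trigger_build` is called.

```shell
#!/bin/sh
# Build the buildWithParameters URL for one branch of a multibranch pipeline.
# A branch name containing "/" would have to be URL-encoded by the caller.
build_url() {
  jenkins_url="${1%/}"; job="$2"; branch="$3"
  printf '%s/job/%s/job/%s/buildWithParameters\n' "$jenkins_url" "$job" "$branch"
}

# Trigger the main branch job; each argument is a NAME=value build parameter.
trigger_build() {
  url="$(build_url "$JENKINS_URL" devrel-cicd-pipeline main)"
  # Rewrite the argument list: NAME=value becomes --data-urlencode NAME=value.
  for pair in "$@"; do
    set -- "$@" --data-urlencode "$pair"
    shift
  done
  curl --fail --silent --show-error -X POST \
    --user "${JENKINS_USER}:${JENKINS_API_TOKEN}" \
    "$@" "$url"
}
```

A call might look like `trigger_build APIGEE_PROD_ENV_P=test TEST_HOST_P=34-149-27-240.nip.io GCP_SA_AUTH_P=jenkins-scope`, using the parameter names shown on the Build with Parameters page.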
