- Stats table calcs + Timeline viz = Easy A/B Testin...

Disclaimer: The statistical methods in this post are Bayesian. I strongly prefer Bayesian statistics over frequentist statistics, and I think you should too. But, that’s a post in and of itself, and there are plenty of resources easily found on the internet that do it better than I could, so I will leave it at this - if you do any statistics, but aren’t yet familiar with Bayesian statistics, please take some time to read up on them!

Looker 4.22 ships with a ton of statistical functions, including several inverse distribution functions for conjugate priors. If that didn’t make any sense and you just want to run an A/B test, worry not & read on.

Let’s say you have randomly assigned visitors to one of several variants, and collected some data like this:

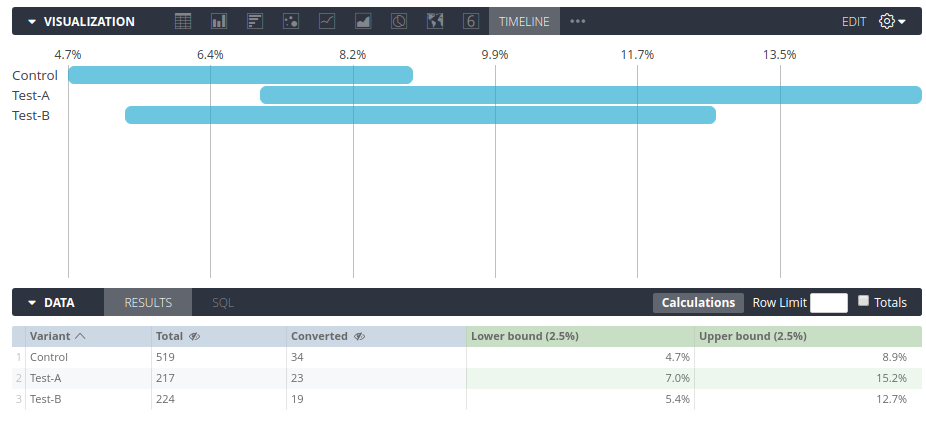

| Variant | Visitors | Converted |
|---------|----------|-----------|
| Control | 519      | 34        |
| Test-A  | 217      | 23        |
| Test-B  | 224      | 19        |

What can we conclude from this using Bayesian statistics? We can build credible intervals that tell us, with a chosen probability (for example, 95%), where the true conversion rate lies for each of these variants. The following two table calculations give the lower and upper bounds of that interval:

    beta_inv(
      0.025,
      ${...converted} + 0.5,
      ${...total} - ${...converted} + 0.5
    )

    beta_inv(
      0.975,
      ${...converted} + 0.5,
      ${...total} - ${...converted} + 0.5
    )
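For readers who want to sanity-check these bounds outside Looker, here is a minimal Python sketch. It is not what Looker does internally: instead of an exact inverse beta function, it approximates the same quantiles by sampling the posterior with the standard library's `random.betavariate`. The function name `credible_interval` and its parameters are made up for illustration.

```python
import random

def credible_interval(converted, total, level=0.95, draws=100_000, seed=0):
    """Approximate an equal-tailed credible interval for a conversion rate.

    The posterior is Beta(converted + 0.5, total - converted + 0.5),
    the same parameters passed to beta_inv in the table calculations.
    The quantiles are estimated by Monte Carlo sampling (an assumption
    of this sketch), so the results vary slightly with the seed.
    """
    rng = random.Random(seed)
    alpha = converted + 0.5
    beta = total - converted + 0.5
    samples = sorted(rng.betavariate(alpha, beta) for _ in range(draws))
    tail = (1 - level) / 2
    lower = samples[int(tail * draws)]
    upper = samples[int((1 - tail) * draws) - 1]
    return lower, upper

# Data from the table above: variant -> (visitors, converted)
variants = {"Control": (519, 34), "Test-A": (217, 23), "Test-B": (224, 19)}
for name, (visitors, converted) in variants.items():
    low, high = credible_interval(converted, visitors)
    print(f"{name}: {low:.1%} to {high:.1%}")
```

With enough draws the sampled bounds should land close to what `beta_inv` returns for 0.025 and 0.975.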

After adding these calculations, hiding the two columns we don’t want to visualize, and selecting the “Timeline” visualization, we get a chart that shows each variant’s credible interval as a horizontal bar.

From here, we can easily compare the probable conversion rates and decide to go forward with a given variant, to continue collecting more data, or to drop the experiment and try something else (for example, if the possible improvement isn’t substantial enough).

That’s it, that easy! Go A/B test!

PS. I now have another article which provides examples of comparing rates of events over time rather than proportions, and also goes into a little more detail on the theory behind conjugate priors.

Bonus: In theory, you could even use the Looker API to do Thompson sampling, which automatically weights how you assign new participants. To do so, sort your variants on the following formula and pick the top one:

    beta_inv(
      rand(),
      ${...converted} + 0.5,
      ${...total} - ${...converted} + 0.5
    )

In practice, however, it would be better for performance to just figure out a ratio once in a while, and then apply that ratio to a whole batch of participants, rather than hitting the API for each participant 😉
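The batch idea can be sketched in Python, outside Looker and without any API calls. Evaluating `beta_inv` at a uniform random number is the same as drawing one sample from the posterior, so this sketch uses `random.betavariate` for the draw. The helper names `thompson_pick` and `assignment_ratio` are hypothetical, and the counts are the ones from the table in the post.

```python
import random
from collections import Counter

def thompson_pick(stats, rng):
    """Draw one plausible conversion rate per variant and return the best variant."""
    draws = {
        name: rng.betavariate(converted + 0.5, total - converted + 0.5)
        for name, (total, converted) in stats.items()
    }
    return max(draws, key=draws.get)

def assignment_ratio(stats, rounds=10_000, seed=0):
    """Estimate how often each variant wins a Thompson draw.

    The resulting shares can be applied to a whole batch of participants
    instead of sampling once per participant.
    """
    rng = random.Random(seed)
    wins = Counter(thompson_pick(stats, rng) for _ in range(rounds))
    return {name: wins[name] / rounds for name in stats}

# variant -> (visitors, converted)
stats = {"Control": (519, 34), "Test-A": (217, 23), "Test-B": (224, 19)}
ratio = assignment_ratio(stats)
for name, share in ratio.items():
    print(f"{name}: assign {share:.1%} of the next batch")
```

Recomputing the ratio periodically, as new conversions arrive, keeps the weighting adaptive without a lookup per participant.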


To get the % formatting on the visualization, you can use an Excel-style format string in the visualization settings. For example, entering 0.00% displays percentages to two decimal places.


Hi Fabio,

Why have you added 0.5 to your alpha and beta parameters?

Thanks


@Lookerat : Beta(0.5, 0.5) is the Jeffreys prior (a common non-informative prior) for the Bernoulli distribution, and the Beta-Bernoulli update has a closed-form solution: Beta(posterior_alpha = prior_alpha + successes, posterior_beta = prior_beta + failures). The 0.5 added to each parameter is that prior.
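As a small worked example of that update, here is the arithmetic in Python for the Control row of the original post (519 visitors, 34 converted). The function name `jeffreys_posterior` is made up for illustration.

```python
def jeffreys_posterior(successes, failures, prior_alpha=0.5, prior_beta=0.5):
    """Return the Beta posterior parameters after a Beta-Bernoulli update."""
    return prior_alpha + successes, prior_beta + failures

# Control: 34 conversions out of 519 visitors, so 485 failures.
alpha, beta = jeffreys_posterior(successes=34, failures=519 - 34)
posterior_mean = alpha / (alpha + beta)

print(alpha, beta)            # the two parameters passed to beta_inv
print(f"{posterior_mean:.4f}")
```

These are the same two values the table calculations compute with `${...converted} + 0.5` and `${...total} - ${...converted} + 0.5`.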


Hi, this is really useful for visualising our A/B tests. Is there a way to calculate the probability that Test A has a higher conversion rate than Control? I guess that this probability is much higher than 5%? That probability must be that both Test A has a lower conversion rate than the interval and/or Control has a higher conversion rate than the interval.
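One possible way to estimate that probability, sketched in Python rather than as a Looker table calculation, is to sample both posteriors many times and count how often Test A comes out ahead. This is a Monte Carlo approximation under the same Jeffreys prior as the original post; the function name `prob_beats` is hypothetical.

```python
import random

def prob_beats(a, b, draws=100_000, seed=0):
    """Estimate P(rate_a > rate_b) by sampling both posteriors.

    Each argument is a (visitors, converted) pair. Both posteriors use
    the Jeffreys prior Beta(0.5, 0.5), as in the original post.
    """
    rng = random.Random(seed)
    (total_a, conv_a), (total_b, conv_b) = a, b
    wins = sum(
        rng.betavariate(conv_a + 0.5, total_a - conv_a + 0.5)
        > rng.betavariate(conv_b + 0.5, total_b - conv_b + 0.5)
        for _ in range(draws)
    )
    return wins / draws

# Test-A (217 visitors, 23 converted) versus Control (519 visitors, 34 converted)
p = prob_beats((217, 23), (519, 34))
print(f"P(Test-A beats Control) is roughly {p:.1%}")
```

Note that this compares the two posteriors directly, which is a different question from whether the two 95% intervals overlap.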
