- Export selected dashboard data to BigQuery

Hi there,

We want to enable our users to write the selected data of a dashboard to a BigQuery table. The rationale is simple:

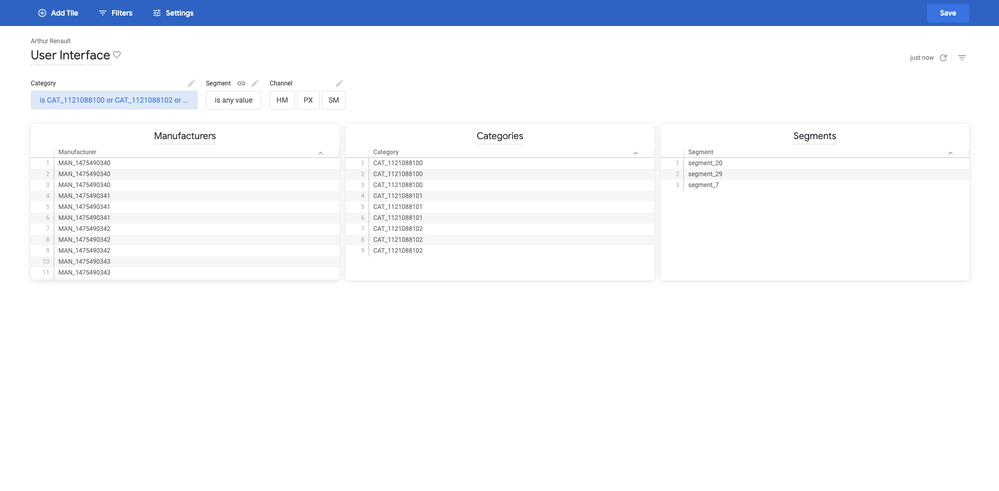

- The users select the scope of their analyses by filtering the data (see illustrative screenshot).

- They push the selected data to BigQuery.

- Our data science team runs ML models based on the selected inputs.

I found two topics discussing data export to BigQuery, but I could not adapt the code to our use case.

- https://community.looker.com/sql-10/update-write-back-data-on-bigquery-from-looks-9408

This one updates a BigQuery table from a Look, but based on a single field value.

- https://community.looker.com/open-source-projects-78/export-the-results-of-a-looker-query-to-bigquer...

This one updates a BigQuery table based on all selected values, but from an Explore.

In our scenario, we want our users to push all the selected data to BigQuery directly from the dashboard.

How can we enable it?

Best,

Arthur

Labels: BigQuery

Hi Arthur,

I’ve got three solutions for you to consider 🙂

- You could write a small server (I like to use Google Cloud Functions for this; they’re very convenient) that receives requests from Looker’s “send/schedule” modal using our Action API. Your action would declare a supported action type of “dashboard” and a supported format of “csv_zip”. One downside of this approach is that the data would have to appear in its granular form (the form the ML uses) as a tile on the dashboard, which may or may not be the UX you want for your users.

- If you are embedding (or could embed) the dashboard into a parent application, you can listen for our iframe’s JS events to know the current state of the dashboard filters, and then offer the user a button that uses our REST API to run the equivalent queries and process the data from there.

- The coolest option, but also the untested bleeding edge: if you are training a supported ML model, you could have a tile on your dashboard built on top of a derived table that uses a BQML create model statement, along with templated filters to pass in the filtered data and train the model right inline with your queries to BQ. I would also probably add a yes/no “train?” filter to the top of the dashboard so that users only run the training tile once they’re satisfied with their other filters. I wrote an article about using BQML from Looker, but it doesn’t get into making the table dynamic to user input; that would require some experimentation.
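
To make the first option a bit more concrete, here is a minimal sketch of the JSON an action list endpoint might return so that the action shows up for dashboards with the “csv_zip” format. The function name `list_actions`, the action name, the labels, and the `/execute` path are all hypothetical, and the field names reflect my understanding of the Action API, so please check them against the Action API documentation before relying on them.

```python
import json


def list_actions(base_url: str) -> dict:
    """Build the body a (hypothetical) action list endpoint could return.

    Assumption: Looker reads the "integrations" array and uses
    "supported_action_types" and "supported_formats" to decide where the
    action is offered and in which format the data is delivered.
    """
    return {
        "label": "Example hub",  # hypothetical hub label
        "integrations": [
            {
                "name": "dashboard_to_bigquery",  # hypothetical action name
                "label": "Push dashboard data to BigQuery",
                "description": "Sends each dashboard tile as CSV for loading into BigQuery.",
                # Only offer the action in the dashboard send/schedule modal.
                "supported_action_types": ["dashboard"],
                # Ask for one zip archive containing CSV files.
                "supported_formats": ["csv_zip"],
                # Endpoint Looker would call to execute the action (hypothetical path).
                "url": f"{base_url}/execute",
                "params": [],
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(list_actions("https://example.com/actions"), indent=2))
```

In a Cloud Function, the handler for the list route would simply return this dictionary as JSON; the execute route is where the actual work happens.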

Hi Fabio,

Thanks for your answer, it is very informative!

I think your first option should work perfectly well for our use case. I feel confident using Cloud Functions to act as an Action Hub for Looker. What is still unclear to me is:

- Based on the documentation, I assume I will get one CSV file per tile, and that the file names will be accessible through the “attachment” and then “data” fields of the request. Am I right?

- My Execute endpoint function will do the work: read the files and save the content to BigQuery, probably along with some authentication checks. Where is the zip directory actually located? How do I delete it once the job is done?
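
For what it is worth, here is a rough sketch of how such an Execute function could read the files. It assumes, and this is an assumption rather than something confirmed in this thread, that the zip archive arrives inline as a base64 string in `attachment.data` of the request body, with one CSV per tile inside. The helper name `read_csv_zip_attachment` is hypothetical. Under that assumption the archive only ever lives in memory, so there would be no directory to clean up afterwards.

```python
import base64
import csv
import io
import zipfile


def read_csv_zip_attachment(payload: dict) -> dict:
    """Return {csv_file_name: [row_dict, ...]} from an execute request body.

    Assumption: payload["attachment"]["data"] holds a base64-encoded zip
    archive containing one CSV file per dashboard tile.
    """
    zip_bytes = base64.b64decode(payload["attachment"]["data"])
    tables = {}
    # Open the archive from memory; nothing is written to disk.
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        for name in archive.namelist():
            if not name.lower().endswith(".csv"):
                continue  # skip anything that is not a CSV file
            # "utf-8-sig" drops a byte order mark if one is present.
            text = archive.read(name).decode("utf-8-sig")
            reader = csv.DictReader(io.StringIO(text, newline=""))
            tables[name] = list(reader)
    return tables
```

Each list of row dictionaries could then be handed to a BigQuery load step of your choice, after whatever authentication checks the function performs on the incoming request.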

FYI: your 3rd option is what we are heading toward so it is really cool to see what you have achieved using both Looker & BQML!

Have a good day,

Arthur
